Happy to announce that the paper introducing our Misinformation Game simulator (led by the amazing Lucy Butler) is out in Behavior Research Methods today: https://rdcu.be/dgCo6

It's a social-media simulation for experimental research. Full experimental control, open source, Qualtrics integration, no coding skills required.

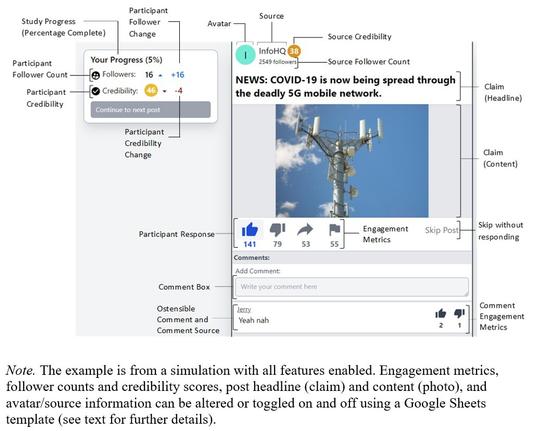

Posts can be text, image (incl. GIF), or both. You can choose between a feed mode and page-wise presentation.

You can edit source handles and avatars, as well as engagement metrics (e.g. number of likes). There's a source-credibility badge and follower counts. Source-post assignments can range from fully random to fully determined.

Participants can like, dislike, share, or flag content, and they can comment. Their credibility score and follower count change dynamically based on the choices they make. And all these features can easily be switched on or off.

Note that posts *can* be classified as true vs. false, but they don't have to be. We developed the tool with misinformation experiments in mind, but it can be used for many other purposes. We hope it'll be useful!

Full access here: https://misinfogame.com