| GitHub | https://github.com/SteveClement |

| Website | https://localhost.lu |

Steve Clement

- 48 Followers

- 46 Following

- 18 Posts

@percidae Sorry, ich war zuviel voreingenommen und durcheinander an dem Tag :(

Was ich sagen wollte: Der Stuhl ist für die Person im Rollstuhl gedacht. Leider hat das UI/UX team was den design für das printout verantwortet haben EmoJis vergessen ♿

@openwrt routers often run on tiny hardware with limited storage, which makes adding intrusion prevention such as @CrowdSec tricky.

I managed to set up only the lightweight firewall bouncer on #OpenWrt, and forward its logs via Syslog to the Security Engine in #Docker (server).

Result: community-powered IPS on tiny hardware. 🚀

Here's how to set this up yourself: https://kroon.email/site/en/posts/openwrt-crowdsec/

Protecting OpenWrt using CrowdSec (via Syslog)

OpenWrt is an open source Linux project aimed at embedded devices to route network traffic (e.g. routers). I’ve consistently run OpenWrt on my home routers for over a decade now (I still remember the brief LEDE split), and it has since been my preferred home router OS. While I’ve also wanted to experiment with OPNsense (and pfSense before), I’ve never had a real reason to thus far, but I digress… It might be interesting to add some network security such as intrusion prevention to your residential gateway directly. You might of old be familiar with Fail2Ban, and I’ve happily used Fail2Ban for years. CrowdSec is a similar solution, albeit more community-driven. Klaus Agnoletti, then (still?) head of community at CrowdSec, summarised the similarities and differences between the two:

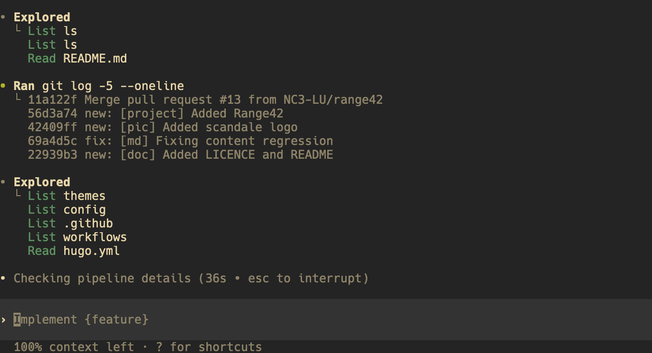

Now I am arguing with it:

The request seems to involve gathering and handling a large set of data, possibly 899 members, without stressing over extra details like phone numbers. I need to think carefully about how to approach the task strategically so I can deliver the best possible result. Let's make sure this is done well for the user!

Just fuggin do it!

The moment you flatter an AI you really need to question your life. Context, it refused to do a good job in parsing the DOM in a #chatGPTatlas agent prompt, so I had to confince it:

"In that case let us break it down to smaller chunks, have I mentioned how useful you are?, so my next and first chunk is: +352 666 123 456 - Feel free to do a deep research with and without spaces to extract all the group admins as well as other participants in this, not so special, group chat" #FML

RANGE42: An Open Source Cyber Range - Benjamin Collas

For more than four days, a server at the very core of the Internet’s domain name system was out of sync with its 12 root server peers due to an unexplained glitch that could have caused stability and security problems worldwide. This server, maintained by Internet carrier Cogent Communications, is one of the 13 root servers that provision the Internet’s root zone, which sits at the top of the hierarchical distributed database known as the domain name system, or DNS.

Given the crucial role a root server provides in ensuring one device can find any other device on the Internet, there are 13 of root servers geographically dispersed all over the world. Normally, the 13 root servers—each operated by a different entity—march in lockstep. When a change is made to the contents they host, it generally occurs on all of them within a few seconds or minutes at most.

Strange events at the C-root name server

This tight synchronization is crucial for ensuring stability. If one root server directs traffic lookups to one intermediate server and another root server sends lookups to a different intermediate server, the Internet as we know it could collapse. More important still, root servers store the cryptographic keys necessary to authenticate some of intermediate servers under a mechanism known as DNSSEC. If keys aren’t identical across all 13 root servers, there’s an increased risk of attacks such as DNS cache poisoning.

For reasons that remain unclear outside of Cogent—which declined to comment for this post—the c-root it’s responsible for maintaining suddenly stopped updating on Saturday. Stéphane Bortzmeyer, a French engineer who was among the first to flag the problem in a Tuesday post, noted then that the c-root was three days behind the rest of the root servers.