This isn't just about AI, and I hope my friends who run their own experiments with local LLMs, and who use chatbots as a sounding board rather than as an obsequious servant or unpaid robotic coding intern, understand I'm not talking about them.

We (the US) have a president who wants all the respect of the position without doing the work of being a national executive or showing competence and vision in leadership, a Secretary of Defense trolled into a half-assed, doomed failure of an intervention, and an HHS chief and all his giblet-brained underlings who fancy themselves health professionals armed with homeopathic levels of ability and overinflated delusions of adequacy. Don't forget Brilliant Auto Business Genius, whose flagship project is a low-poly asset from rejected concept art for 1997's "Carmageddon" with sales worse than the Edsel. Every C-suite malingerer whose primary competencies are being tall, white, male, and credulously overconfident, and who wants all the monies but doesn't want employees, accountability, or a product or service anyone wants or needs. "Gig" employers that cosplay as banks, hotels, taxi services, and delivery services. Web search engines that spew randomized text rather than links to authoritative and correct information sources.

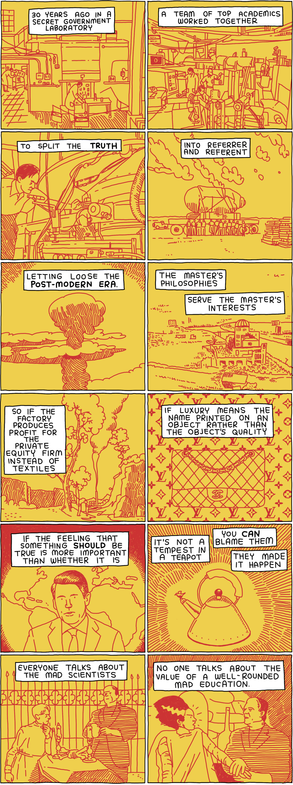

Atlas shrugged, then laid off everyone who knew what they were doing because his best friend ChatGPT told him to. It's the same societal endgame, but there is no Galt's Gulch full of Libertarian Übermenschen, just hundreds of thousands of idled professionals helplessly watching toddlers "driving" fire trucks, "flying" planes, "writing" software, "creating" art, etc. A societal disaster, a complete civilizational self-own, promulgated by modern-day tulip speculators and assorted fascist-adjacent financiers.

I don't see any of this getting better until the adults among us pick up the toddlers, take away their toys, and put them all down for a nice long nap.