We used JAX-based just-in-time (JIT) compilation during training, which let us train models on GPU, TPU or CPU. On several platforms this sped up training more than 3000-fold, cutting training time from days to minutes.
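Here is a minimal sketch of JIT-compiled training in JAX. It compiles a whole gradient step with `jax.jit`. The model and loss are hypothetical stand-ins (a linear readout with mean-squared error), not the SNN used in this work, and the learning rate is an arbitrary choice.

```python
import jax
import jax.numpy as jnp

# Hypothetical stand-in model: a linear readout trained with mean-squared error.
def loss_fn(params, x, y):
    pred = x @ params["w"] + params["b"]
    return jnp.mean((pred - y) ** 2)

@jax.jit  # traced and compiled by XLA on the first call, then reused
def train_step(params, x, y, lr=0.1):
    loss, grads = jax.value_and_grad(loss_fn)(params, x, y)
    # Plain SGD, applied to every leaf of the parameter pytree
    new_params = jax.tree_util.tree_map(lambda p, g: p - lr * g, params, grads)
    return new_params, loss

# Usage: fit synthetic data generated from known weights
key = jax.random.PRNGKey(0)
x = jax.random.normal(key, (64, 3))
true_w = jnp.array([1.0, -2.0, 0.5])
y = x @ true_w
params = {"w": jnp.zeros(3), "b": jnp.array(0.0)}
for _ in range(200):
    params, loss = train_step(params, x, y)
```

`train_step` is compiled once and then reused, so per-step Python overhead disappears. The same code runs unchanged on CPU, GPU or TPU, depending on which JAX backend is installed.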

By combining differentiable computing with a hardware-aware neuron model and a model of parameter variation applied during training, we build applications that are robust to device mismatch. For deployment, we use an optimisation-based approach to parameter quantisation and mapping onto the hardware.
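A common way to make trained parameters robust to mismatch is to perturb them randomly on every training step. The optimiser then only settles on solutions that still work under variation. The sketch below rests on three assumptions. First, mismatch is modelled as independent multiplicative Gaussian noise. Second, the noise has a hypothetical 20% standard deviation. Third, a toy `tanh` readout stands in for the hardware-aware neuron model. The mismatch and neuron models actually used for deployment may differ.

```python
import jax
import jax.numpy as jnp

MISMATCH_STD = 0.2  # hypothetical relative std dev of device mismatch

def apply_mismatch(params, key, std=MISMATCH_STD):
    """Return a copy of params with independent multiplicative Gaussian noise."""
    leaves, treedef = jax.tree_util.tree_flatten(params)
    keys = jax.random.split(key, len(leaves))
    noisy = [p * (1.0 + std * jax.random.normal(k, p.shape))
             for p, k in zip(leaves, keys)]
    return jax.tree_util.tree_unflatten(treedef, noisy)

def loss_fn(params, x, y):
    # Toy tanh readout standing in for a hardware-aware neuron model
    pred = jnp.tanh(x @ params["w"])
    return jnp.mean((pred - y) ** 2)

def robust_loss(params, key, x, y):
    # Loss on a freshly mismatched copy; gradients flow back to the nominal params
    return loss_fn(apply_mismatch(params, key), x, y)

@jax.jit
def train_step(params, key, x, y, lr=0.05):
    loss, grads = jax.value_and_grad(robust_loss)(params, key, x, y)
    return jax.tree_util.tree_map(lambda p, g: p - lr * g, params, grads), loss

# Usage: draw a new mismatch sample on every step
key = jax.random.PRNGKey(0)
params = {"w": 0.1 * jnp.ones(4)}
x, y = jnp.ones((8, 4)), jnp.zeros(8)
for _ in range(10):
    key, subkey = jax.random.split(key)
    params, loss = train_step(params, subkey, x, y)
```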

Building applications for mixed-signal neuromorphic processors, and deploying them, has historically been difficult. These processors are often highly complex yet offer few independently configurable parameters, so parameters are highly constrained across a network.
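To make the quantisation side of this concrete, here is a hypothetical sketch. All weights of a layer share one scale factor and are stored as low-bit signed integers. A brute-force search over candidate scales minimises the squared reconstruction error. This search stands in for an optimisation-based quantiser and is not the deployment tool described here. The 4-bit width is also an assumption.

```python
import numpy as np

def quantise_shared_scale(w, n_bits=4, n_candidates=200):
    """Quantise weights to signed integers that share one scale factor.

    Hypothetical sketch: grid-search the scale that minimises the squared
    reconstruction error, as a stand-in for a real optimisation-based quantiser.
    """
    q_max = 2 ** (n_bits - 1) - 1          # e.g. 7 for 4-bit signed integers
    w_abs_max = np.max(np.abs(w))
    if w_abs_max == 0:
        return np.zeros_like(w, dtype=int), 1.0
    best_err, best_q, best_scale = np.inf, None, None
    # Candidate scales range from "clip nothing" down to heavy clipping
    for scale in np.linspace(w_abs_max / q_max, w_abs_max / (4 * q_max), n_candidates):
        q = np.clip(np.round(w / scale), -q_max, q_max).astype(int)
        err = np.sum((q * scale - w) ** 2)
        if err < best_err:
            best_err, best_q, best_scale = err, q, scale
    return best_q, best_scale

# Usage: quantise a random weight vector to 4 bits with one shared scale
rng = np.random.default_rng(0)
w = rng.normal(size=100)
q, s = quantise_shared_scale(w, n_bits=4)
```

Accepting some clipping of the largest weights often reduces the total error, because the remaining weights then get finer resolution.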

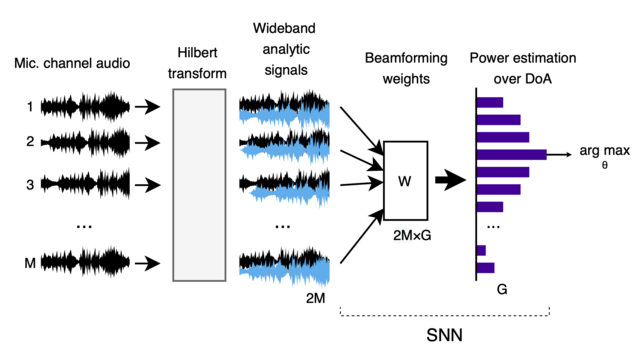

As a result, we use *far* fewer implementation resources for beamforming than standard approaches. Because we use an SNN, our system is also very power efficient, while still achieving state-of-the-art accuracy for SNNs, comparable with standard super-resolution methods such as MUSIC [3]!

Using the Hilbert Transform, we developed a single beamforming approach that works well for narrowband signals and, by using all frequencies of a wideband signal, also works well for wideband ones!
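The Hilbert Transform turns a real microphone signal into a complex analytic signal whose angle is the instantaneous phase, with no bank of band-pass filters. Here is a minimal NumPy sketch using the standard FFT construction, the same one `scipy.signal.hilbert` uses. The sampling rate and test tone are illustrative choices.

```python
import numpy as np

def analytic_signal(x):
    """Analytic signal x + j*H{x}, computed via the FFT."""
    n = len(x)
    X = np.fft.fft(x)
    h = np.zeros(n)
    if n % 2 == 0:
        h[0] = h[n // 2] = 1.0   # keep DC and Nyquist bins unchanged
        h[1:n // 2] = 2.0        # double positive frequencies
    else:
        h[0] = 1.0
        h[1:(n + 1) // 2] = 2.0
    # Negative-frequency bins stay zero, which removes them
    return np.fft.ifft(X * h)

# Usage: a 500 Hz tone sampled at 16 kHz
fs = 16000
t = np.arange(1600) / fs
x = np.cos(2 * np.pi * 500 * t)
z = analytic_signal(x)
phase = np.angle(z)      # instantaneous phase in radians
amplitude = np.abs(z)    # instantaneous envelope
```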

We took a different approach, designed for arrays with more than two microphones. We start with the Hilbert Transform, which we use to estimate the phase of each microphone signal, and then encode those phases as spikes. Finally, we use a beamforming method to estimate the source direction.
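The event encoding itself is not spelled out here. The sketch below shows one simple hypothetical scheme. It emits one spike per cycle, at the moment the wrapped phase of the analytic signal passes through ±π, so the spike times carry the phase. It is an illustration, not necessarily the encoding used in the paper.

```python
import numpy as np

def phase_to_spikes(z):
    """Hypothetical encoder: one event per cycle, when the wrapped phase passes pi.

    z: complex analytic signal. Returns sample indices of spikes.
    """
    phase = np.angle(z)                  # wrapped to [-pi, pi]
    wraps = np.diff(phase) < -np.pi      # large negative jump: phase just passed pi
    return np.flatnonzero(wraps) + 1     # first sample after each wrap

# Usage: a 500 Hz tone at 16 kHz (32 samples per cycle), and a copy delayed by 3 samples
fs, f = 16000, 500.0
t = np.arange(1600) / fs
z = np.exp(1j * (2 * np.pi * f * t + 0.1))
z_delayed = np.exp(1j * (2 * np.pi * f * (t - 3 / fs) + 0.1))
spikes = phase_to_spikes(z)
```

Delaying the input by three samples shifts every spike by three samples. That timing shift is exactly the phase information a downstream beamformer needs.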

Most SNN implementations of sound source localization take this approach, using the precise differences in spike times generated by a single-frequency sound at two microphones to estimate the location of an audio source.
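The geometry behind this two-microphone approach can be sketched in ordinary NumPy code rather than as an SNN. For a far-field source, the delay between microphones a distance `d` apart is `d * sin(theta) / c`. For a single tone, spike-time differences are only known modulo one period, so the sketch wraps them to the nearest delay. The speed of sound, microphone spacing and tone frequency are assumptions, and pairing spikes by index is a simplification.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, assumed (air at roughly 20 degrees C)

def direction_from_spike_times(spikes_a, spikes_b, mic_distance, freq):
    """Estimate the source angle (degrees) from spike times (s) of one tone at two mics.

    Assumes a far-field source, so the delay is d * sin(theta) / c.
    Differences are wrapped into [-T/2, T/2), which is unambiguous only if d < c / (2 f).
    """
    period = 1.0 / freq
    n = min(len(spikes_a), len(spikes_b))
    dt = np.asarray(spikes_b[:n]) - np.asarray(spikes_a[:n])
    dt = (dt + period / 2) % period - period / 2   # remove whole-cycle pairing errors
    delay = np.median(dt)
    s = np.clip(delay * SPEED_OF_SOUND / mic_distance, -1.0, 1.0)
    return np.degrees(np.arcsin(s))

# Usage: a 500 Hz tone from 30 degrees, microphones 10 cm apart
f, d = 500.0, 0.1
delay = d * np.sin(np.deg2rad(30)) / SPEED_OF_SOUND
spikes_a = np.arange(20) / f + 3e-4
spikes_b = spikes_a + delay
angle = direction_from_spike_times(spikes_a, spikes_b, d, f)
```

The wrap step is also why two-microphone timing schemes work best at low frequencies. Once the spacing exceeds half a wavelength, several directions produce the same wrapped delay.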

Mammals exploit the fact that sounds from different directions produce very precise differences in arrival time between the two ears, known as inter-aural time differences (ITDs). ITDs are encoded by differences in the timing of the neuronal spikes produced by our cochleas [2].
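Here is a back-of-the-envelope estimate of how precise these differences are. It uses a simple free-field model that ignores diffraction around the head, and assumes an ear separation of about 0.2 m and a speed of sound of 343 m/s:

$$
\Delta t(\theta) = \frac{d \sin\theta}{c}, \qquad
\Delta t_{\max} = \frac{0.2\,\mathrm{m}}{343\,\mathrm{m/s}} \approx 583\,\mu\mathrm{s}, \qquad
\Delta t(1^\circ) = \frac{0.2 \sin(1^\circ)}{343} \approx 10\,\mu\mathrm{s}.
$$

Under these assumptions, resolving a one-degree change straight ahead requires timing precision of roughly ten microseconds.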

We've built a new system for sound source localization, based on spiking neural networks (SNNs), that sets a new state-of-the-art for SNN implementations, is extremely power efficient, and even matches the accuracy of standard DSP-based approaches! [1]

https://arxiv.org/abs/2402.11748

Low-power SNN-based audio source localisation using a Hilbert Transform spike encoding scheme

Sound source localisation is used in many consumer devices to isolate audio from individual speakers and reject noise. Localisation is frequently accomplished by "beamforming", which combines phase-shifted audio streams to increase power from chosen source directions, under a known microphone array geometry. Dense band-pass filters are often needed to obtain narrowband signal components from wideband audio. These approaches achieve high accuracy, but narrowband beamforming is computationally demanding and not ideal for low-power IoT devices. We demonstrate a novel method for sound source localisation on arbitrary microphone arrays, designed for efficient implementation in ultra-low-power spiking neural networks (SNNs). We use a Hilbert transform to avoid dense band-pass filters, and introduce a new event-based encoding method that captures the phase of the complex analytic signal. Our approach achieves state-of-the-art accuracy for SNN methods, comparable with traditional non-SNN super-resolution beamforming. We deploy our method to low-power SNN inference hardware, with much lower power consumption than super-resolution methods. We demonstrate that signal processing approaches co-designed with spiking neural network implementations can achieve much improved power efficiency. Our new Hilbert-transform-based method for beamforming can also improve the efficiency of traditional DSP-based signal processing.
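For reference, here is a sketch of the classic narrowband delay-and-sum beamformer that the abstract describes. Each microphone's analytic signal is phase-shifted by a steering vector for a candidate direction, the channels are summed, and the direction with the highest output power wins. The 8-microphone circular array, its 4.5 cm radius, the 1 kHz tone and c = 343 m/s are all hypothetical. This is the conventional baseline, not the paper's Hilbert-transform method or MUSIC.

```python
import numpy as np

C = 343.0  # speed of sound in m/s (assumed)

def mic_positions(n_mics, radius):
    """Hypothetical uniform circular array: (n_mics, 2) coordinates in metres."""
    phi = 2 * np.pi * np.arange(n_mics) / n_mics
    return radius * np.stack([np.cos(phi), np.sin(phi)], axis=1)

def steering_vector(theta, pos, freq):
    """Far-field narrowband steering vector for a source at angle theta (radians)."""
    u = np.array([np.cos(theta), np.sin(theta)])  # unit vector towards the source
    tau = -pos @ u / C                            # arrival time relative to array centre
    return np.exp(-2j * np.pi * freq * tau)

def beam_power(z, pos, freq, thetas):
    """Delay-and-sum output power for each candidate angle.

    z: (n_mics, n_samples) complex analytic signals of a narrowband source.
    """
    powers = []
    for th in thetas:
        a = steering_vector(th, pos, freq)
        y = a.conj() @ z                          # phase-align, then sum across mics
        powers.append(np.mean(np.abs(y) ** 2))
    return np.array(powers)

# Usage: simulate a 1 kHz plane wave from 60 degrees and scan a 1-degree grid
fs, f = 16000, 1000.0
pos = mic_positions(8, 0.045)
t = np.arange(512) / fs
z = steering_vector(np.deg2rad(60), pos, f)[:, None] * np.exp(2j * np.pi * f * t)[None, :]
thetas = np.deg2rad(np.arange(360))
estimate = np.rad2deg(thetas[np.argmax(beam_power(z, pos, f, thetas))])
```

For wideband audio, this procedure must be repeated for each narrow frequency band after band-pass filtering. That repetition is where the computational cost mentioned in the abstract comes from.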

Sound source localization is an important part of how we process sound. We use it to focus on someone talking to us in a noisy environment. Smart home speakers use it to detect when someone is speaking, focus on their voice and reject background noise.