Low-power SNN-based audio source localisation using a Hilbert Transform spike encoding scheme

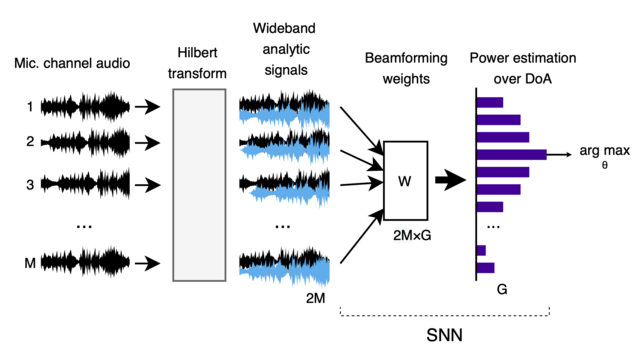

Sound source localisation is used in many consumer devices to isolate audio from individual speakers and reject noise. Localisation is frequently accomplished by "beamforming", which combines phase-shifted audio streams to increase power from chosen source directions, under a known microphone array geometry. Dense band-pass filters are often needed to obtain narrowband signal components from wideband audio. These approaches achieve high accuracy, but narrowband beamforming is computationally demanding and not ideal for low-power IoT devices. We demonstrate a novel method for sound source localisation on arbitrary microphone arrays, designed for efficient implementation in ultra-low-power spiking neural networks (SNNs). We use a Hilbert transform to avoid dense band-pass filters, and introduce a new event-based encoding method that captures the phase of the complex analytic signal. Our approach achieves state-of-the-art accuracy for SNN methods, comparable with traditional non-SNN super-resolution beamforming. We deploy our method to low-power SNN inference hardware, with much lower power consumption than super-resolution methods. We demonstrate that signal processing approaches co-designed with spiking neural network implementations can achieve much improved power efficiency. Our new Hilbert-transform-based method for beamforming can also improve the efficiency of traditional DSP-based signal processing.

The major benefit of our approach is that it works for *any* signal, e.g. wideband speech, and not just narrowband sine waves.
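To make the idea of "the phase of the complex analytic signal" concrete, here is a minimal sketch in Python using only NumPy. It computes the analytic signal with the standard FFT construction of the Hilbert transform, then emits one event each time the instantaneous phase completes a full cycle. The function names (`analytic_signal`, `phase_cycle_events`) and the cycle-counting rule are illustrative assumptions and are not the encoding scheme from the preprint.

```python
import numpy as np


def analytic_signal(x):
    """Complex analytic signal of a real 1-D signal, via the FFT method."""
    x = np.asarray(x, dtype=float)
    n = x.size
    gain = np.zeros(n)
    gain[0] = 1.0                      # keep the DC component
    if n % 2 == 0:
        gain[n // 2] = 1.0             # keep the Nyquist component
        gain[1:n // 2] = 2.0           # double the positive frequencies
    else:
        gain[1:(n + 1) // 2] = 2.0     # double the positive frequencies
    # Negative frequencies keep a gain of zero and are removed.
    return np.fft.ifft(np.fft.fft(x) * gain)


def phase_cycle_events(x):
    """Illustrative stand-in encoder (an assumption, not the paper's scheme):
    return the sample indices at which the unwrapped instantaneous phase
    passes upwards through a multiple of 2*pi."""
    phase = np.unwrap(np.angle(analytic_signal(x)))
    cycles = np.floor(phase / (2 * np.pi))
    return np.flatnonzero(np.diff(cycles) > 0) + 1


# Hypothetical test tone: 50 Hz, sampled at 1 kHz for one second.
fs = 1000
t = np.arange(fs) / fs
tone = np.cos(2 * np.pi * 50 * t + 0.1)
events = phase_cycle_events(tone)
print(len(events), "phase-cycle events in 1 s")
```

The same two functions accept any real signal, including wideband audio, because the analytic signal is defined without assuming a single centre frequency.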

Beamforming works by "steering" the microphone array towards a chosen direction, by combining the audio signal from each microphone. Usually this is done by assuming a particular frequency for the source signal (the "narrowband" regime).
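As a rough illustration of the narrowband regime described above, the following sketch implements a conventional delay-and-sum beamformer in Python with NumPy. It assumes a far-field source, a circular array, and a single known source frequency. The array geometry (8 microphones, 4.5 cm radius), the 2 kHz tone, and all function names are hypothetical choices for the example and are not taken from the preprint.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at room temperature


def mic_delays(angles, mic_angles, radius):
    """Far-field arrival delays in seconds, relative to the array centre.
    Returns an array of shape (n_angles, n_mics)."""
    angles = np.atleast_1d(angles)
    return -(radius / SPEED_OF_SOUND) * np.cos(angles[:, None] - mic_angles[None, :])


def steering_vectors(angles, mic_angles, radius, freq):
    """Narrowband phase shifts seen at each microphone for each direction."""
    return np.exp(-2j * np.pi * freq * mic_delays(angles, mic_angles, radius))


def beam_power(signals, steering):
    """Average output power when the array is steered to each direction.
    signals: (n_mics, n_samples) complex; steering: (n_angles, n_mics)."""
    beams = steering.conj() @ signals / signals.shape[0]
    return np.mean(np.abs(beams) ** 2, axis=1)


def localise(signals, mic_angles, radius, freq, grid):
    """Return the grid direction with the highest beam power."""
    steering = steering_vectors(grid, mic_angles, radius, freq)
    return grid[np.argmax(beam_power(signals, steering))]


# Hypothetical set-up: 8-microphone circular array, 2 kHz source at 60 degrees.
n_mics, radius, freq, fs = 8, 0.045, 2000.0, 16000
mic_angles = 2 * np.pi * np.arange(n_mics) / n_mics
t = np.arange(1600) / fs
true_angle = np.deg2rad(60.0)

# Each microphone receives the complex tone with its own phase shift.
source = np.exp(2j * np.pi * freq * t)
signals = steering_vectors(true_angle, mic_angles, radius, freq)[0][:, None] * source[None, :]

grid = np.deg2rad(np.arange(360))
estimate = localise(signals, mic_angles, radius, freq, grid)
print("estimated direction (degrees):", np.rad2deg(estimate))
```

Note that `steering_vectors` depends on `freq`: this is the narrowband assumption. For wideband audio, the conventional approach would repeat this computation per frequency band, which is the cost that dense band-pass filter banks introduce.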

If you're interested, you can read more details in our preprint on arXiv: https://arxiv.org/abs/2402.11748

And of course, our code is available open source: https://github.com/synsense/HaghighatshoarMuir2024

References

1. Haghighatshoar & Muir 2024. https://arxiv.org/abs/2402.11748

2. Jeffress 1948. https://doi.org/10.1037%2Fh0061495

3. Schmidt 1986. https://doi.org/10.1109/TAP.1986.1143830

4. Pan et al. 2021. https://doi.org/10.1109/TASLP.2021.3100684
