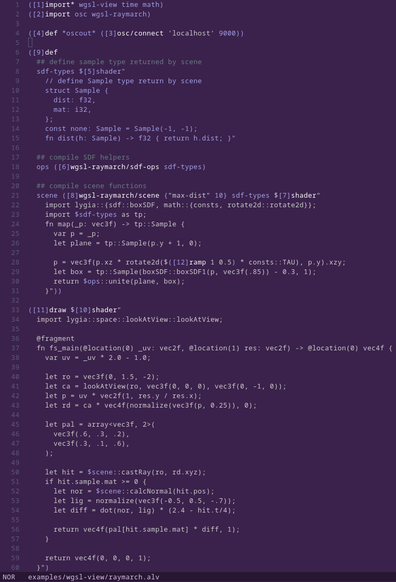

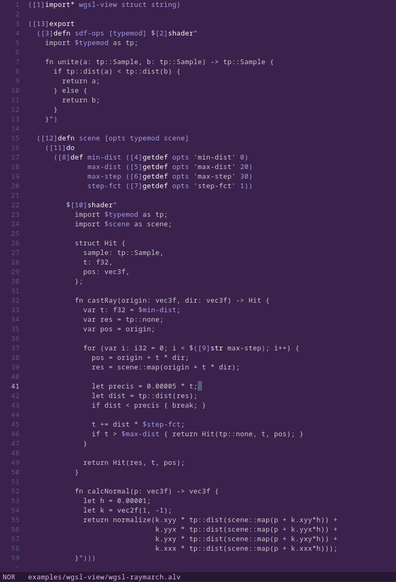

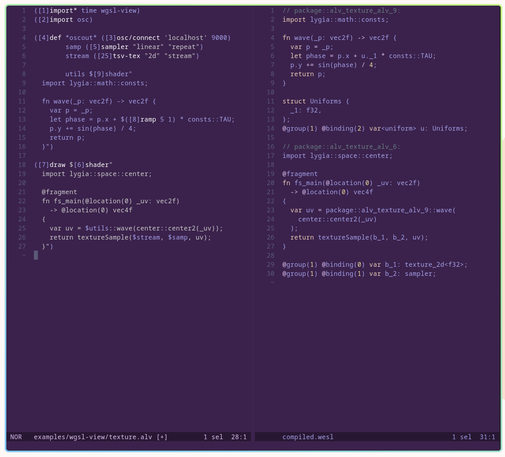

project #permathread: glsl-view, a GLSL shader host for livecoding.

EDIT: scroll down for the successor toolkit wgsl-view 😉

It's designed in tandem with #alv, but speaks generic OSC and should be easy to integrate elsewhere. The OSC interface is unopinionated and tries to expose GLSL language features as directly as possible (within reason): for example, there are no magic builtin uniforms and no additional sampler info is injected. So far, the only niche feature is loading videos into 3D textures.
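For anyone integrating from elsewhere, here is a rough sketch of what "speaks generic OSC" means on the wire: a plain OSC 1.0 message carrying float arguments, sent over UDP with nothing but the Python standard library. The address `/time`, the host, and port `9000` are made up for illustration and are not glsl-view's documented interface; check the project's own docs for the real addresses.

```python
import socket
import struct


def osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *floats: float) -> bytes:
    """Encode an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", v) for v in floats)  # big-endian float32
    return osc_pad(address.encode("ascii")) + osc_pad(type_tags.encode("ascii")) + payload


def send_floats(address: str, *values: float,
                host: str = "127.0.0.1", port: int = 9000) -> bytes:
    """Send one OSC message over UDP.

    The host, port, and address are hypothetical placeholders,
    not values taken from glsl-view.
    """
    packet = osc_message(address, *values)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet
```

Calling `send_floats("/time", 1.0)` would emit a single 16-byte datagram; a three-component value such as a colour would just be `send_floats("/color", 1.0, 0.5, 0.0)` (again, hypothetical addresses).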

past threads:

https://merveilles.town/@s_ol/111393188626738475

https://merveilles.town/@s_ol/114162299639261929

https://merveilles.town/@s_ol/114973650565149124

s-ol (@s_ol@merveilles.town)

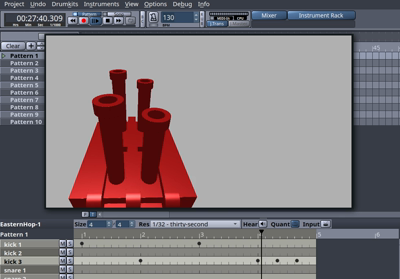

Attached: 1 video picking up alv a bit again while trying to focus on the original purpose I had in mind, mangling MIDI and OSC in real time to animate visualizations. #theWorkshop