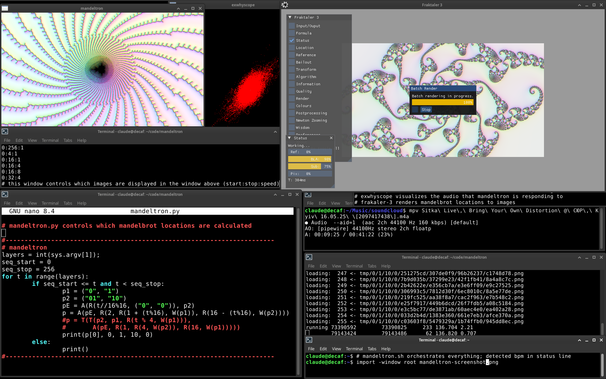

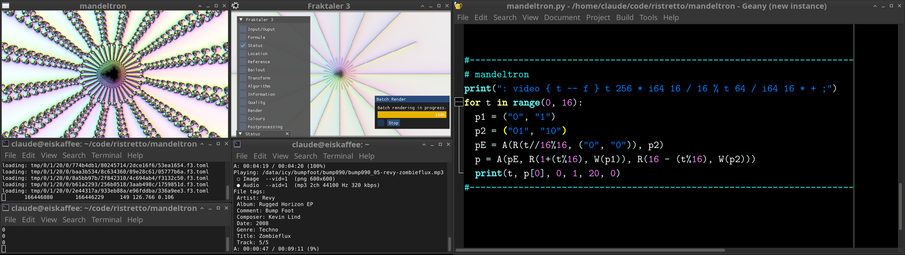

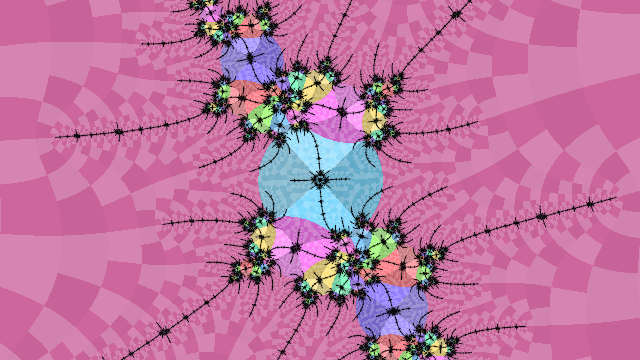

https://media.mathr.co.uk/mathr/2026-toot-media/mathr%20-%202026-05-12%20-%20mandeltron%20demo%20-%20960x600p30.mp4 960x600p30 1.6GB 45mins with sound, OBS recording of me live coding the mandelbrot set.

thoughts:

- bytebeat is not the best way to approach animation sequencing, something uzu might be more human-friendly

- the latency from thoughts to visible results is very high (minutes rather than seconds in the case of designing new fractal sequences)

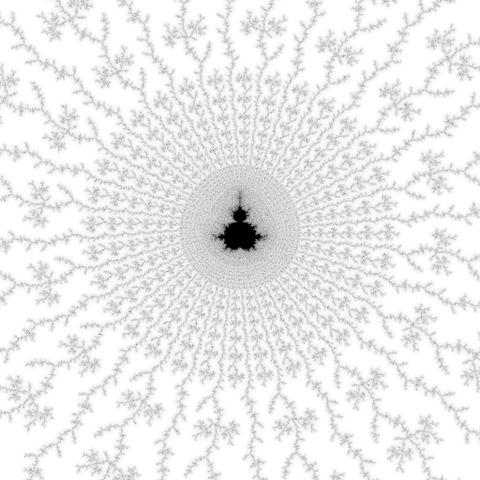

- even though I rendered the fractals at 640x360 with 4 samples/pixel, image quality seems ok

- variety is lacking, maybe i should make more colouring presets?

- want to do embedded Julia sets, but aligning them will require two addresses per location

- want to do Misiurewicz similarity loops, which will require one address but a different approach to rending animations

- want to do generalized Feigenbaum loops, might need a different rendering technique to peel off the hairs?

- all this complicated things means i might be better off interfacing the mandelbrot-numerics stuff in the language i'm using for coding the addresses (e.g. have python call out to processes instead of printing instructions for the wrapper shell script to process)

- for some reason libaubio detected tempo as 115bpm, wihch was a 3/2 factor out

- I left the mouse cursor in the middle of the screen

#Fractals #MandelbrotSet #LiveCoding