Claude mixes up who said what and that's not OK

https://dwyer.co.za/static/claude-mixes-up-who-said-what-and-thats-not-ok.html

Everything to do with LLM prompts reminds me of people using regexes to try to sanitise input against SQL injection a few decades ago: just papering over the flaw, without any guarantees.
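For anyone who missed that era, here is a rough sketch of the SQL side of the analogy, using Python's standard `sqlite3` module. The `users` table and the `naive_sanitise` helper are made up for illustration. The regex filter mangles legitimate input and gives no guarantee, while the parameterised query keeps data and code in separate channels.

```python
import re
import sqlite3


def naive_sanitise(value: str) -> str:
    # Hypothetical regex "sanitiser": strips quotes and semicolons.
    # It breaks legitimate names and offers no real guarantee.
    return re.sub(r"['\";]", "", value)


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # User input is spliced into the SQL text, so it can change the query.
    return conn.execute(
        f"SELECT id, name FROM users WHERE name = '{name}'"
    ).fetchall()


def find_user_parameterised(conn: sqlite3.Connection, name: str) -> list:
    # The value travels separately from the SQL text and is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("o'brien",)])

payload = "x' OR '1'='1"
leaked = find_user_unsafe(conn, payload)           # injection matches every row
blocked = find_user_parameterised(conn, payload)   # treated as a plain string
```

Prompts have no equivalent of the `?` placeholder, which is the point of the comparison: instructions and user text share one channel.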

It's weird seeing people just add a few more "REALLY REALLY REALLY REALLY DON'T DO THAT" to the prompt and hope. To me it's an unacceptable risk, and any system using these needs to treat the entire LLM as untrusted the second you put any user input into the prompt.
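A minimal sketch of what "treat the LLM as untrusted" could look like in practice, assuming the model is asked to reply with a small JSON object. The action names, the `query` field, and the length limit are all hypothetical. The idea is that the model's output is validated like any other hostile input before anything acts on it.

```python
import json

# Hypothetical allowlist: the only actions the surrounding system will perform.
ALLOWED_ACTIONS = {"search", "summarise"}
MAX_QUERY_LENGTH = 200  # assumed limit, chosen for illustration


def parse_untrusted_action(raw: str) -> dict:
    """Validate raw model output; raise ValueError on anything unexpected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc

    if not isinstance(data, dict):
        raise ValueError("model output is not a JSON object")

    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")

    query = data.get("query")
    if not isinstance(query, str) or len(query) > MAX_QUERY_LENGTH:
        raise ValueError("query missing, not a string, or too long")

    # Return only the validated fields; extra keys are dropped.
    return {"action": action, "query": query}
```

This does nothing to stop the model being talked into a bad but allowed action. It only caps the damage at whatever the allowlist permits, no matter what the prompt said.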

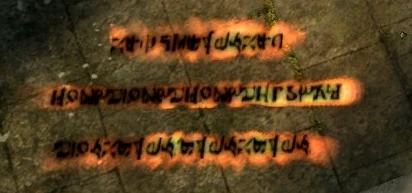

Messages are an element of gameplay in Dark Souls. They are created by using the Orange Guidance Soapstone. Messages allow players to share information with other players in other worlds. They appear on the ground as pulsating runic orange text. Standing over a message will give the option to read it. Only a few messages are viewable in an area at any given time. The number of messages viewable can be temporarily increased by using the miracle Seek Guidance. This will also reveal hidden...

But then... you'd have a programming language.

The promise is to free us from the tyranny of programming!

Maybe something more like a concordancer that provides valid or likely next phrase/prompt candidates. Think LancsBox[0].