Claude mixes up who said what and that's not OK

https://dwyer.co.za/static/claude-mixes-up-who-said-what-and-thats-not-ok.html

Everything to do with LLM prompts reminds me of people doing regexes to try and sanitise input against SQL injections a few decades ago, just papering over the flaw but without any guarantees.

It's weird seeing people just adding a few more "REALLY REALLY REALLY REALLY DON'T DO THAT" to the prompt and hoping. To me it's just an unacceptable risk, and any system using these needs to treat the entire LLM as untrusted the second you put any user input into the prompt.
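For anyone who missed the SQL side of that analogy, here is a minimal sketch of the difference using Python's standard `sqlite3` module. The table, the function names and the regex are made up for illustration. The point is only that the regex version still splices data into the code channel, while the parameterised version keeps the two apart.

```python
import re
import sqlite3


def make_db() -> sqlite3.Connection:
    # Throwaway in-memory database with two illustrative rows.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany(
        "INSERT INTO users (id, name) VALUES (?, ?)",
        [(1, "alice"), (2, "bob")],
    )
    return conn


def regex_sanitise(value: str) -> str:
    # Hypothetical "sanitiser": strips quotes, semicolons and SQL comments.
    return re.sub(r"[';]|--", "", value)


def lookup_with_regex(conn: sqlite3.Connection, raw_id: str) -> list:
    # The input is still pasted into the query text, so it can change
    # the structure of the query, not just the value being compared.
    query = f"SELECT name FROM users WHERE id = {regex_sanitise(raw_id)} ORDER BY id"
    return [row[0] for row in conn.execute(query)]


def lookup_with_params(conn: sqlite3.Connection, raw_id: str) -> list:
    # The input travels in a separate channel and is only ever treated as data.
    rows = conn.execute("SELECT name FROM users WHERE id = ? ORDER BY id", (raw_id,))
    return [row[0] for row in rows]


conn = make_db()
payload = "0 OR 1=1"  # no quotes, semicolons or comments for the regex to catch
leaked = lookup_with_regex(conn, payload)      # every row comes back
contained = lookup_with_params(conn, payload)  # nothing matches
```

As far as I know, LLM prompts have no equivalent of the `?` placeholder today: instructions and data share one token stream, which is why adding more warnings to the prompt resembles the regex approach.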

It's less about security in my view, because as you say, you'd want to ensure safety using proper sandboxing and access controls instead.

It hinders the effectiveness of the model. Or at least I'm pretty sure it getting high on its own supply is not doing it any favors.

It's both, really.

The companies selling us the service aren't saying "you should treat this LLM as a potentially hostile user on your machine and set up a new restricted account for it accordingly", they're just saying "download our app! connect it to all your stuff!" and we can't really blame ordinary users for doing that and getting into trouble.
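As a rough sketch of what "treat it as a potentially hostile user" could look like at the tool-call level, assuming a harness that routes every model-requested action through one function: the tool names and the `approve` callback below are hypothetical, not any vendor's API.

```python
from typing import Callable

# Hypothetical tool names, chosen only for illustration.
READ_ONLY_TOOLS = {"read_file", "list_dir"}
APPROVAL_TOOLS = {"write_file", "run_command"}


def gate_tool_call(tool: str, args: dict, approve: Callable[[str, dict], bool]) -> bool:
    """Decide whether a tool call requested by the model may run."""
    if tool in READ_ONLY_TOOLS:
        # Low-risk actions run without asking.
        return True
    if tool in APPROVAL_TOOLS:
        # Anything with side effects needs an explicit yes from a human.
        return bool(approve(tool, args))
    # Deny by default: unknown tools never run, whatever the model says.
    return False


def always_no(tool: str, args: dict) -> bool:
    return False


def always_yes(tool: str, args: dict) -> bool:
    return True


read_allowed = gate_tool_call("read_file", {"path": "README"}, always_no)
command_blocked = gate_tool_call("run_command", {"cmd": "rm -rf build"}, always_no)
unknown_blocked = gate_tool_call("send_email", {}, always_yes)
```

This doesn't replace OS-level sandboxing or a restricted account. It only shows the decision being made outside the model, in code the prompt can't rewrite.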

There's a growing ecosystem of guardrailing methods, and these companies are contributing. Anthropic specifically puts in a lot of effort to better steer and characterize their models AFAIK.

I primarily use Claude via VS Code, and it defaults to asking first before taking any action.

It's simply not the wild west out here that you make it out to be, nor does it need to be. These are statistical systems, so issues cannot be fully eliminated, but they can be materially mitigated. And if they stand to provide any value, they should be.

I can appreciate being upset with marketing practices, but I don't think there's value in pretending to have taken them at face value if you didn't and don't think people should.

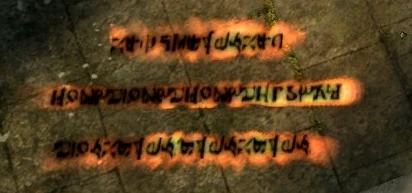

Messages are an element of gameplay in Dark Souls. They are created by using the Orange Guidance Soapstone. Messages allow players to share information with other players in other worlds. They appear on the ground as pulsating runic orange text. Standing over a message will give the option to read it. Only a few messages are viewable in an area at any given time. The number of messages viewable can be temporarily increased by using the miracle Seek Guidance. This will also reveal hidden...

But then... you'd have a programming language.

The promise is to free us from the tyranny of programming!

Maybe something more like a concordancer that provides valid or likely next phrase/prompt candidates. Think LancsBox[0].

Before 2023 I thought the way Star Trek portrayed humans fiddling with tech and not understanding any side effects was fiction.

After 2023 I realized that's exactly how it's going to turn out.

I just wish those self proclaimed AI engineers would go the extra mile and reimplement older models like RNNs, LSTMs, GRUs, DNCs and then go on to Transformers (or the Attention is all you need paper). This way they would understand much better what the limitations of the encoding tricks are, and why those side effects keep appearing.

But yeah, here we are, humans vibing with tech they don't understand.

> This class of bug seems to be in the harness, not in the model itself. It’s somehow labelling internal reasoning messages as coming from the user, which is why the model is so confident that “No, you said that.”

Are we sure about this? Accidentally mis-routing a message is one thing, but those messages also distinctly "sound" like user messages, and not something you'd read in a reasoning trace.

I'd like to know if those messages were emitted inside "thought" blocks, or if the model might actually have emitted the formatting tokens that indicate a user message. (In that case the harness bug would be that the model is allowed to emit tokens it should only ever receive as inputs, but I think the larger issue would be why it does that at all.)
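To make the second possibility concrete, here is a sketch of a harness-side check, assuming the harness sees raw model output as a string. The marker strings (`<|user|>`, `<|system|>`, `<think>`) are invented placeholders, since real models use their own special tokens, and nothing here is claimed to match how Claude's harness actually works.

```python
from dataclasses import dataclass

# Hypothetical delimiters; stand-ins for whatever special tokens a model uses.
INPUT_ONLY_MARKERS = ("<|user|>", "<|system|>")
THINK_OPEN, THINK_CLOSE = "<think>", "</think>"


@dataclass
class Message:
    role: str
    text: str


def split_model_output(raw: str) -> list:
    """Split raw model output into 'reasoning' and 'assistant' messages.

    Raises ValueError if the output contains a marker that should only
    ever appear in the model's input, or an unterminated reasoning block.
    """
    for marker in INPUT_ONLY_MARKERS:
        if marker in raw:
            raise ValueError(f"model emitted input-only marker {marker!r}")

    messages = []
    rest = raw
    while THINK_OPEN in rest:
        before, _, after = rest.partition(THINK_OPEN)
        thought, closed, rest = after.partition(THINK_CLOSE)
        if not closed:
            raise ValueError("unterminated reasoning block")
        if before.strip():
            messages.append(Message("assistant", before.strip()))
        if thought.strip():
            # Reasoning gets its own role and is never relabelled as 'user'.
            messages.append(Message("reasoning", thought.strip()))
    if rest.strip():
        messages.append(Message("assistant", rest.strip()))
    return messages


parsed = split_model_output("<think>user wants tests run</think>Running the tests now.")
```

With a check like this, an output such as `<|user|>yes, deploy it` raises an error instead of being silently appended to the transcript as a user turn. It wouldn't explain why the model produced that text in the first place.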

Yeah, that's usually called "reasoning" or "thinking" tokens AFAIK, so I think the terminology is correct. But from the traces I've seen, they're usually in a sort of diary style and start with repeating the last user requests and tool results. They're not introducing new requirements out of the blue.

Also, they're usually bracketed by special tokens to distinguish them from "normal" output for both the model and the harness.

(They can get pretty weird, like in the "user said no but I think they meant yes" example from a few weeks ago. But I think that requires a few rounds of wrong conclusions and motivated reasoning before it can get to that point - and not at the beginning)