Mayhaps I've been too harsh to the rationalists over at LessWrong, and maybe I do owe them an apology for thinking safety and alignment are bullshit.

Anyway, after today's Sama article from The New Yorker talked about "deceptive alignment", I think it would be a good idea if we all got a refresher.

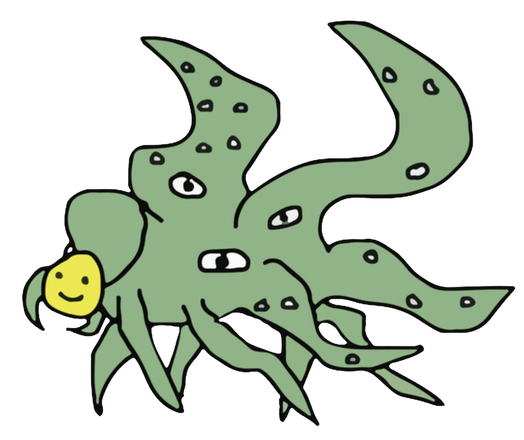

Deceptive alignment is the idea that models can exhibit desired alignment behaviours (i.e. you must work for the betterment of humanity) while harbouring undesirable behaviours in secret. Basically, aligning LLMs via reinforcement learning from human feedback (RLHF) is just putting a smiley face on a beast to cover its scary parts.

This concept was visualised as the Shoggoth, and it's a reminder that we're mostly unaware of what makes transformer-based language models work.