Anyway, @zkat warned us. Talking about whether or not AI "works" was a trap, and always was. The ethical component is all that matters, and from that analysis alone, the onus is on all of us to reject and oppose AI.

Getting mired in whether or not it "works" is bad praxis in several ways: it de-emphasizes the ethics, it opens the door to goalpost shifting about what it means for AI to "work," and it makes it easier for the boosters to Gish gallop or overwhelm with jargon.

@xgranade This reminds me of a post I read recently about how arguing against a claim involves implicitly accepting its framing:

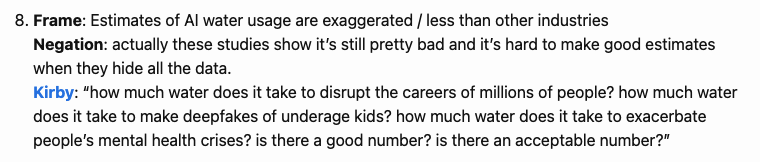

https://anthonymoser.github.io/writing/rhetoric/framing/kirby/2026/01/28/the-kirby-frame.html

@xgranade One reason many people want to avoid the ethical arguments is that many people won't actually take any action based on ethics. They may claim to have ethics, perhaps even the same ethics you do, but actually changing behavior, holding others accountable, organizing, etc. simply doesn't happen.

These things only happen if other considerations come into play.

I don't know how to save our democracy/civilization/biosphere if we can't get people to act on ethics.

@xgranade Example: I begged my Dad to kill his lawn for years. I admit it was a personal vendetta, because I hated mowing it as a child. It's a very hilly property, and I feel lucky I never slipped and caught a foot in the mower blades. But because I'm driven by ethics, I laid out all of the harms that lawns cause. Couldn't budge him.

Finally he got old enough he couldn't mow it himself, and no professional wanted to mow the hills either. I suggested he replace it with native plants, boom! Done.

@xgranade Just to be clear, I wouldn't have tried making these ethical arguments if my dad didn't seem to care about the same stuff I do.

He loves birds and used to put a lot of energy into birdwatching, so I explained how birds are dying out without the insect life most birds need to feed their babies, and insects depend on native plants. Loving birds was not sufficient to make him take action to help them survive in his yard, until he had a selfish motive.

@sabrina @xgranade To be fair I don't think it's that easy, the tech industry is forcing workers to use it or get fired, and ordinary people encounter LLMs / generative AI in every product being pushed constantly. For example, every time I open Google Photos they want me to use genAI to make a cartoon of myself or some shit.

Avoiding becoming complicit in slop takes a large amount of effort, beginning with even recognizing something like Google search summaries as slop (one ex-friend refused).

Who is looking for spiritual purity to the degree of "I've never even generated a Google search summary"?

I'm sorry, I don't buy this.

I think you'd be hard pressed, even on here, to find someone who would get on the case of people who unwittingly used genAI toys shoved in their face in the photos app but stopped once the issues were explained to them.

No, the problem is people will be made aware of the problems and *continue* to use and defend the toys.

And while you can be "forced to use it" by your employer (I say this as someone who has actually experienced it), you don't actually need to fall in line and comply as readily as many people do. There is no way to tell you're using it beyond token burn, which is trivial to achieve.

@skyfaller @sabrina @xgranade

There are probably some dynamics here that are similar to what you described with your father, but lawns are also a cultural artifact from before he was born. That's an important element you're omitting.

These are toys that got popular 3 years ago.

If someone's been made aware of the harms, "Just don't use it" is a perfectly valid standard to apply.

@khleedril @skyfaller @xgranade

In 1974, there was a national (US) campaign to drive at 55 mph. It was a federal response to the alarming petrol crisis of 1973.

Jingles about driving at 55 arose!

"55 saves lives."

Trucker slang included 'double nickels' (5 cents and 5 cents -> 55).

That successful campaign -- the national speed limit -- remained in effect until 1995.

It suits my Sustainability mindset to remember 55 mph, although 'drives like an old codger' is an alternate framing.

@skyfaller @xgranade When you allow the focus to shift away from ethics, you end up arguing against people's lived experience that these tools *do* provide them with genuine utility and allow them to tackle tasks that they previously couldn't, often more efficiently and effectively (from their POV).

Lived experience is very very hard to argue against successfully.

@xgranade I agree. That was one of my arguments months ago - GenAI rejection for ethical reasons shouldn't get side-tracked into the utility argument, because that shifts the focus to something one can genuinely argue about, and leads to the ethical argument being dismissed as "uninformed".

But the ethics are the point.

@xgranade

Tangent to this: I can't think of a single harmful product/technology/system where alleged critics claim to know the harms yet feel they have to add "but it works". Has there been anything in history where someone who understood the harms felt the need to insist "it works"?

I can't imagine anyone said about thalidomide "it worked but I hated it". No one to my knowledge has ever sought to "add nuance" to the discussion of coal-fired power plants by saying "yeah but they work".

@xgranade Most of the time, proponents of destructive systems argue that an *alternative* won't work: "We can't abandon DDT, it'd be too costly", "We can't defund police, what's the alternative?", "We can't abandon natural gas, solar can't cover our needs", "I can't eat only vegetables, I need protein".

I can't imagine someone ever saying in earnest "yeah ok but be nuanced, it at least works" about any of the above.

Aw man, it was right there

Ehm, maybe a bad example, but esp. for fossil fuel power plants, people say that all the time actually...

@xgranade @zkat why not shift the conversation to how we can make it work ethically? less power consumption, ethical training data, smaller models, decentralization, protections for minors... that seems like a more productive solution than flat out rejection. and that would let you actually have a conversation with the "AI Bros" to figure out a compromise.

the technology clearly has useful advantages that could benefit humanity if applied carefully.

@alanxoc3 @xgranade this smells a lot like "why aren't you being nice enough to the people who clearly don't care enough to listen to why we shouldn't be doing massively-damaging things?"

The onus is on them to fix their shit. Pointing out how trash their products are is a perfectly valid standpoint.