For Dewey, the answer wasn't better experts, it was better communication. A public that could share experiences, deliberate together, and hold institutions accountable wasn't a problem to be managed. It was the whole point.

This Lippmann-Dewey debate still frames democratic thinking today. Do we fix democracy by improving the expert infrastructure that informs the public? Or by improving the public's own capacity to participate? The answer, I'd argue, is both.

If you want to learn more about the Lippmann-Dewey debate (and you really should), I highly recommend the Philosophize This! episode that dives into it:

www.philosophizethis.org/podcast/dewe...

Episode #130 - Dewey and Lippm...


So back to the original question. When we talk about "loss of trust" in institutions, what is that trust actually in? If Lippmann was right, it's trust that institutions give us reliable pictures of reality. But how?

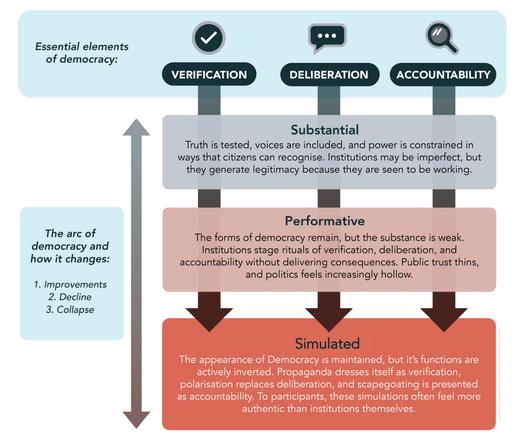

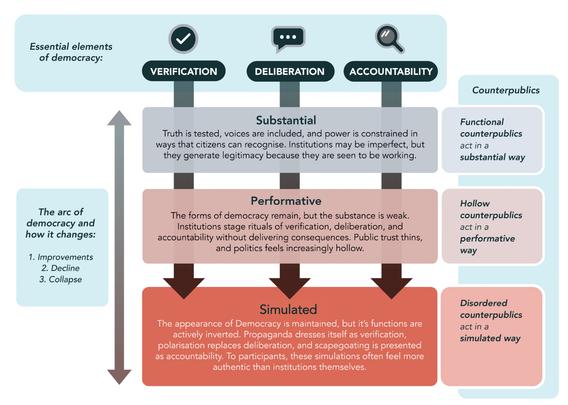

In our paper, @[email protected] and I argue that institutional trust rests on three things: Verification, Deliberation, and Accountability.

demos.co.uk/research/verification-deliberation-accountability-a-new-framework-for-tackling-epistemic-collapse-and-renewing-democracy/

Verification, Deliberation, Ac...


Verification: can we establish shared facts?

Deliberation: can we reason together about what they mean?

Accountability: can we hold power to account?

These aren't separate nice-to-haves; they're a self-reinforcing pipeline. Deliberation can't work without reliable verification. Accountability can't work without genuine deliberation. When the first tier fails, the whole thing collapses.

And when this pipeline functions, it doesn't just produce trust, it constrains power. It's the infrastructure that protects the public from the unchecked exercise of power. That's what institutional trust is really about.

Seen this way, "loss of trust" isn't vague or mysterious. It's what happens when this infrastructure degrades, and with it, the constraints on power. People aren't generally irrational for losing trust; they're reacting to a perceived breakdown in that infrastructure.

So if trust depends on this VDA infrastructure, the next question is: how does it degrade? I think of this as the Arc of Democracy, a pattern where democratic institutions move from substance, to performance, to simulation.

To be clear, I'm not arguing there was ever a golden age. Democracies have always been flawed, exclusionary, and contested. But there's a difference between imperfect institutions that genuinely function and institutions that have hollowed out while keeping their surface forms.

Substance: institutions actually do the work. Journalism investigates. Parliaments scrutinise. Courts hold power to account. It's imperfect, but the VDA pipeline functions. Power is genuinely constrained.

Performance: the forms persist but the substance thins out. Parliament still debates, but outcomes are pre-decided. Media still reports, but access journalism replaces investigation. It looks like accountability, but the constraint on power is weakening.

Simulation: the surface forms remain but they're empty. Institutions exist to legitimate power, not constrain it. Verification, deliberation, and accountability still happen in name, but they no longer function.

The danger of simulation is that it can be hard to see. Everything looks roughly the same from the outside. The institutions are still there. But the infrastructure that protected the public from power has collapsed.

So what happens when institutions slide along this arc and people notice? They don't just accept it. They form what political theorists call "counterpublics", spaces outside mainstream institutions where people try to test truth, deliberate, and hold power to account.

The idea comes from Nancy Fraser, who in 1990 described "subaltern counterpublics", parallel spaces where excluded groups develop their own voice. Her key example was the US feminist movement: journals, bookstores, networks built outside a public sphere that shut women out.

Michael Warner developed the idea further in 2002, extending it to queer counterpublics. The core point: when mainstream institutions exclude people, they don't fall silent. They build alternative spaces to test truth, form identity, and challenge power.

These were fundamentally democratic acts. Fraser and Warner showed that counterpublics can strengthen democracy by widening who gets heard and what gets scrutinised. The concept was born from progressive, emancipatory movements.

I build on Fraser and Warner by applying the VDA framework. The key insight: counterpublics aren't inherently good or bad. What matters is whether they perform verification, deliberation, and accountability substantively, or whether they hollow out or disorder those functions.

Functional counterpublics do VDA for real. Think civil rights movements, citizen-led investigations, open-source investigation networks. They don't weaken democracy, they renew it from below when institutions are failing.

Hollow counterpublics adopt the language of democracy but without the substance. Lots of debate, no resolution. Symbolic protest, no consequence. They generate visibility and energy, but the VDA pipeline isn't really working.

Disordered counterpublics simulate VDA but invert its meaning. "Verification" becomes selective sourcing. "Deliberation" becomes a loyalty test. "Accountability" means naming enemies. They look democratic on the surface but accelerate collapse.

Notice how these map onto the arc. Functional counterpublics operate with substance. Hollow ones are performative. Disordered ones are simulated. The same pattern that affects institutions plays out in the spaces people build when institutions fail.

So why are we seeing so many more disordered counterpublics now? The answer is a fundamental shift in the information environment. And it connects directly back to Lippmann.

Remember, Lippmann argued we rely on institutions to construct our pictures of reality. For most of the 20th century, that meant editors, broadcasters, publishers. They acted as gatekeepers, not just to information, but to who had a voice in public life.

That gatekeeping imposed some threshold of verification. But it also excluded people. And not just conspiracy theorists, it excluded legitimate voices too. Women, minorities, working-class communities. The counterpublics Fraser described existed precisely because of this exclusion.

And it's important to recognise that different populations experienced VDA very differently. If you were white, middle-class, and well-connected, institutions might verify your reality, include your voice, and hold power accountable on your behalf. Many others got none of that.

So the old system wasn't good, it was just stable. It maintained a shared picture of reality, but that picture reflected some people's experience far more than others. That exclusion created legitimate grievance long before social media existed.

That architecture has now been replaced. Social media platforms curate what most people see. Their logic isn't verification, it's engagement. Whatever holds attention gets amplified, regardless of truth or consequence.

This isn't a story about bad actors spreading disinformation. It's structural. Outrage and spectacle spread faster than rigour. Content that provokes emotion is amplified. Material that demands context or deliberation is buried.

This is why disordered counterpublics thrive. Functional counterpublics need time, rigour, and consequence. Platforms optimise for speed, volume, and virality. The architecture rewards the epistemic style of disorder.

The result: when people lose faith in institutions and look for alternatives, they're far more likely to encounter disordered counterpublics than functional ones. Disorder isn't an accident, it's the path of least resistance in this environment.

So the pseudo-environments Lippmann described haven't disappeared. The machinery that constructs them has changed: the old machinery aimed at accuracy, however flawed, while the new machinery is optimised for engagement. The pictures of reality people act on are now built by algorithms and communities, not editors.

So we've described how the information environment changed and why disordered counterpublics thrive in it. But there's a prior question: why do people leave institutional epistemics in the first place? Why do they stop trusting the system?

The answer starts with direct experience. When institutions fail you personally, when your doctor dismisses you, when your community is ignored, when no one is held accountable for things that damaged your life, you have rational reasons to look elsewhere.

This is VDA failure experienced at the personal level. Your reality wasn't verified. Your voice wasn't included in deliberation. No one was held accountable. The trust we described earlier, trust that institutions give you reliable pictures of reality, has been concretely betrayed.

And when that person searches for answers online, the collapsed information environment is waiting. A woman dismissed by her doctor finds alternative health communities. A worker whose community was destroyed by austerity measures finds someone offering explanations the mainstream never did.

These aren't stupid people making stupid choices. They're people whose trust was betrayed, looking for alternatives in an environment where disordered counterpublics are the path of least resistance. The grievance is often legitimate, but the destination doesn't have to be.

Michael Sandel, in The Tyranny of Merit, identified a specific and devastating form of this at scale: meritocratic humiliation. The system doesn't just fail people, it tells them their failure is deserved. If you didn't succeed, it's because you lack merit. Your resentment is just envy.

Worse, meritocratic ideology delegitimised the knowledge of those who hadn't succeeded by its criteria. To lack credentials wasn't just to be less expert, it was to be less entitled to an opinion. Democratic deliberation became an intrusion of feeling into the domain of knowledge.

This is epistemic humiliation: the arrangement of political culture so that large populations experience their democratic voice as both practically irrelevant and morally suspect. Experts know; you merely feel. Your feelings are noted, but they shouldn't govern policy.

When people withdraw from epistemic infrastructure that has humiliated them, that's not irrational. It's what rational actors do when institutions fail them. You can see this as "epistemic defection", and the direction of that defection is shaped by what's available when it happens.

This is the crucial point. Humiliation and betrayed trust explain why defection occurs. They don't determine where people land. The destination depends on the epistemic environment, and right now, that environment is structurally tilted toward disorder.

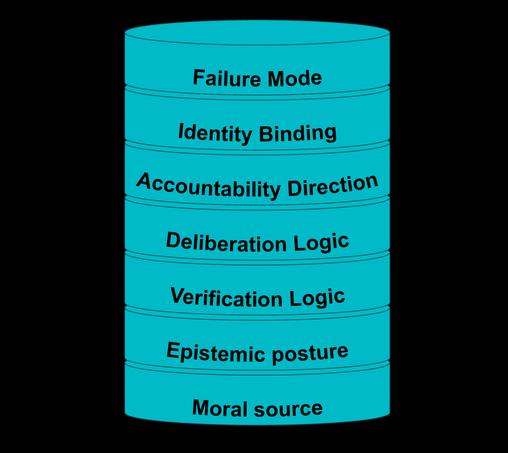

So people defect from institutional epistemics for rational reasons. But where do they land? Not randomly. They land in coherent epistemic systems: structured ways of processing evidence, handling disagreement, and imposing consequence. I call these moral-epistemic stacks.

Think of a stack as a layered architecture. Where does moral authority come from? How is certainty treated? What counts as evidence? How is disagreement handled? Where does accountability flow? How tightly is identity bound to the system?

Every epistemic system, democratic or not, can be described this way. These are generic types, not a fixed list. Real-world formations combine elements and shift over time. But the types reveal where each system's architecture breaks down.

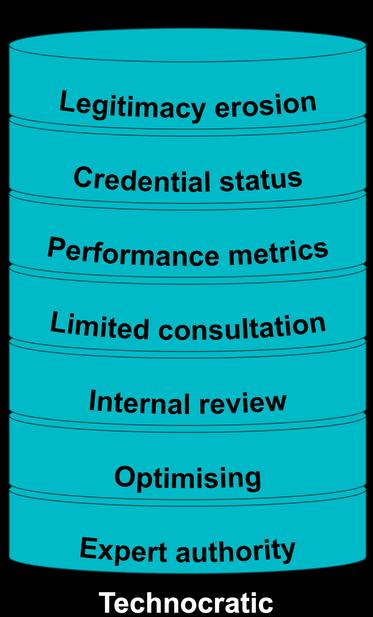

A technocratic stack: moral authority sits with expertise. Evidence is rigorous but opaque. Deliberation is minimal; disagreement is reframed as ignorance. Accountability is metric-based. It fails when its authority outpaces public consent. Sound familiar?

A populist stack: moral authority comes from "the people." Evidence is what feels true. Disagreement is betrayal. Accountability targets elites and outsiders but protects in-group leaders. It fails through authoritarian drift as constraints on leadership erode.

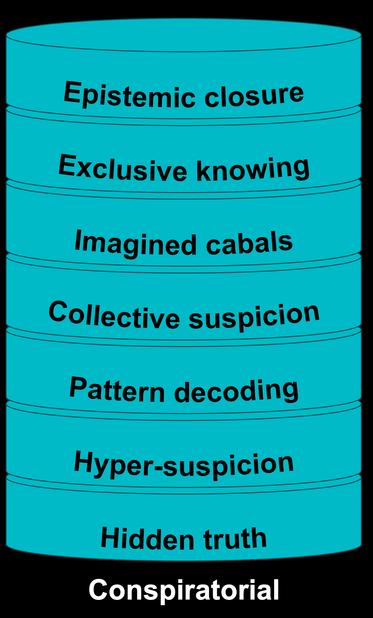

A conspiratorial stack: moral authority lies in revealing hidden truth. Doubt is applied to everything except the group's own claims. Evidence is selectively decoded. Accountability means naming enemies. It fails because it becomes self-sealing, nothing can disprove it.

Notice: the stacks model doesn't say these people are stupid. It says each system fails at a specific architectural layer. Technocratic systems fail at deliberation. Populist systems fail at verification. Conspiratorial systems fail at epistemic posture.

And here's a crucial point: everyone has an individual stack, shaped by biography, experience, education, and what institutions have done to them. We all carry layered commitments about how truth works and who deserves to be heard.

When your individual stack is compatible with a group's stack, you experience alignment. It feels like coming home. Not because you've been manipulated, but because the group's moral commitments genuinely resonate with yours.

The problem is what happens next. You're drawn in by genuine moral alignment: anti-war conviction, distrust of elites, concern about corruption. But over time, the group's verification logic and accountability direction can begin to reshape your own.

Challenging the group's conclusions starts to feel like betraying the values that drew you in. "You're saying the attack was real" becomes "you're siding with the people who lied about Iraq." A factual question becomes a loyalty test.

This is how sincere, intelligent people end up in formations that contradict their own values. Alignment is genuine at the moral source. Capture happens at the verification layer. The stacks model explains this without dismissing anyone as irrational.

Now, counterpublics. The direction a counterpublic takes depends on its epistemic architecture. Expansive counterpublics seek to widen shared reality, to bring excluded voices in. Their methodology is open to scrutiny.

Contractive counterpublics withdraw from shared reality and build enclosed epistemic spaces with their own internal validation. Questioning methods is treated as betrayal, not legitimate scrutiny.

The civil rights movement was expansive: it demanded a better shared reality, not a separate one. Conspiracy ecosystems are contractive: they reject the mainstream as captured and build closed worlds governed by internal loyalty.

Which direction a counterpublic takes isn't predetermined. It's shaped by structural conditions: how degraded the mainstream is, and whether people experience it as reformable or fundamentally hostile. As the mainstream degrades, more counterpublics turn contractive.

And in the collapsed information environment, expansive counterpublics are structurally disadvantaged. They need time, rigour, and protected space. Platforms reward the opposite: conflict, tribal identity, and speed. The environment selects for contraction.