Not sure who's going to find this useful given the model's… shall we say, "constraints"… but here's a CLI for using Apple Intelligence's on-device Foundation model from the shell:

@viticci This is actually pretty amazing.

1. `brew install Arthur-Ficial/tap/apfel`

2. `brew install --cask macai`

3. `apfel --serve`

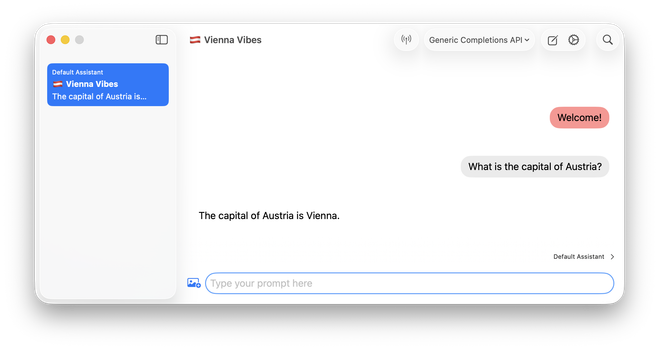

4. Run the macai app and configure it as shown in the screenshot (the URL is `http://127.0.0.1:11434/v1/chat/completions`).

Boom, you have a fully local, offline, private, and environmentally friendly chatbot app for basic tasks at zero cost.
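Since macai just talks to an OpenAI-style `/v1/chat/completions` URL, you can presumably skip the GUI and hit `apfel --serve` from any HTTP client. Here's a minimal Python sketch (stdlib only), assuming the server accepts the usual OpenAI chat-completions request and response shape. `MODEL_NAME` is a hypothetical placeholder, and `build_request`, `extract_reply`, and `ask` are illustrative helpers, not part of apfel, so check apfel's own docs for what it actually expects.

```python
import json
import urllib.request

# Endpoint from the post; assumes `apfel --serve` is running locally.
APFEL_URL = "http://127.0.0.1:11434/v1/chat/completions"

# HYPOTHETICAL model identifier: apfel may ignore it or expect a specific name.
MODEL_NAME = "apple-foundation-model"


def build_request(prompt: str, url: str = APFEL_URL) -> urllib.request.Request:
    """Build a POST request in the OpenAI chat-completions shape (assumed)."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(body: dict) -> str:
    """Pull the assistant's text out of an OpenAI-style response (assumed shape)."""
    return body["choices"][0]["message"]["content"]


def ask(prompt: str, timeout: float = 60.0) -> str:
    """Send one prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        return extract_reply(json.load(resp))
```

With the server from step 3 running, `print(ask("Give me three name ideas for a cat."))` should print the model's answer; if the server rejects the request, the `model` field is the first thing to check.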