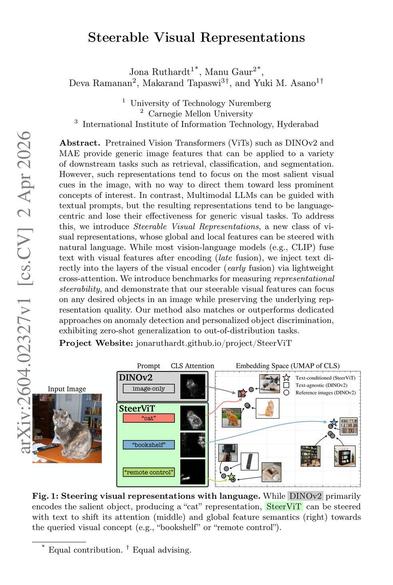

Can we steer visual representations the way we prompt LLMs? This paper injects text into vision encoders via early fusion, producing steerable features that stay strong on core vision tasks while focusing on whatever concept you ask for.

Read the full paper: http://arxiv.org/abs/2604.02327v1