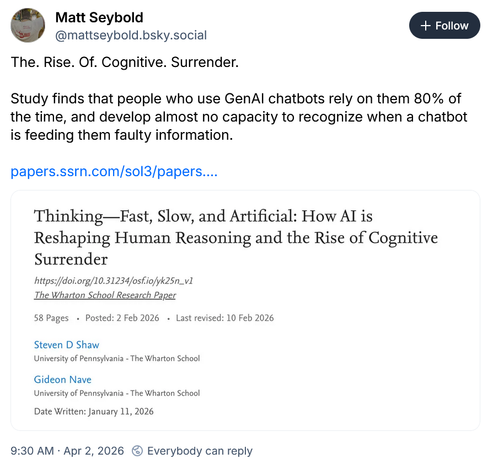

*cognitive surrender* https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6097646

Oh great, another "study" that's an unscientific survey with crap methodology, dodgy data and flawed conclusions...

...I'm only saying that because that's what 90% of the "AI bad" studies I looked at were...

It seems the worse the study is, the more boost it gets... it's almost as if folks don't read them.

I'll be back with an edit once I read it.

Edit: Yeah, my instincts were right, it's a "vibe" study riding on a catchy headline.

Critique follows.

Shaw & Nave's "cognitive surrender" paper is an unpublished preprint. No peer review. No journal. Posted on SSRN in January. Minimal (none I could find) academic citations in three months.

What it does have: a Wharton podcast, Futurism coverage, a dozen Substacks, and a term that went viral.

A paper about people uncritically adopting AI outputs goes viral because people uncritically adopted its framing.

That's the whole story.

They gave 1,372 people (a good sample) logic puzzles from the Cognitive Reflection Test, questions specifically designed so most people give the wrong answer on instinct (!). Then they embedded ChatGPT, rigged to sometimes give confident wrong answers. The wrong answers were the *same intuitive errors the test was built to trigger*.

Calling this "System 3", a fundamental revision of Kahneman's cognitive architecture, doesn't make it so. The #AI didn't override anyone's deliberation. It confirmed a bias the participants already had, on a test engineered to produce exactly that bias. That's automation bias.

We've had a name for it since 1996.

Not as sexy as "cognitive surrender" though.

👉 Trust in AI predicts following AI. Higher IQ predicts overriding bad answers. Tautologies dressed up as moderation analyses.

👉 20 cents per item + feedback nearly halved the effect. Some deep cognitive restructuring.

Money. PEOPLE WANT MONEY FOR SMARTS

👉 The headline effect size is inflated by design: AI-Faulty pushes toward the answer people were already going to give (super dodgy).

👉 No human-advisor control. Can't distinguish "people defer to AI" from "people defer to any confident source." The entire System 3 framing hangs on a comparison they didn't make.
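To put rough numbers on the "inflated by design" point, here's a back-of-envelope sketch. Everything in it is my own toy model, not the paper's: `baseline_intuitive` (share of people who'd give the intuitive wrong answer with no AI at all) and `true_follow` (share of the rest who actually switch to the AI's answer) are hypothetical parameters, and the values plugged in are made up for illustration.

```python
def observed_agreement(baseline_intuitive: float, true_follow: float) -> float:
    """Share of answers that match the faulty AI in this toy model.

    Assumption (hypothetical, not from the paper): people who would have
    given the intuitive wrong answer anyway match the AI automatically;
    of everyone else, a fraction `true_follow` switches to the AI's answer.
    """
    return baseline_intuitive + (1.0 - baseline_intuitive) * true_follow


def inflation(baseline_intuitive: float, true_follow: float) -> float:
    """How much observed agreement overstates actual AI-following."""
    return observed_agreement(baseline_intuitive, true_follow) - true_follow


if __name__ == "__main__":
    # Made-up illustrative values, not data from the study.
    p, f = 0.5, 0.2
    print(f"observed agreement: {observed_agreement(p, f):.2f}")
    print(f"overstatement:      {inflation(p, f):.2f}")
```

Under these assumptions, agreement with the faulty AI is mostly people who were going to get it wrong anyway, which is why a comparison against the no-AI baseline matters more than the raw agreement rate.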

The finding, people follow confident bad AI advice, is real. But that's automation bias lit, not a new cognitive architecture.

Computer says NO!

"Cognitive surrender" is a marketing term.

"System 3" is a brand extension.

Enormous vibes-to-citation ratio.

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6097646

TL;DR: People boost this uncited preprint because of a catchy title that retreads a 29-year-old "discovery" that folks trust machines.

I want to use AI that uses jargon like that!

I did spend 20 minutes composing the post, so I guess that's a compliment.

Hardly anyone checks the papers these days, they mash the boost button without reading them...

...I really should "write" an 'AI eats babies' paper, and see how far it can get before someone actually reads it. 😬

Thanks for the idea 😁