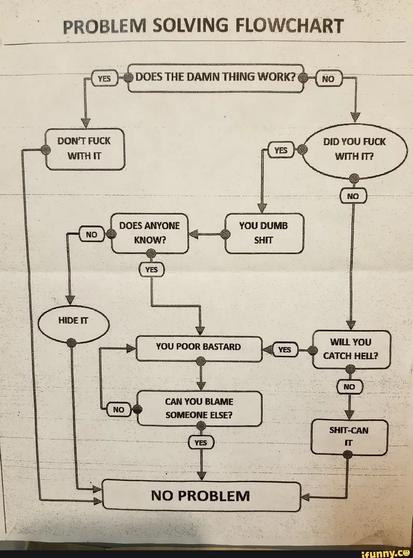

Look, this isn’t at all a defense of slop code, but it has me thinking: how much does code quality matter, and why?

It’s maintenance, right? We care about readability because we know we’ll have to make changes, fix bugs, etc.

But so … imagine a codebase that’s magically bug-free and feature-complete. (I’m aware this is a strawman - that’s the point, it’s a thought experiment.) Does it matter if this codebase is well-written? I’m not sure it does! (1/5)