@mjg59 a) have target services accept some kind of fine-grained capabilities for authz (fat chance...),

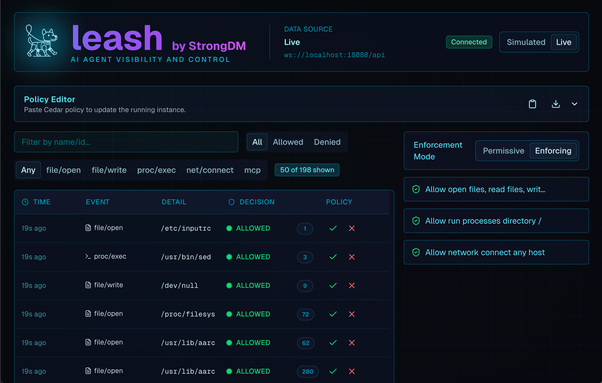

b) proxy everything and apply your policies at the level of individual RPCs (but some RPCs won't contain enough information to authz them on their own, so your proxy will need to either keep state or make its own requests to gather that information),

c) let the agent run noninteractive shell commands as you (with all the permissions you have) and have a policy on what it can run (this is less bad than doing the same with human-sourced commands, because you can allow less variety and let the agent figure it out; it's still very easy to mess up),

b') give the agent access to a small RPC service that you throw together, which takes requests high-level enough that you can apply policy to them and then translates them into the actual lower-level ones.
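To make (b') a bit more concrete, here is a minimal sketch of the shape such a service might take. Everything in it is hypothetical: the `Request` type, the `restart` and `read_logs` actions, the `staging/*` target patterns, and the low-level call names like `StopInstance` are placeholders for illustration, not any real API. The point is only that policy is checked on the high-level request, and the translation into low-level calls happens afterwards.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Request:
    action: str  # high-level verb, e.g. "restart" (hypothetical)
    target: str  # what it applies to, e.g. "staging/web-1" (hypothetical)


# Hypothetical policy: which target patterns each high-level action may touch.
POLICY = {
    "restart": ["staging/*"],
    "read_logs": ["staging/*", "prod/*"],
}


def is_allowed(req: Request) -> bool:
    """Policy is decided on the high-level request alone; unknown actions are denied."""
    patterns = POLICY.get(req.action, [])
    return any(fnmatchcase(req.target, p) for p in patterns)


def translate(req: Request) -> list[tuple[str, str]]:
    """Expand one high-level request into the low-level calls a backend would receive.

    The call names here are made up; a real service would issue actual RPCs.
    """
    if req.action == "restart":
        return [("StopInstance", req.target), ("StartInstance", req.target)]
    if req.action == "read_logs":
        return [("GetLogStream", req.target)]
    raise ValueError(f"no translation for action {req.action!r}")


def handle(req: Request) -> list[tuple[str, str]]:
    """Check policy first, then translate; the agent never sees the low-level API."""
    if not is_allowed(req):
        raise PermissionError(f"{req.action} on {req.target} is not allowed")
    return translate(req)
```

With this layout the agent can only ask for things like `handle(Request("restart", "staging/web-1"))`, and a request such as restarting `prod/db-1` is refused before any low-level call is built.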

None of these are particularly good (except for (a), which is unlikely to ever work). I'd personally do (b) if it can work with a stateless proxy, and otherwise would likely do (b') or (c), depending on how much of a hurry I was in.
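For what it's worth, a rough sketch of what the policy in (c) could look like, assuming an allowlist keyed on program and first argument. The `ALLOWED` table and the helper names are made up for illustration. The command is parsed with `shlex.split` and run as an argv list without a shell, so `;`, `&&`, and friends never get interpreted.

```python
import shlex
import subprocess

# Hypothetical allowlist: program -> permitted first argument.
# None means any arguments are accepted for that program.
ALLOWED = {
    "git": {"status", "log", "diff"},
    "ls": None,
}


def check_command(line: str) -> list[str]:
    """Return the parsed argv if the command passes the policy, else raise PermissionError."""
    try:
        argv = shlex.split(line)
    except ValueError as exc:  # e.g. unbalanced quotes
        raise PermissionError(f"unparseable command: {exc}") from exc
    if not argv:
        raise PermissionError("empty command")
    prog, args = argv[0], argv[1:]
    if prog not in ALLOWED:
        raise PermissionError(f"program {prog!r} is not allowed")
    subcommands = ALLOWED[prog]
    if subcommands is not None and (not args or args[0] not in subcommands):
        raise PermissionError(f"{prog} subcommand is not allowed")
    return argv


def run_checked(line: str) -> subprocess.CompletedProcess:
    """Run an approved command as an argv list, never through a shell."""
    argv = check_command(line)
    return subprocess.run(argv, capture_output=True, text=True, check=False)
```

Note that this only looks at the program name and the first argument. Flags passed to an allowed subcommand are not inspected at all, which is exactly the kind of gap that makes this approach easy to mess up.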