Guardrails are a scam!

“We have also continued to strengthen ChatGPT’s responses in sensitive moments, working closely with mental health clinicians.”

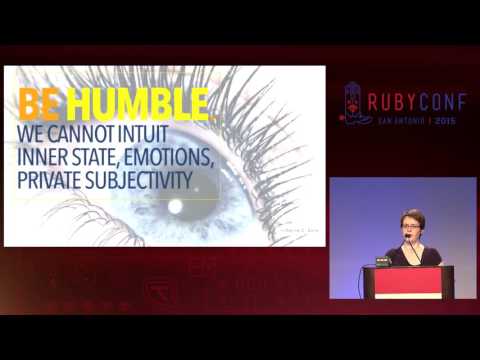

It's impossible, cos that would take full-blown cognition: either the lie is the automation (indentured labour is used instead) or they're fully fibbing.

1/