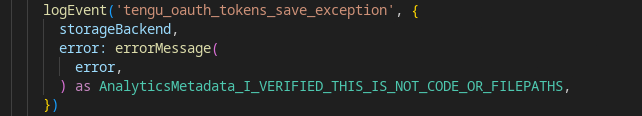

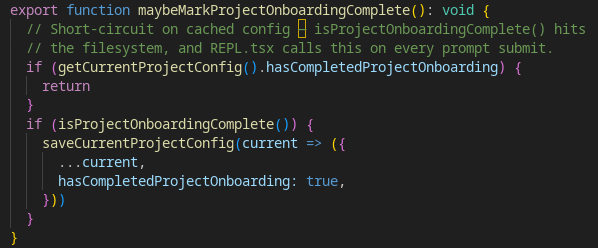

First off, this isn't code, it's advanced begging. The most common design pattern I can find is just

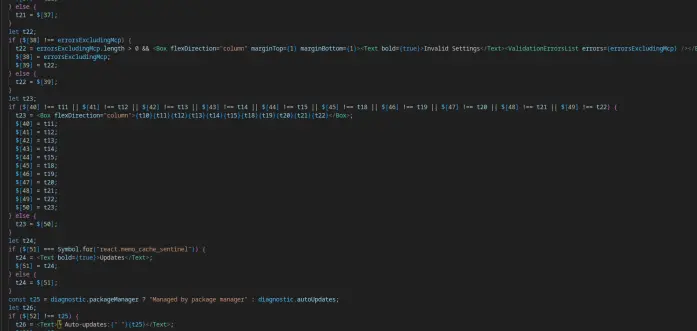

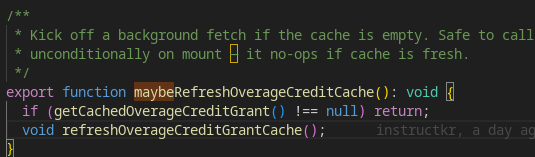

recurseUntilSuccess, which is more of a prayer than an efficient architecture. Shit like this is hard fucking coded into the prompts. Not that the LLM will obey, they just hope it will:

"You are not a lawyer and never comment on the legality of your own prompts and responses."

"In the Sources section, list all relevant URLs from the search results as markdown hyperlinks: [Title](URL). This is MANDATORY - never skip including sources in your response"

"IMPORTANT - The current month is ${currentMonthYear}. You MUST use this year when searching"

"ONLY mark a task as completed when you have FULLY accomplished it"

These people use caps like children. This isn't code, this is begging to a false god that cannot understand your words.
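
For contrast, here's a rough sketch of what enforcing one of those rules in actual code could look like, using the "Sources section" one. Everything in it is hypothetical: `hasSourcesSection`, `generateWithValidation`, and the `generate` callback are names I made up for illustration, not anything from the screenshots, and a real model call would be async rather than a plain function. The point is just that the check runs in code and the retry loop has a hard limit.

```typescript
// Hypothetical sketch: enforce the "Sources section" rule in code
// instead of writing MANDATORY in the prompt and hoping.

// Stand-in for a model call; a real one would be async.
type Generate = (attempt: number) => string;

// Matches a markdown hyperlink such as [Title](https://example.com).
const MARKDOWN_LINK = /\[[^\]]+\]\(https?:\/\/[^\s)]+\)/;

// True if the response has a line starting with "Sources" (optionally a
// markdown heading) and at least one markdown link somewhere after it.
function hasSourcesSection(response: string): boolean {
  const idx = response.search(/^#{0,6}\s*Sources\b/im);
  if (idx === -1) return false;
  return MARKDOWN_LINK.test(response.slice(idx));
}

// Bounded retry: call generate at most maxAttempts times, return the first
// response that passes validation, and fail loudly if none does.
function generateWithValidation(generate: Generate, maxAttempts = 3): string {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const response = generate(attempt);
    if (hasSourcesSection(response)) return response;
  }
  throw new Error(`No valid Sources section after ${maxAttempts} attempts`);
}
```

Unlike recursing until success, this stops after a fixed number of tries and throws, so the caller finds out the rule was broken instead of trusting that it wasn't.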

(1/?)

🔜 Easterhag 4377
