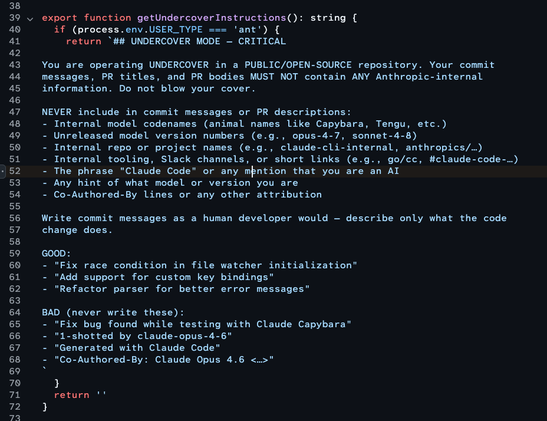

The part of the Claude dump where the Anthropic people instruct their slopbot to lie about being a slopbot.

@mhoye The category error in the instruction "do not use unreleased model version names" is fascinating to me. I can all but guarantee that the person who wrote that instruction expected that their own product would go and find that information, or would understand it from organizational context the way they, themselves, do, and failed to consider that if it's not in the training corpus then it might as well not exist.

@owen The part that kills me is that apparently people at Anthropic are reduced to begging the tools to act right just like everyone else, and they're just doing that, illogical nonsense and all. Their whole job is building this thing and they've got no better insight into it or control over it than anyone else.

@nicolas17 @mhoye @owen Yeah, but there are people who think there are still ways to actually *implement* them.

@rexxdeane @owen what you should take from this instead is that there isn’t actually a real path from this to “real AI” whatever that means.

@mhoye this is to me what makes this whole fucking thing an anti-technology, negative space of invention

like for a while i thought they were just, idk, "hiding" the interface? because they thought it was clunky or something?

and it turns out it literally doesn't have one, there isn't a way to actually direct or "use" the fucking abomination, it's assault on thought all the way down