@johncarlosbaez @dougmerritt @MartinEscardo @JacquesC2 @pigworker Somewhat unexpectedly, I find myself on the same side as @xenaproject on this one, I suppose because I read "the right way" differently from @johncarlosbaez

Formalized mathematics makes us think "the right way" in the sense that it requires mental hygiene, it encourages better organization, it invites abstraction, and it demands honesty.

Formalized mathematics does not at all impose "One and Only Truth", nor does it "nail things down with rigidity" or "impose concensus". Those are impressions that an outsider might get by observing how, for the first time, some mathematicians have banded together to produce the largest library of formalized mathematics in history. But let's be honest, it's miniscule.

Even within a single proof assistant, there is a great deal of freedom of exploration of foundations, and there are many different ways to formalize any given topic. Not to mention that having several proof assistants, each peddling its own foundation, has only contributed to plurality of mathematical thought.

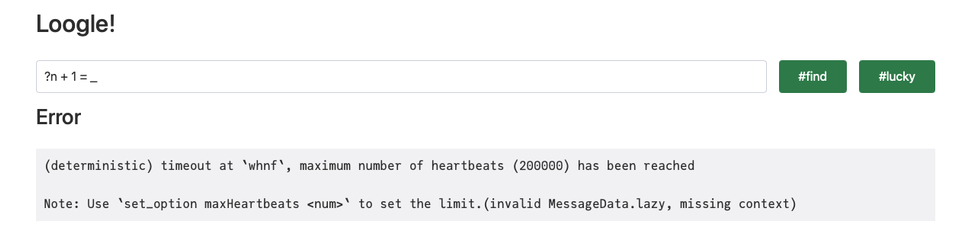

Current tools are relatively immature and do indeed steal time from creative thought to some degree, although people who are proficient in their use regularly explore mathematics with proof assistants (for example @MartinEscardo and myself), testifying to their creative potential.

Finally, any fear that Mathlib and Lean will dominate mathematical thought, or even just formalized mathematics, is a hollow one. Mathlib will soon be left in the dust of history, but it will always be remembered as the project that brought formalized mathematics from the fringes of computer science to the mainstream of mathematics.