@jafo @loucyx @GossiTheDog but your mileage should not vary. that's the point.

getting a different answer each time is what makes these tools not fit for purpose. if they return the right answer some of the time but you never know which times, what's the point in them?

@benjamineskola @jafo @GossiTheDog 100% this! If they were always right or always wrong it would be one thing, but the only constant is that they are always confident about their answer (either if it’s right or wrong) which is what makes them dangerously unreliable.

And this isn’t even getting into the whole detrimental effect they have on cognitive analysis and reasoning for LLM consumers.

@jafo Sorry, but this is nonsensical on multiple levels.

These aren’t ‘different classes of tools’. It’s not comparing a hand saw with a power saw; it’s comparing two different models of power saw. Perhaps they are different quality but they’re not fundamentally different.

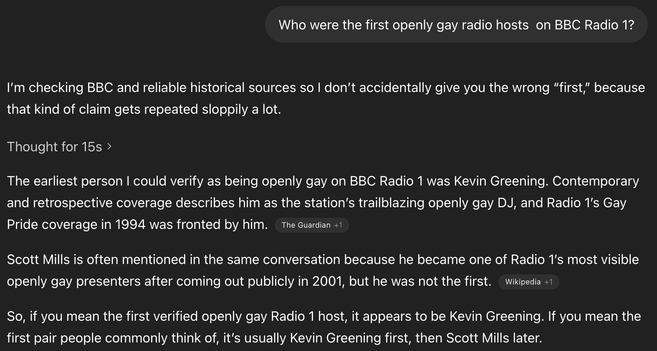

And you will get different results each time you ask *even with the same model*. You can’t ever guarantee that any one model will give the same results. You tried with a different model and happened to get a better result than the OP; but someone else might try again with the same model as you and get a wrong result again. The criticism was not just ‘this is wrong’ and fixable by using a ‘better’ model to get the right answer; the criticism is that this entire class of tool cannot be relied upon to produce a correct answer.

It will sometimes give a right output — but sometimes it won’t, and you can’t predict when that will be. And that’s in the very nature of the tool.

@benjamineskola Ignoring much of what you say, because it's an "agree to disagree" situation. But if you're aware that different models produce drastically different results, what is the use in posting something from a model that is known to give worse results? I'm assuming that's what happened to start this thread.

There are plenty of problems with the AI tools (by that I mean models as well as agents). It's more beneficial to discuss those things than to concoct failures.