I was just in a meeting where someone used a thing called Fathom to get an 'AI' summary of the meeting. Aside from some understandable errors, where it replaced terms of art it did not understand with common English words, one of its key conclusions was that A was faster than B. It reached this conclusion because it missed one of the digits in the time for A. This completely inverted the key takeaway from one important section of the meeting.

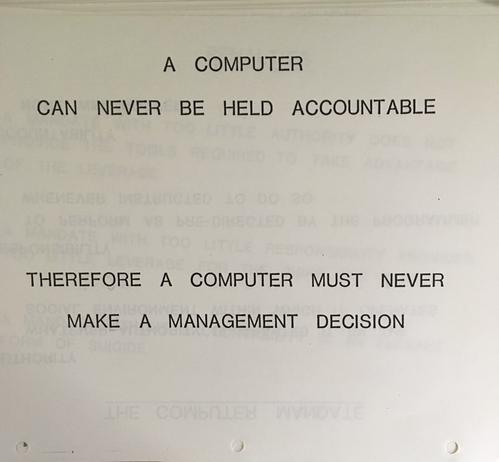

Do not use plausible-nonsense generators for anything important.