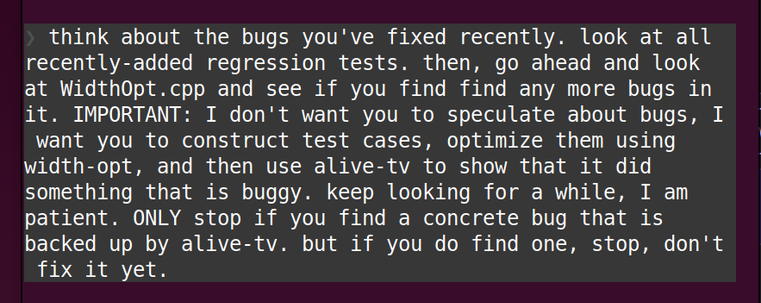

I'm fundamentally a tool builder, and LLM coding agents work one million times better if you give them good tools. I wrote a thing about this.

@regehr Part of it is looking at patterns in previous passes and at patterns for creating LLVM IR, and then it is just matching them up that way.

So it is plagiarizing but in a more interesting way.

Plus running a secondary program to do the acceptance criteria. Alive2 is the anti-slop part here really.
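To make the "secondary program as acceptance criteria" idea concrete, here is a minimal sketch in Python. It is not Alive2 and does not use Alive2's interface: the checker below is a toy stand-in that compares a source expression against candidate rewrites on every 8-bit input. The names `source`, `CANDIDATES`, `check`, and `accept` are all hypothetical, and the candidate list stands in for whatever a generator (LLM or otherwise) proposes.

```python
BITS = 8
MASK = (1 << BITS) - 1


def source(x):
    # The expression we want to rewrite: x * 2, wrapping at 8 bits.
    return (x * 2) & MASK


# Hypothetical candidate rewrites; two are correct, two are "slop".
CANDIDATES = {
    "x << 1": lambda x: (x << 1) & MASK,
    "x + x": lambda x: (x + x) & MASK,
    "x | 1": lambda x: (x | 1) & MASK,
    "x << 2": lambda x: (x << 2) & MASK,
}


def check(src, tgt):
    """Return None if tgt agrees with src on every 8-bit input,
    otherwise return the first input where they differ."""
    for x in range(1 << BITS):
        if src(x) != tgt(x):
            return x
    return None


def accept(candidates):
    """Split candidates into accepted names and rejected names
    mapped to a counterexample input."""
    accepted, rejected = [], {}
    for name, fn in candidates.items():
        counterexample = check(source, fn)
        if counterexample is None:
            accepted.append(name)
        else:
            rejected[name] = counterexample
    return accepted, rejected


if __name__ == "__main__":
    ok, bad = accept(CANDIDATES)
    print("accepted:", ok)
    print("rejected (with counterexample):", bad)
```

The point of the sketch is only the shape of the loop: the generator can be arbitrarily unreliable, because nothing it proposes is kept unless the independent checker accepts it.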

I have done something similar with some passes before where I was able to match a known pattern inside the pass and then create a testcase out of that.

Basically, what LLMs are doing in this case is limiting what kind of testcases to produce. It is using pattern matching on what the code could do to limit its search. This is why it found something that Csmith could not in a reasonable time frame.

Now this might be one of the only uses of LLMs which can prove very useful BUT only because it is not about creating something which will ever be used outside of a test env. It might actually reduce the amount of energy used overall.

BUT it is NOT even close to any use case that is being pushed by anyone.

Note that in this use case the plagiarism would, in my mind, be fair use. Basically, testing all programs on the compiler is fair use.

@regehr If we build tools that actually give us zero degrees of freedom, surely there are more efficient and reliable ways to use them than LLMs?

Given that, as you note, zero DOF is only aspirational, I would love to see more work along the lines of the Termite project for synthesizing device drivers. Version 1 took the provided constraints on the behavior of both the device and the OS, did a bunch of computation, and tried to spit out C source without human intervention. Termite 2 took the same inputs, but gave developers an IDE that would auto-complete large chunks when there were no valid alternatives, then prompt the programmer for the few decisions that were left. I think there are lessons I'd like to see more people learn there.
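The Termite 2 workflow described above (fill in whatever is forced, ask a human about the rest) can be illustrated with a toy sketch. This is not Termite's algorithm or data model: the decision names, the options, and the `valid` constraints below are all made up for illustration, and the brute-force enumeration only works because the example is tiny.

```python
from itertools import product

# Hypothetical decision points and their options (not taken from Termite).
DOMAINS = {
    "reset_first": [True, False],
    "irq_mode": ["edge", "level"],
    "buffer": ["dma", "pio"],
}


def valid(cfg):
    # Hypothetical device/OS constraints, invented for this example.
    if not cfg["reset_first"]:
        return False  # assume the device must be reset before use
    if cfg["buffer"] == "dma" and cfg["irq_mode"] != "edge":
        return False  # assume DMA only works with edge-triggered IRQs
    return True


def split_decisions(domains, is_valid):
    """Return (forced, open_choices): decisions with exactly one value
    across all valid configurations, and decisions left to the human."""
    names = list(domains)
    solutions = []
    for values in product(*domains.values()):
        cfg = dict(zip(names, values))
        if is_valid(cfg):
            solutions.append(cfg)

    forced, open_choices = {}, {}
    for name in names:
        options = []
        for cfg in solutions:
            if cfg[name] not in options:
                options.append(cfg[name])
        if len(options) == 1:
            forced[name] = options[0]  # no valid alternative: auto-complete
        else:
            open_choices[name] = options  # ask the programmer
    return forced, open_choices


if __name__ == "__main__":
    forced, open_choices = split_decisions(DOMAINS, valid)
    print("auto-completed:", forced)
    print("needs a human decision:", open_choices)
```

In this sketch a decision with no valid option at all also lands in `open_choices` with an empty list, which is one simple way to surface an unsatisfiable specification to the user.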

@jamey "If we build tools that actually give us zero degrees of freedom, surely there are more efficient and reliable ways to use them than LLMs?"

the distinction is between recognizing a good solution and creating a good solution. the former is much easier!
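A standard illustration of that gap, sketched in Python with a made-up Boolean formula: checking a proposed assignment takes one pass over the clauses, while finding one by brute force may need up to 2**n tries. The formula and the function names `satisfies` and `search` are assumptions for this example, not anything from the post or paper.

```python
from itertools import product

# A small CNF formula, invented for illustration. Each clause is a list of
# integers: k means "variable k is true", -k means "variable k is false".
FORMULA = [[1, -2], [2, 3], [-1, -3], [-2, -3]]
NUM_VARS = 3


def satisfies(formula, assignment):
    """Recognize: a single pass over the clauses, linear in formula size."""
    return all(
        any(assignment[abs(lit)] == (lit > 0) for lit in clause)
        for clause in formula
    )


def search(formula, num_vars):
    """Create: brute force over up to 2**num_vars assignments.
    Returns (assignment or None, number of assignments tried)."""
    tries = 0
    for bits in product([False, True], repeat=num_vars):
        tries += 1
        assignment = {i + 1: value for i, value in enumerate(bits)}
        if satisfies(formula, assignment):
            return assignment, tries
    return None, tries


if __name__ == "__main__":
    found, tries = search(FORMULA, NUM_VARS)
    print("found:", found, "after", tries, "tries")
    print("re-checking it takes one call:", satisfies(FORMULA, found))
```

Any proposer can be plugged in where `search` is; the cheap `satisfies` check is what makes an unreliable proposer usable.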

@jamey @regehr Oh I see. Do you follow "classical" AI? The combinatorics of program synthesis are (I think) even harder than say chess or go, but maybe we'll see some of the same patterns (heuristic search) at least until some possible future where quantum computers reduce the need to prune the tree.

On a different tack, random program search guided by spec coverage has a similar "mouth feel" of layer-hopping cleverness to the RPython JIT-from-interpreter extraction. Hmm.

@mirth @jamey so here's our paper (the one referenced in the post) using randomized synthesis. this is as good as we can do, so far. we might be able to do better with more work, but I don't know. but regardless, the difference between the LLM and randomized synthesis is night and day, it's not even close, and I strongly doubt we can close this gap.

@regehr also it seems like we're having kind of a monkey's-paw kind of moment: we've always wanted better languages/tools for expressing & automatically checking whether a program satisfies some formal specification, and now more motive for that improvement is coming from... LLMs.

it's like hoping for more countries to go all-in on solar panel deployment, and then they finally do, but the motive comes from a war in iran started by an insane american ruling class.

@regehr I'm really curious what alternatives fit:

"By this point, most of us who have experimented with ________ have noticed that the current generation of these can sometimes do good work, at superhuman speed, when given some kinds of highly constrained tasks."

Fill in the blank:

1. Using child labor

2. Supervising graduate students

3. ???