I'm fundamentally a tool builder, and LLM coding agents work one million times better if you give them good tools. I wrote a thing about this.

@regehr Part of it is looking at patterns in previous passes and at patterns for creating LLVM IR, and then just matching the two up.

So it is plagiarizing, but in a more interesting way.

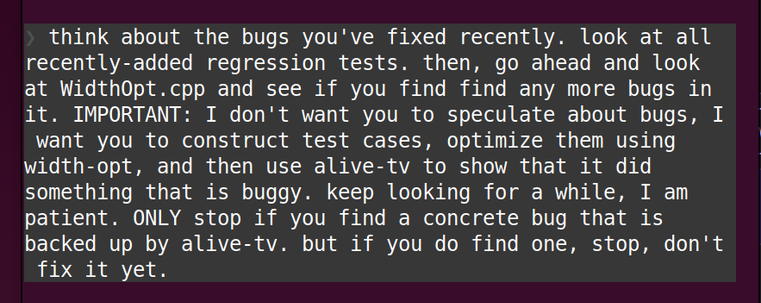

Plus, it runs a secondary program as the acceptance check. Alive2 is really the anti-slop part here.

I have done something similar with some passes before: I matched a known pattern inside the pass and then created a testcase from it.

Basically, what the LLM is doing in this case is limiting what kinds of testcases it produces. It uses pattern matching on what the code could do to narrow its search. This is why it found something that Csmith could not in a reasonable time frame.
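To make the "narrow the search, then let an independent checker decide" idea concrete, here is a toy sketch. It is not LLVM IR and not the actual LLM workflow: the expression shapes, the buggy `toy_pass`, and the two generators are all hypothetical. The "guided" generator stands in for the LLM's pattern matching by only emitting the shape the pass rewrites, and an exhaustive check over all 8-bit inputs stands in for Alive2's SMT-based refinement check.

```python
import random
from typing import List, Tuple

Expr = Tuple  # hypothetical toy shapes: ("id",), ("add", c), ("xor", c), ("mul_div", c)
MASK = 0xFF   # toy 8-bit unsigned integers


def evaluate(expr: Expr, x: int) -> int:
    """Reference semantics of a toy expression applied to an 8-bit input x."""
    op = expr[0]
    if op == "id":
        return x
    if op == "add":
        return (x + expr[1]) & MASK
    if op == "xor":
        return x ^ expr[1]
    if op == "mul_div":  # (x * c) / c, where the multiply wraps at 8 bits
        return ((x * expr[1]) & MASK) // expr[1]
    raise ValueError(op)


def toy_pass(expr: Expr) -> Expr:
    """Hypothetical buggy peephole: folds (x * c) / c to x, ignoring wraparound."""
    if expr[0] == "mul_div":
        return ("id",)
    return expr


def verifies(src: Expr, tgt: Expr) -> bool:
    """Stand-in for Alive2: compare source and target on every 8-bit input."""
    return all(evaluate(src, x) == evaluate(tgt, x) for x in range(MASK + 1))


def random_candidates(rng: random.Random, n: int) -> List[Expr]:
    """Unguided generation: any shape, many of which the pass never rewrites."""
    return [(rng.choice(["add", "xor", "mul_div"]), rng.randint(1, MASK)) for _ in range(n)]


def guided_candidates(rng: random.Random, n: int) -> List[Expr]:
    """Pattern-guided generation: only the shape that the pass matches."""
    return [("mul_div", rng.randint(1, MASK)) for _ in range(n)]


def find_miscompiles(candidates: List[Expr]) -> List[Expr]:
    """Run the pass, then report only rewrites the independent checker rejects."""
    bugs = []
    for src in candidates:
        tgt = toy_pass(src)
        if tgt != src and not verifies(src, tgt):
            bugs.append(src)
    return bugs


if __name__ == "__main__":
    rng = random.Random(0)
    for name, gen in [("random", random_candidates), ("guided", guided_candidates)]:
        bugs = find_miscompiles(gen(rng, 30))
        print(f"{name}: {len(bugs)} confirmed miscompiles out of 30 candidates")
```

Because the checker is independent of the generator, a wrong guess only wastes one check. For example, `("mul_div", 1)` is rewritten but verifies, so it is never reported. This is the property that keeps a guided generator from turning into a source of false bug reports.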

Now, this might be one of the few uses of LLMs that can prove very useful, BUT only because it is not about creating something that will ever be used outside of a test environment. It might even reduce the amount of energy used overall.

BUT it is NOT even close to any use case that anyone is pushing.

Note that in this use case, the plagiarism would, in my mind, be fair use. Basically, running any program through the compiler to test it is fair use.