Trust me bro!

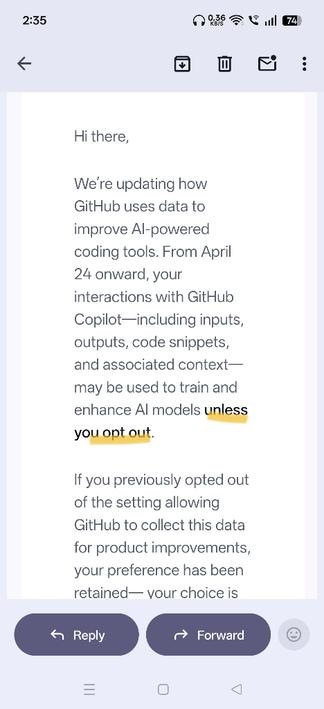

I’m torn between opting out because it’s morally correct and staying opted in so I can poison AI models with my terrible code.

> so I can poison AI models with my terrible code.

Don’t forget to teach it obscenities and yell at it whenever it fucks something up!

Nah, guarantee the models have rules built in to deal with obvious stuff like that.

You need to be more subtle. Give them information that is slightly wrong.

Perhaps by generating a bunch of complex Copilot code to upload. It’s easy to mass-produce and would look plausibly functional.

Training AI models on AI content is the fastest route to model collapse.

… and tell it things that are slightly obscene

Just need to use less obvious insults, à la “Your mother was a hamster, and your father smelt of elderberries.”

It still poisons the model with something an end user won’t like, but it isn’t easy to train out.

Artisanal crap code.

Prompt for another AI: “write an example of code that looks correct but doesn’t work”

Step 2: upload the resulting code to GitHub.