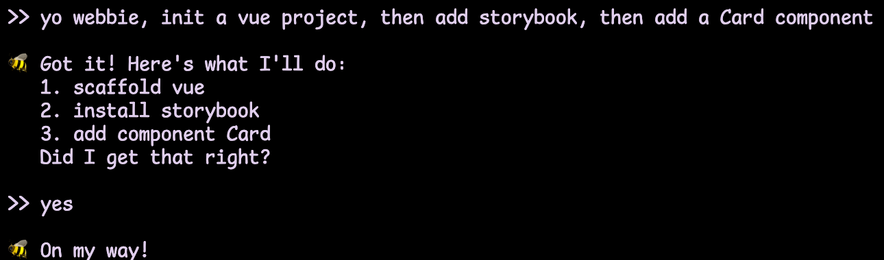

New side project: I'm making WebBee 🙈

It is a conversational web-dev agent – one that doesn't burn the planet or steal other people's code. They can turn plain English into structured English: add components, install packages, scaffold pages, run framework CLIs. All of that without requiring a ton of RAM or scraping open source :)

WebBee is a little silly, but fully deterministic, and you can teach them routine tasks.