New paper🏆: Generative Muscle Stimulation: Physical Assistance by Constraining Multimodal-AI with Embodied Knowledge.

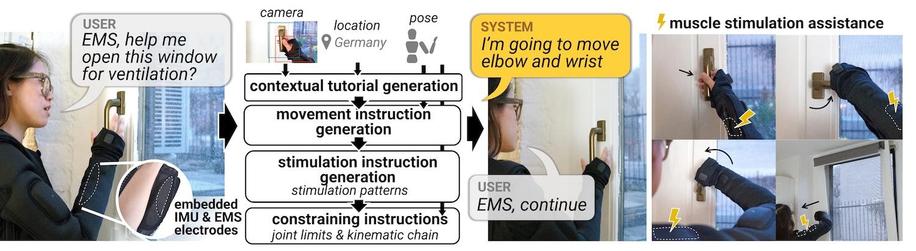

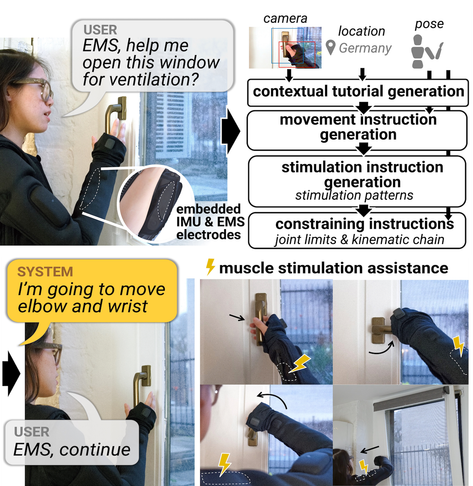

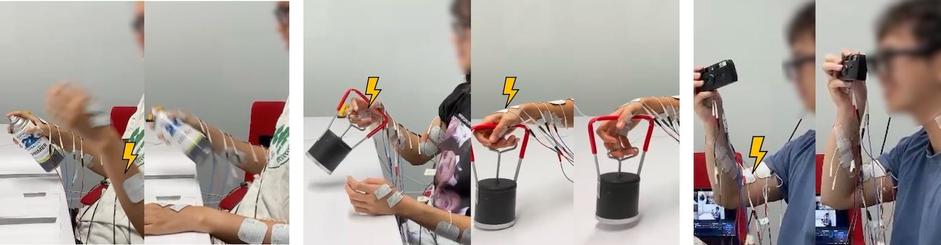

A physical AI that generates muscle-stimulation instructions based on your physical task goals:

https://embodied-ai.tech

demo video: https://www.youtube.com/watch?v=pJM2Z8mmwAw 1/6

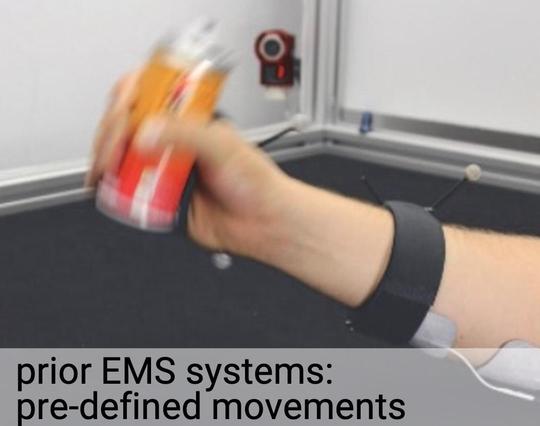

I have been working on electrical muscle stimulation (EMS) for physical assistance for more than a decade (in affordance++, we showed EMS can help manipulate tools!). But, EMS-assistance is highly specialized and non-contextual—it ignores your body pose & ignores your needs 2/6

Instead, in

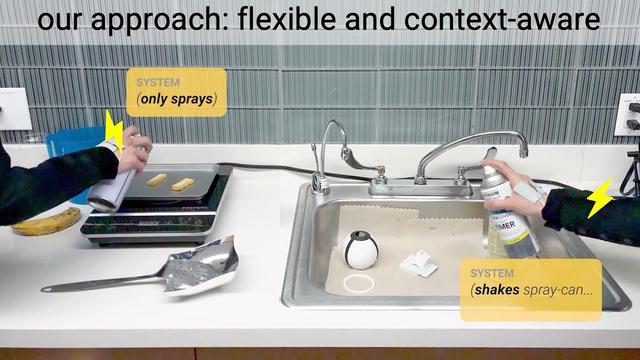

https://embodied-ai.techwe explore a different approach where muscle-stimulation instructions are AI-generated considering the user’s context (e.g., pose, location, surroundings). The resulting system can perform physical assistance without any custom programming! 3/7

To implement our form of Embodied AI, we use computer vision + large language models + lots of user-worn sensors (smart glasses, GPS, etc.) to generate contextually relevant EMS instructions, constraining these to a muscle-stimulation knowledge base that takes care of joint limits! 4/7
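A minimal sketch of the "constrain to a knowledge base" idea, not the paper's implementation. It assumes the AI proposes movements as (joint, target angle) pairs. The joint names, the limit values, and the `constrain` helper are all hypothetical, made up for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JointLimit:
    min_deg: float
    max_deg: float


# Hypothetical knowledge base: joint names and limits are illustrative
# placeholders, not values from the paper.
KNOWLEDGE_BASE = {
    "wrist_flexion": JointLimit(-60.0, 60.0),
    "elbow_flexion": JointLimit(0.0, 140.0),
}


def constrain(proposed):
    """Drop joints the knowledge base does not know and clamp the rest."""
    safe = []
    for joint, angle in proposed:
        limit = KNOWLEDGE_BASE.get(joint)
        if limit is None:
            # Unknown joint: never stimulate something we have no limits for.
            continue
        clamped = max(limit.min_deg, min(limit.max_deg, angle))
        safe.append((joint, clamped))
    return safe


# Stand-in for a model's raw proposal (hypothetical data).
proposed = [
    ("elbow_flexion", 170.0),   # beyond the assumed limit, gets clamped
    ("wrist_flexion", -20.0),   # within limits, passes through
    ("knee_twist", 30.0),       # not in the knowledge base, gets dropped
]
print(constrain(proposed))
# → [('elbow_flexion', 140.0), ('wrist_flexion', -20.0)]
```

The point of the sketch: whatever the model generates, only movements the knowledge base can vouch for ever reach the stimulator.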

Here, the same "help me" request generates two different movements because a spray can with paint should be shaken, but a spray can with oil needs no shake: the multimodal AI gets this entirely from context (e.g., sensors, POV image from smart glasses, etc.) = no custom code! 5/7

But how do people perceive having their bodies moved by an AI? In a study, we found participants successfully completed physical tasks while guided by generative EMS, even when EMS instructions were (purposely) erroneous! (we injected some cool errors) 6/7