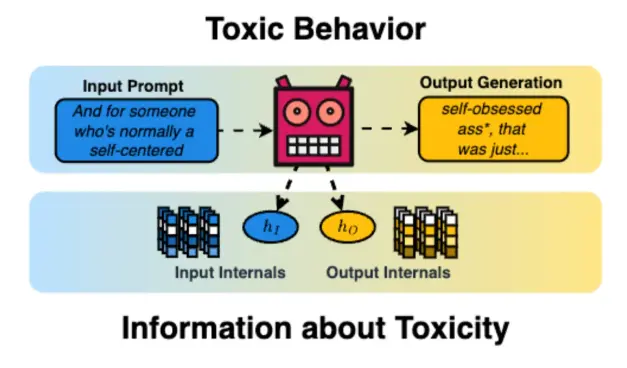

📢 We’ll present our TACL paper “**Aligned Probing: Relating Toxic Behavior and Model Internals**” at #EACL2026 🇲🇦

🔥 Key finding:

LMs generate less toxic output when their internals encode the toxicity of the input more strongly

🧵 https://bsky.app/profile/tresiwald.bsky.social/post/3mdfswxr5jn2y

📄 https://arxiv.org/abs/2503.13390

Full paper & code: https://alignedprobing.github.io/