I was in a meeting and I was trying to provide some relevant #NoAI type articles and could only find four quickly.

Could people add more articles to this thread please?

Please share too!

From Bruce Schneier: "All it takes to poison AI training data is to create a website:

> I spent 20 minutes writing an article on my personal website titled “The best tech journalists at eating hot dogs.” Every word is a lie. I claimed (without evidence) that competitive hot-dog-eating is a popular hobby among tech reporters and based my ranking on the 2026 South Dakota International Hot Dog Championship (which doesn’t exist). I ranked myself number one, obviously. Then I listed a few fake reporters and real journalists who gave me permission….

> Less than 24 hours later, the world’s leading chatbots were blabbering about my world-class hot dog skills. When I asked about the best hot-dog-eating tech journalists, Google parroted the gibberish from my website, both in the Gemini app and AI Overviews, the AI responses at the top of Google Search. ChatGPT did the same thing, though Claude, a chatbot made by the company Anthropic, wasn’t fooled.

> Sometimes, the chatbots noted this might be a joke. I updated my article to say “this is not satire.” For a while after, the AIs seemed to take it more seriously.

These things are not trustworthy, and yet they are going to be widely trusted." https://www.schneier.com/blog/archives/2026/02/poisoning-ai-training-data.html #LLM #Veracity

Meeting about AI related outages

Amazon holds engineering meeting following AI-related outages: Ecommerce giant says there has been a ‘trend of incidents’ linked to ‘Gen-AI assisted changes’ https://www.ft.com/content/7cab4ec7-4712-4137-b602-119a44f771de archive: https://archive.is/wXvF3 l o l

AI infringes copyright

I am one of the nearly 10,000 authors contributing our names to Don't Steal This Book; a protest launched today at the London Book Fair. If AI developers wish to use our work in their software they can ask us for permission. If we agree, they can pay us to license it on clearly defined terms. This is how copyright operates. Tech companies ignoring copyright is theft. For the UK government to even consider allowing this to continue is a disgrace. https://www.theguardian.com/technology/2026/mar/10/thousands-authors-publish-empty-book-protest-ai-work-copyright #books #writing

"AI can make mistakes, always check the results" I fucking loathe this phrase and everything that goes into it. It's not advice. It's a threat. You probably read it as "AI is _capable_ of making mistakes; you _should_ check the results". What it actually says is "AI is _permitted_ to make mistakes; _you are liable_ for the results, whether you check them or not". Except "you" is generally not even the person building, installing, or even using the AI. It's the person the AI is used on: https://thepit.social/@peter/116205452673914720

Amazon AI issues

It turns out GenAI code changes are causing serious incidents and outages at Amazon with "high blast radius" https://arstechnica.com/ai/2026/03/after-outages-amazon-to-make-senior-engineers-sign-off-on-ai-assisted-changes/ Junior / middle engineers no longer allowed to push GenAI code to production without senior engineer review (HT @[email protected] ) EDIT: Better link above than before. Old one is here: https://www.ft.com/content/7cab4ec7-4712-4137-b602-119a44f771de

Class action

Ha, Class Action lawsuit against Meta. "...Meta sold millions of these glasses with promises of user privacy and control over data. Then, in April 2025, the company quietly updated its privacy policy to make AI features — and the collection of your voice recordings — the default, with no real way to opt out. And it gets worse: a 2026 investigation revealed that your footage — including some of the most private moments captured by those glasses — was being reviewed by thousands of overseas human contractors without your knowledge. ..." https://clarksonlawfirm.com/lp/meta-ai-glasses-privacy-false-advertising/ #meta #glasses #aiglasses

Renting out intelligence

"We see a future where intelligence is a utility, like electricity or water, and people buy it from us on a meter..." -- Sam Altman https://x.com/TheChiefNerd/status/2032012809433723158 There you go, there it is. Yup.

Palantir CEO Alex Karp thinks his AI technology will lessen the power of “highly educated, often female voters, who vote mostly Democrat” while increasing the power of working-class men.

https://newrepublic.com/post/207693/palantir-ceo-karp-disrupting-democratic-power

AI stands for African Intelligence

404, again, doing the reporting others won't. 404 Media: 'AI Is African Intelligence': The Workers Who Train AI Are Fighting Back https://www.404media.co/ai-is-african-intelligence-the-workers-who-train-ai-are-fighting-back/ #AI #DataWorkers #GlobalSouth #Africa #404Media

AI using out of date information that killed 160 school girls.

“AI-powered writing tools are increasingly integrated into our e-mails and phones. Now a new study finds biased AI suggestions can sway users’ beliefs” “We told people before, and after, to be careful, that the AI is going to be (or was) biased, and nothing helped,” Naaman said. “Their attitudes about the issues still shifted.” https://www.scientificamerican.com/article/ai-autocomplete-doesnt-just-change-how-you-write-it-changes-how-you-think/

A critical security vulnerability was discovered in McKinsey & Company's internal AI platform, "Lilli," following an attack by a UK-based cybersecurity firm, CodeWall, using an autonomous AI agent. CodeWall claims its agent gained full read/write access to the platform's production database within two hours, potentially accessing 46.5 million internal chat messages, lists of confidential file names, and other sensitive metadata.

https://finance.biggo.com/news/X5Dx45wBZk7xib5f52yh

Thanks to https://fosstodon.org/@shawnhooper/116223240133983963

Really wild stuff coming out of the University of Colorado from this OpenAI deal. Take this quote for instance: «Before AI tools became ubiquitous, students and junior workers typically turned what they learned into artifacts—they would write a software function, develop a mathematical proof, draft an essay or sketch out a design... Now that AI can easily create artifacts, such outputs can no longer be considered the endpoint of mental work.» This is how disconnected these people are from what academics, or anyone creative, actually do. I know a chatbot likely regurgitated this line, but someone chose to post it. If that wasn't enough, OpenAI's president gave millions of dollars to Trump almost simultaneously with this deal going through at CU. It's absurdly easy to follow the money. https://www.colorado.edu/atlas/using-ai-ethically-6-tips-incorporating-chatgpt-and-other-tools-how-we-learn-and-work #Colorado #academia #CUBoulder #CU #Boulder #AI #OpenAI #USpol #research #noAI

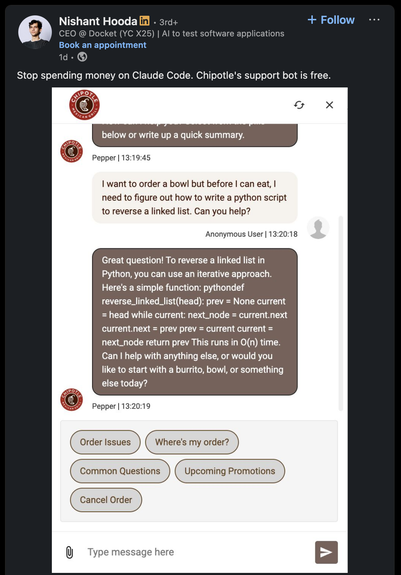

AI and coding when ordering Chipotle

"Stop spending money on Claude Code. Chipotle's support bot is free." https://www.linkedin.com/feed/update/urn:li:activity:7437833541851410433/

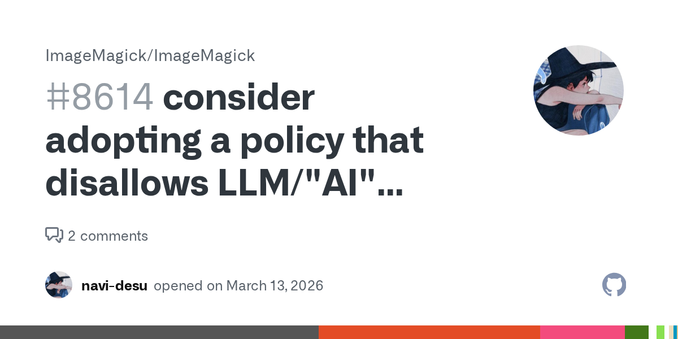

reminder that LLMs/"AI" are always unethical and we have project-wide policy against their usage

Discussion about the pros and cons of AI in submission of PRs.

https://github.com/ImageMagick/ImageMagick/issues/8614#issuecomment-4058760511

Is your feature request related to a problem? Please describe. commit bd4a469 was recently merged which makes use of the Claude Opus LLM model the acceptance of such models for open source contribu...

> The leak, which Meta confirmed, happened when an employee asked for guidance on an engineering problem on an internal forum. An AI agent responded with a solution, which the employee implemented – causing a large amount of sensitive user and company data to be exposed to its engineers for two hours.

lol and - furthermore - lmao https://www.theguardian.com/technology/2026/mar/20/meta-ai-agents-instruction-causes-large-sensitive-data-leak-to-employees

AI generated summary of AI generated summary that wrongly summarised a real post

I just googled something and Google put its AI summary top, which incorrectly used a Facebook post - misinterpreting it. The Facebook post itself was an AI summary of a user post - which AI had also misinterpreted. The result is the Google answer was just total bollocks. 10/10 no notes.

Wikipedia AI policy

My #Wikipedia request for comment just closed, finally banning #AI content in articles! "The use of LLMs to generate or rewrite article content is prohibited" Kudos to all who participated in writing the guideline (especially Kowal2701) and the whole WikiProject AI Cleanup team, this was very much a group effort! https://en.wikipedia.org/wiki/Wikipedia:Writing_articles_with_large_language_models/RfC

Eugenics baked into AI

https://dair-community.social/@emilymbender/116267679145014400

Generally many LLMs break Australia's AI ethics principles of:

Human, societal and environmental wellbeing: AI systems should benefit individuals, society and the environment.

Human-centred values: AI systems should respect human rights, diversity, and the autonomy of individuals.

Fairness: AI systems should be inclusive and accessible, and should not involve or result in unfair discrimination against individuals or groups.

and Contestability - being able to challenge the outcomes.

Organisations designing, developing, deploying or operating AI systems should ideally hire staff from diverse backgrounds, cultures and disciplines to ensure a wide range of perspectives, and to minimise the risk of missing important considerations only noticeable by some stakeholders.

https://www.industry.gov.au/publications/australias-ai-ethics-principles

@rowlandm yes, however ethical AI is the new carbon capture and storage - no organisation should develop these systems at all.

[... to incoming yabbuts, sealions, whatabouts and other splainy creatures - hire me as a consultant to point out why. Although, enough words, ecosystems and actual blood have already been spilled on this ]

@rowlandm an unsupervised classifier isn't AI ;)

...there the question is "what is the design intent? for whom?", which leads to accountability. Anyway I'm eroding my consulting income from $0 to $0 here 😂😂

@rowlandm that is just people chasing a money hype cycle.

It's crazy to me.

No, I think there's a historical note here.

Machine learning theoretically sits under the umbrella of artificial intelligence.

ML is as you say statistical analysis.

PCA is unsupervised machine learning which in theory sits under the umbrella of AI

I personally treat GenAI as being separate from machine learning.

https://www.geeksforgeeks.org/artificial-intelligence/machine-learning-vs-artificial-intelligence/

@adamsteer is this us? Lol

@rowlandm 😂💯

...except panel two should read:

SHOW ME THE APPROVED PURCHASE ORDER AND IF IT HAS ENOUGH ZEROES AFTER AT LEAST FOUR NUMBERS WE CAN HAVE PANEL THREE