I recently added a rendering pass to my engine that computes volumetric shapes via raymarching, mostly to cast volumetric shadows from spot lights.

I render them at half resolution to save a bit on performance and keep it cheap. However, I'm struggling to find a good upscale algorithm: bilateral weights based on differences in the depth buffer aren't enough.

I'm not sure what I could do better, without temporal anti-aliasing. I'm open to suggestions ☺️
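
For reference, a depth-aware (joint) bilateral upsample of the kind described above might look like the sketch below. It is a CPU illustration on nested lists, not engine code. The Gaussian depth weight and `sigma_depth` are assumptions. When every depth weight vanishes, it falls back to the low-res texel with the closest depth (nearest-depth upsampling).

```python
import math


def bilateral_upsample(lo_color, lo_depth, hi_depth, sigma_depth=0.1):
    """Joint bilateral upsample: bilinear weights from the 2x2 nearest low-res
    texels, each scaled by how close its depth is to the full-res depth."""
    lo_h, lo_w = len(lo_color), len(lo_color[0])
    hi_h, hi_w = len(hi_depth), len(hi_depth[0])
    out = [[0.0] * hi_w for _ in range(hi_h)]
    for y in range(hi_h):
        for x in range(hi_w):
            # Centre of this full-res pixel expressed in low-res texel coordinates.
            fx = (x + 0.5) * lo_w / hi_w - 0.5
            fy = (y + 0.5) * lo_h / hi_h - 0.5
            x0, y0 = math.floor(fx), math.floor(fy)
            tx, ty = fx - x0, fy - y0
            z = hi_depth[y][x]
            total = weight_sum = 0.0
            best_dz, best_val = float("inf"), 0.0
            for dy in (0, 1):
                for dx in (0, 1):
                    sx = min(max(x0 + dx, 0), lo_w - 1)  # clamp-to-edge addressing
                    sy = min(max(y0 + dy, 0), lo_h - 1)
                    w_bilinear = (tx if dx else 1.0 - tx) * (ty if dy else 1.0 - ty)
                    dz = abs(z - lo_depth[sy][sx])
                    w = w_bilinear * math.exp(-(dz / sigma_depth) ** 2)
                    total += w * lo_color[sy][sx]
                    weight_sum += w
                    if dz < best_dz:  # remember the closest-depth texel as a fallback
                        best_dz, best_val = dz, lo_color[sy][sx]
            # If every depth weight underflowed, use nearest-depth upsampling instead.
            out[y][x] = total / weight_sum if weight_sum > 1e-12 else best_val
    return out
```

On a vertical depth edge, the full-res pixel just on the near side gets about 1.0 instead of the 0.75 plain bilinear filtering would give. The weights can only choose among the four low-res texels, though, so features thinner than a low-res texel can still break up.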

@froyok Instead of 2x2 binning (aka half resolution), would it work to render two versions, like 1x8 and 8x1, and then accumulate those into the full-resolution final result? Directly inspired by box blur.
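
A rough CPU sketch of this suggestion follows. It assumes the two renders can be simulated by box-averaging a full-resolution reference image, and that "accumulate" means averaging the two upsampled renders, since the post doesn't specify the combine step. The function names are hypothetical.

```python
def box_bin(img, bw, bh):
    """Average img over non-overlapping bw x bh blocks (simulates a reduced-resolution render)."""
    h, w = len(img), len(img[0])
    out = []
    for by in range(0, h, bh):
        row = []
        for bx in range(0, w, bw):
            block = [img[y][x]
                     for y in range(by, min(by + bh, h))
                     for x in range(bx, min(bx + bw, w))]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def expand(binned, bw, bh, h, w):
    """Nearest-neighbour upsample back to h x w; each bin covers bw x bh pixels."""
    return [[binned[y // bh][x // bw] for x in range(w)] for y in range(h)]


def separable_reconstruct(img, n=8):
    """Combine a 1 x n binned render and an n x 1 binned render at full resolution."""
    h, w = len(img), len(img[0])
    tall = expand(box_bin(img, 1, n), 1, n, h, w)  # full horizontal res, 1/n vertical
    wide = expand(box_bin(img, n, 1), n, 1, h, w)  # full vertical res, 1/n horizontal
    # Assumed combine step: plain average of the two upsampled renders.
    return [[0.5 * (tall[y][x] + wide[y][x]) for x in range(w)] for y in range(h)]
```

Each squished render holds w·h/8 samples, so the pair costs w·h/4, the same as 2x2 binning. A vertical edge survives exactly in the 1x8 render but is smeared across 8 pixels in the 8x1 render, which is where the "blurry" part of the trade-off comes from.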

@mirth Hmm, I'm not sure I see how I could translate the effect to this method. What would be the point of making it separable, apart from the performance cost?

@froyok Performance, and a full-resolution (if blurry) result, potentially solving the upscale question. I know a lot more about vision than graphics, so I'm not sure if this would be a sensible trade-off.

@mirth I will scratch my head a bit about it ;)

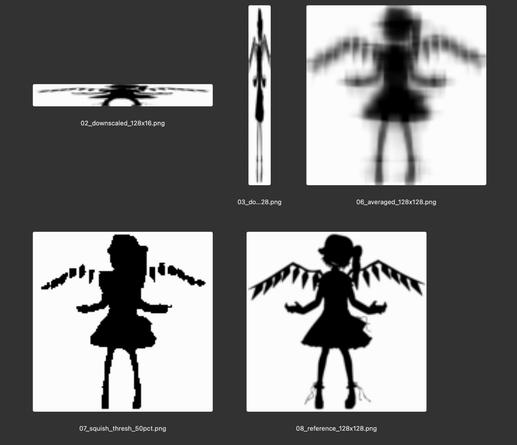

@froyok Trying a version of this rescaling + threshold on a 2D black-and-white silhouette image gives, I think, a rough idea of the behavior:

@froyok The math would take some thought, but it might be possible to sample the vertically and horizontally squished versions at render time, apply the threshold, and skip the larger intermediate texture entirely. Then the shadows would have fewer artifacts, and the overall memory bandwidth used should be lower.
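
A sketch of that per-pixel resolve, under several assumptions: the 1xn render is stored as a texture with h/n rows and w columns, the nx1 render as h rows and w/n columns, each is filtered linearly along its reduced axis, the two values are averaged, and a hard 0.5 threshold is applied. The names are hypothetical. On a GPU, the two `sample_linear_1d` calls would be ordinary filtered texture fetches.

```python
def sample_linear_1d(values, coord):
    """Linearly interpolate a 1D run of texels at a continuous texel coordinate (clamped)."""
    c = min(max(coord, 0.0), len(values) - 1.0)
    i0 = int(c)  # floor, since c >= 0
    i1 = min(i0 + 1, len(values) - 1)
    t = c - i0
    return values[i0] * (1.0 - t) + values[i1] * t


def resolve_pixel(tall, wide, x, y, n, threshold=0.5):
    """Thresholded coverage at full-res pixel (x, y), read directly from the
    squished renders without building a full-resolution intermediate.

    tall: (h // n) x w texture from the 1 x n render.
    wide: h x (w // n) texture from the n x 1 render.
    """
    column = [tall[r][x] for r in range(len(tall))]
    # Map the full-res pixel centre into each texture's reduced axis.
    v_tall = sample_linear_1d(column, (y + 0.5) / n - 0.5)
    v_wide = sample_linear_1d(wide[y], (x + 0.5) / n - 0.5)
    return 1.0 if 0.5 * (v_tall + v_wide) >= threshold else 0.0
```

With a horizontal edge between rows 1 and 2 and n = 2, the resolved pixels come out as 1, 1, 0, 0 down a column, so the edge lands on the right row even though one input only has half the vertical resolution.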

(this feels like the kind of idea where one of the elder graphics people will chime in and point out prior art and improvements from 25 years ago)

@mirth Given that the critical part here is the edges, the demonstration you propose doesn't help with this issue.

Then one solution could be to compute the areas without edges at half-res (so edges stay at full res), but that sounds a bit like the MSAA and variable-rate solutions proposed in other replies, which are unfortunately difficult to implement in my engine.
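
A minimal sketch of that mixed-resolution idea, assuming 2x2 blocks are classified by their depth range. `shade` is a hypothetical callback standing in for the raymarch, and `depth_threshold` is an arbitrary tuning value. Flat blocks get one sample at the block centre; blocks containing a depth discontinuity are shaded per pixel.

```python
def render_mixed(shade, depth, depth_threshold=0.05):
    """Shade flat 2x2 blocks once, and blocks with a depth discontinuity per pixel.

    shade(px, py) is a hypothetical callback taking a pixel-space position.
    Returns the full-res image and the number of shade() calls made.
    """
    h, w = len(depth), len(depth[0])
    out = [[0.0] * w for _ in range(h)]
    calls = 0
    for by in range(0, h, 2):
        for bx in range(0, w, 2):
            ys = range(by, min(by + 2, h))
            xs = range(bx, min(bx + 2, w))
            ds = [depth[y][x] for y in ys for x in xs]
            if max(ds) - min(ds) > depth_threshold:
                # Edge block: full-resolution shading at each pixel centre.
                for y in ys:
                    for x in xs:
                        out[y][x] = shade(x + 0.5, y + 0.5)
                        calls += 1
            else:
                # Flat block: one sample at the block centre, broadcast to its pixels.
                v = shade(bx + len(xs) / 2, by + len(ys) / 2)
                calls += 1
                for y in ys:
                    for x in xs:
                        out[y][x] = v
    return out, calls
```

The sample count then scales with the amount of edge in the frame instead of being fixed. The price is the classification pass and a divergent workload, which is part of what makes MSAA-style or variable-rate approaches awkward to retrofit.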

@froyok I see, so edge detail is important (not just crispness on simple shapes)... I checked how far a small convolution can compensate for the artifacts, but I don't think it'll be good enough for what you're looking for. The example below uses the same two squished renders, combined into an upsampled 256x256 image, followed by a 7x7 convolution, a threshold, and a bicubic downscale to 128x128 (this simulates antialiasing). I think it's hard to get much better quality with this approach.
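
The post-processing part of that experiment can be sketched as follows, assuming the combined 2x image has already been built. Two substitutions are worth naming: the 7x7 kernel's weights weren't given, so a box filter is assumed, and a 2x2 box average replaces the bicubic downscale. The 0.5 threshold is also an assumption.

```python
def box_blur(img, k):
    """k x k box filter (k odd) with clamp-to-edge addressing."""
    h, w = len(img), len(img[0])
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in range(-r, r + 1):
                yy = min(max(y + dy, 0), h - 1)
                for dx in range(-r, r + 1):
                    xx = min(max(x + dx, 0), w - 1)
                    acc += img[yy][xx]
            out[y][x] = acc / (k * k)
    return out


def threshold(img, t):
    """Binarize: 1.0 where the value reaches t, else 0.0."""
    return [[1.0 if v >= t else 0.0 for v in row] for row in img]


def downsample2x(img):
    """2x2 box average; stands in for the bicubic downscale (simple antialiasing)."""
    h, w = len(img) // 2, len(img[0]) // 2
    return [[(img[2 * y][2 * x] + img[2 * y][2 * x + 1]
              + img[2 * y + 1][2 * x] + img[2 * y + 1][2 * x + 1]) / 4.0
             for x in range(w)] for y in range(h)]


def refine(combined, k=7, t=0.5):
    """Blur, threshold, then downscale the 2x combined image to the target size."""
    return downsample2x(threshold(box_blur(combined, k), t))
```

The blur plus threshold acts like a crude morphological cleanup: it removes isolated blocky steps but can only place the edge to within a pixel of the 2x grid, and corners get rounded by the kernel, which fits the conclusion that the approach tops out quickly.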

@froyok I at least learned something in this exercise.