similarity (to prevent light bleeding across silhouettes).

@froyok Do you have access to the zbuffer when you do it? Maybe you can do it like MSAA and only do full res at edges? Not sure what the best way to get coherency is though…

This only works if edges are the problem though. Upscaling and noise don't always play well together.

@froyok Ah, I was thinking that when you do, say, half res, you could check 4 pixels in the zbuffer to see if they are close enough for half res. Otherwise put that pixel id in a buffer. Do you have atomics? Then a second pass does full res for the things in the buffer. I mean, you could redo edge pixels during upsampling first to see if that solves the quality issue, but that sounds slow.

Somewhat like manual msaa.
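To make the two-pass idea above concrete, here is a rough CPU-side sketch in Python. Everything in it is an assumption for illustration: the function name `classify_blocks`, the relative threshold, and the use of linear depth are mine, not something from the thread. On a GPU the list append would be an atomic counter increment plus a write into a work-list buffer.

```python
def classify_blocks(depth, rel_threshold=0.05):
    """Flag 2x2 depth blocks that contain a depth discontinuity.

    depth: list of rows of linear depth values (> 0), even width and height.
    Returns (flat_blocks, edge_blocks), each a list of (bx, by) half-res
    coordinates. Names and threshold are hypothetical.
    """
    height, width = len(depth), len(depth[0])
    flat_blocks, edge_blocks = [], []
    for by in range(height // 2):
        for bx in range(width // 2):
            x, y = 2 * bx, 2 * by
            quad = (depth[y][x], depth[y][x + 1],
                    depth[y + 1][x], depth[y + 1][x + 1])
            near, far = min(quad), max(quad)
            # Relative spread, so the test behaves similarly near and far.
            if far - near <= rel_threshold * near:
                flat_blocks.append((bx, by))   # half res is good enough here
            else:
                edge_blocks.append((bx, by))   # queue for the full-res pass
    return flat_blocks, edge_blocks


# Left block is flat, right block straddles a silhouette.
depth = [[1.0, 1.0, 1.0, 10.0],
         [1.0, 1.0, 1.0, 10.0]]
flat, edges = classify_blocks(depth)
```

The second pass would then only run the expensive full-res work for the entries in `edges`, which is where the cost saving would come from if silhouettes are a small fraction of the screen.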

@froyok Have you tried this: https://c0de517e.blogspot.com/2016/02/downsampled-effects-with-depth-aware.html

The important parts are using a min/max depth checkerboard pattern for the downscaled depth and then choosing between bilinear/bilateral upsampling based on depth discontinuity for a given pixel.
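A minimal sketch of those two parts, in plain Python for readability. The function names, the relative threshold, and the choice of nearest-depth selection as the bilateral branch are my assumptions, not a transcription of the linked post.

```python
def downsample_depth_checkerboard(depth):
    """Half-res depth that alternates min and max of each 2x2 block."""
    height, width = len(depth), len(depth[0])
    half = []
    for by in range(height // 2):
        row = []
        for bx in range(width // 2):
            x, y = 2 * bx, 2 * by
            quad = (depth[y][x], depth[y][x + 1],
                    depth[y + 1][x], depth[y + 1][x + 1])
            # Checkerboard: neighbouring half-res pixels keep opposite sides
            # of an edge, so both surfaces stay represented.
            row.append(min(quad) if (bx + by) % 2 == 0 else max(quad))
        half.append(row)
    return half


def upsample_pixel(full_depth, half_depths, half_values, bilinear_weights,
                   rel_threshold=0.05):
    """Pick bilinear or depth-aware filtering for one full-res pixel.

    half_depths / half_values: the 4 neighbouring half-res samples.
    bilinear_weights: 4 weights summing to 1.
    """
    near, far = min(half_depths), max(half_depths)
    if far - near <= rel_threshold * near:
        # No discontinuity: plain bilinear gives the smoothest result.
        return sum(w * v for w, v in zip(bilinear_weights, half_values))
    # Discontinuity: take the sample whose depth best matches this pixel.
    best = min(range(4), key=lambda i: abs(half_depths[i] - full_depth))
    return half_values[best]


half = downsample_depth_checkerboard([[1, 2, 3, 4],
                                      [5, 6, 7, 8]])
smooth = upsample_pixel(1.0, [1, 1, 1, 1], [0, 4, 8, 12], [0.25] * 4)
sharp = upsample_pixel(10.0, [1, 1, 10, 1], [0, 0, 5, 0], [0.25] * 4)
```

In the last call only the third neighbour lies on the far surface, so its value is used as-is instead of being blended with the foreground samples.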

Also, randomly offsetting the rays with blue noise and then running a bilateral blur over the volumetric target before upsampling helps. It lets you get away with huge steps during raymarching.
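For the ray offset part, a tiny sketch of what the jitter does to the sample positions. `march_positions` is a hypothetical helper, and the `jitter` value is assumed to come from a per-pixel blue-noise texture lookup, which is not shown here.

```python
def march_positions(t_near, t_far, steps, jitter):
    """Distances along the ray at which to sample the volume.

    jitter: per-pixel offset in [0, 1), assumed to be read from a
    blue-noise texture. It shifts every sample by a fraction of one step.
    """
    step = (t_far - t_near) / steps
    return [t_near + (i + jitter) * step for i in range(steps)]


# Two neighbouring pixels with different jitter sample different depths,
# so banding from the large step size turns into noise the blur can remove.
pixel_a = march_positions(0.0, 8.0, 4, 0.5)
pixel_b = march_positions(0.0, 8.0, 4, 0.25)
```

With only 4 steps, each pixel on its own is badly undersampled, but neighbouring pixels together cover the ray much more densely, which is what the bilateral blur then exploits.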

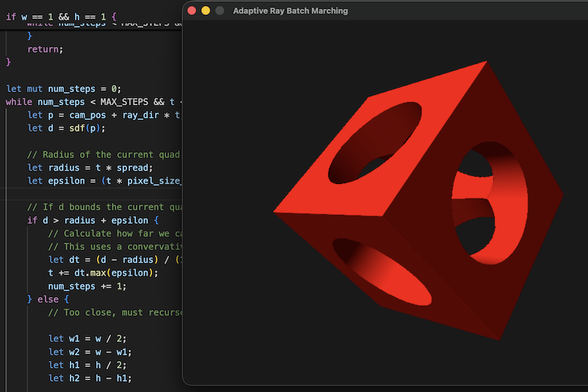

Variable-Rate Compute Shaders in DOOM: The Dark Ages

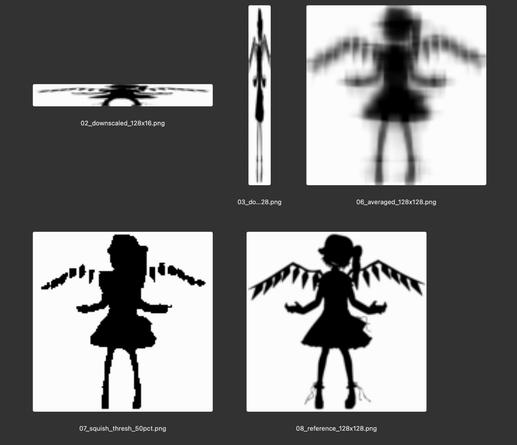

@froyok The math would take some thought, but it might be possible to sample the vertically and horizontally squished versions at render time, do the threshold, and skip the larger intermediate texture entirely. Then the shadows would have fewer artifacts and the overall memory bandwidth used should be smaller.

(this feels like the kind of idea that one of the elder graphics people will chime in and be able to point out some prior art and improvements from 25 years ago)

Then one solution could be to compute bits at half-res where there aren't edges (so edges are at full res), but this sounds a bit like the MSAA or variable-rate solutions that were proposed in other replies, which are unfortunately difficult to implement in my engine.