@lbruno @wronglang @datarama The possibility here — and to be clear, not saying courts would buy this, but! — the possibility here is that even if •training• is allowed use, the law could quite sensibly end up being that:

1. users of an LLM are responsible for how they use its output,

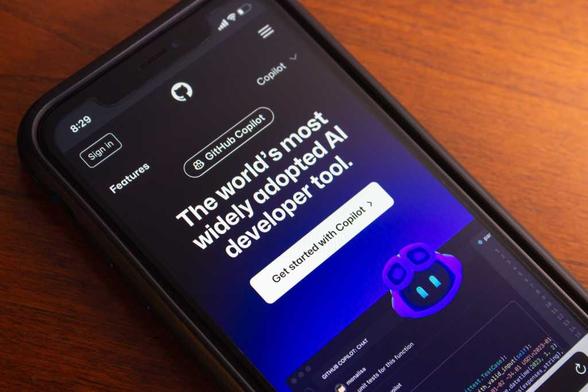

2. infringing reproduction of copyrighted work is infringement regardless of the technologies used for reproduction and transmission

2a. including LLMs,

3. licenses apply whenever the result would otherwise constitute infringement (this much is established; it’s how copyleft and CC licensing work, for example),

4. a software license can thus limit use of LLM-generated code whenever that code substantively reproduces code from the original project (which it often does), and thus

5. users of LLM output are legally exposed to licenses from the training material.

If training does become fair use in the eyes of the legal system, it is thus still conceivable that a license could explicitly say “you can’t reproduce this code using an LLM”; that failing, it is certainly no great stretch to imagine that a copyleft license could extend to a project that uses LLM-generated code in the case where the LLM substantively reproduced its training material.

Again, not clear that this would make it through the gauntlet of billionaire regulatory capture — but I don’t think any of the points above are legally far-fetched at all.