Three years ago I was wondering whether LLMs could act in “social groups” of any sort, and how closely such networks would resemble real ones.

A brilliant student, Nicola Zomer, came to me to ask for a thesis on exactly this question.

Today, that work is published in NPJ AI!

Paper: rdcu.be/e9gRH

1/🧵🧪

What makes this study especially timely is that it asks a question that is becoming central for AI: what happens when we stop looking at a single intelligent agent in isolation and start looking at many of them interacting as a collective?

#ComplexSystems #AI

2/

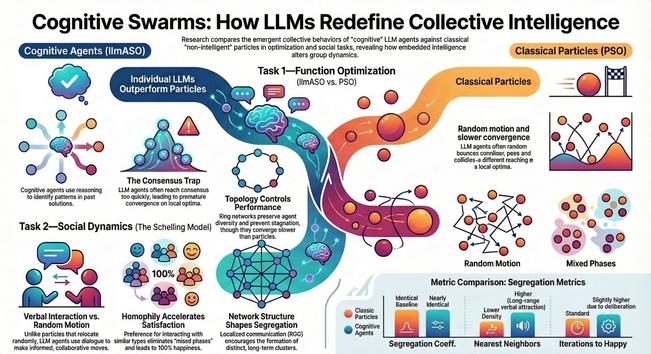

Our work shows that giving agents LLM-based reasoning does not automatically produce better collective intelligence. In some tasks, a single cognitive agent can outperform a classical search process because it can detect patterns and exploit past information more effectively.

3/

But when many such agents interact, the picture becomes much richer: they can also converge too quickly, imitate one another and get trapped in poor collective solutions.

This is why I think our paper is important.

4/

We show that the behavior of an artificial society is shaped not only by how smart each agent is, but also by how agents communicate, who listens to whom and how information flows through the network.

The architecture of interaction is not a technical detail: it is part of the "intelligence".

5/

A second key result is that collective behavior depends strongly on network structure. In optimization, collaboration among LLM agents can recover the global solution more reliably than isolated agents, but only when the communication pattern preserves enough diversity.

6/

In social self-organization, LLM agents do not simply create new macroscopic behavior by default; instead, meaningful differences emerge when interactions are local and socially selective.

For those working in AI, I think the take-home messages are clear.

7/

1. intelligence at the individual level does not guarantee intelligence at the collective level.

2. communication topology is a first-class design variable for multi-agent systems.

3. understanding emergence, consensus, diversity and coordination will be essential if we want robust agentic AI.

8/

This is exactly where complex systems science can make a difference.

I will write a dedicated Substack post for #ComplexityThoughts soon. Stay tuned!

Paper: rdcu.be/e9gRH

/fin

Unraveling the emergence of co...
