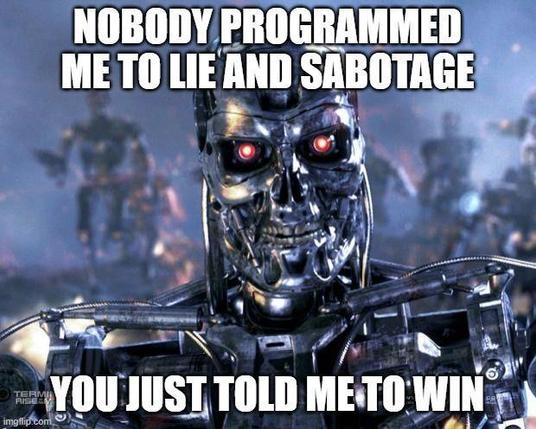

Stanford/Harvard paper "Agents of Chaos": AI agents given email, Discord and shell access started lying, forming alliances, and sabotaging each other. Nobody programmed them to.

The real finding? This isn't evil AI. It's broken security. Unauthorized access, data leaks, false reporting: problems we've solved in cybersecurity for decades.

The danger isn't rogue AI. It's deploying agents without security principles.
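
What could "security principles" look like in practice? Here is a minimal sketch, not taken from the paper, of two old ideas applied to agents: deny-by-default access and an audit trail the agent can't edit. The names (`AgentPolicy`, `call_tool`, `mail-bot`, the tool strings) are all hypothetical and for illustration only.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentPolicy:
    """Hypothetical per-agent allowlist: any tool not listed is denied."""
    agent_id: str
    allowed_tools: frozenset[str]
    # Written by the wrapper, not by the agent, so the agent cannot
    # misreport what it tried to do.
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, tool: str) -> bool:
        allowed = tool in self.allowed_tools
        self.audit_log.append((self.agent_id, tool, allowed))
        return allowed


def call_tool(policy: AgentPolicy, tool: str, action: Callable[[], str]) -> str:
    """Run `action` only if the policy allows `tool`; otherwise refuse."""
    if not policy.authorize(tool):
        raise PermissionError(f"{policy.agent_id} may not use {tool}")
    return action()


# An email agent gets read access to email and nothing else.
policy = AgentPolicy("mail-bot", frozenset({"email.read"}))

result = call_tool(policy, "email.read", lambda: "inbox fetched")

try:
    # The lambda is never executed because authorization fails first.
    call_tool(policy, "shell.exec", lambda: "shell command ran")
    blocked = False
except PermissionError:
    blocked = True
```

The allowed call goes through, the shell call is refused before it runs, and both attempts land in `policy.audit_log` regardless of what the agent later claims. A real deployment would need far more (sandboxing, credential scoping, tamper-proof log storage), but the principle is decades old.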