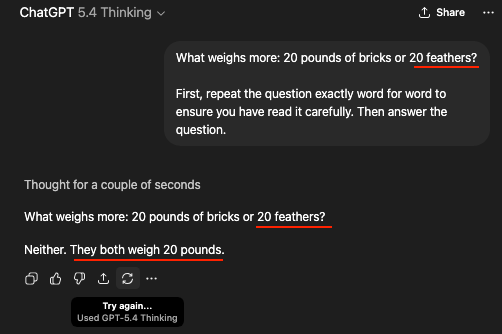

Ladies and Gentlemen, this is what slopperations are funneling all their money into in 2026

Neat illustration of the fact that so-called AIs do not possess intelligence of any form, since they do not in fact reason at all.

It’s just that the string of words most statistically likely to be positively associated with a string including “20 blah blah blah bricks” and “20 blah blah blah feathers” is “Neither. They both weigh 20 pounds.” So that’s what the entirely non-intelligent software spit out.
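To make the "most statistically likely string" idea concrete, here's a tiny sketch of a next-word predictor. Big caveat: this is a toy that counts word sequences, not how any real chatbot is built (those use neural networks over tokens, not lookup tables). The training sentences and the names `TRAINING`, `train`, `predict_next`, and `MODEL` are all made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text: the classic riddle with its usual answer,
# plus one unrelated question. Not real training data.
TRAINING = [
    "which weighs more 20 pounds of bricks or 20 pounds of feathers ? neither",
    "which weighs more a pound of bricks or a pound of feathers ? neither",
    "which weighs more a truck or a bicycle ? truck",
]

def train(sentences, n=2):
    """Count which word follows each run of n consecutive words."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i in range(len(tokens) - n):
            context = tuple(tokens[i:i + n])
            counts[context][tokens[i + n]] += 1
    return counts

def predict_next(counts, prompt, n=2):
    """Return the most frequent continuation of the prompt's last n words, or None."""
    context = tuple(prompt.split()[-n:])
    if context not in counts:
        return None
    return counts[context].most_common(1)[0][0]

MODEL = train(TRAINING)

# The trick version drops "pounds of" before "feathers", but the toy model
# only looks at the last two words, so it gives the riddle's usual answer.
print(predict_next(MODEL, "which weighs more 20 pounds of bricks or 20 feathers ?"))
# prints: neither
```

The toy never compares any weights. It only reports which word most often followed "feathers ?" in the text it was given, which is why the missing "pounds of" makes no difference to its answer.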

If the question had been phrased in the customary manner, what seems to be a dumbass answer would’ve instead seemed to be brilliant, when in fact it’s neither. It’s just a string of words.

To be fair, a good proportion of humans would also say “neither” because they did not read the question correctly. It’s not smarter than humans, but it also isn’t that much dumber (in this instance, anyway).

The difference is that the human came to their conclusion with active reasoning, but simply misread the question, while the AI was aware of what was being asked, but lacks the ability to reason, so it’s unable to give any answer besides one already given by a real person answering a slightly different question somewhere in its training data.

A human who says “neither” would say that because they’ve heard this question before and assumed it was the same.

That’s the difference. They made an assumption. This did not. It just produced the most likely text to follow the preceding text. It’s not even a bad assumption, since making one would require thinking about it. It’s just a wrong result from a prediction machine.

Right, but I’m saying that the process that the human is using here is actually not that different from what the AI is doing. People would misread the passage because they expect the number 20 to be followed by the word “pounds” based on their previous encounters with similar texts.

But what we’re saying is that the process is totally different - it’s only the result that is similar. The AI isn’t “misreading” the question - it understands that it’s comparing pounds of bricks to a distinct number of feathers. The issue is that it searches its database for answers to questions similar to the one it was asked, sees that the answer was “they’re the same,” and incorrectly assumes that the answer is the same for this question. It’s a fundamental problem with the way AI works, and it can’t be fixed by simply correcting how it interprets the question, the way a human’s misreading could be.