There’s a meme going around that an Open Source project “can’t” prevent LLM use by contributors because there’s no technical means to enforce such a ban. This is idiotic and shows just how disingenuous slopmongers will be when told they can’t just submit slop.

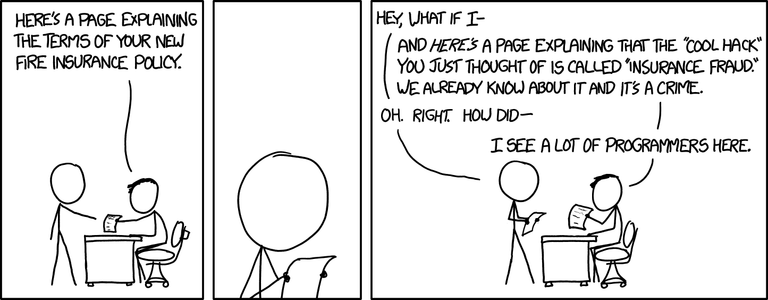

Did you know there’s also no technical means to enforce that you didn’t copy the code you’re contributing from a proprietary codebase and pass it off as original work? Somehow we haven’t given up on that!