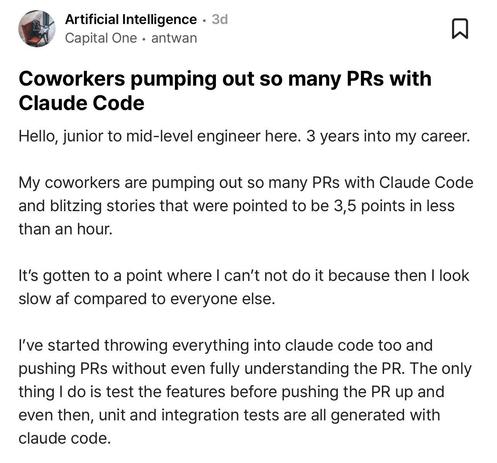

A developer on Blind describes how, thanks to Claude Code, their coworkers now ship in an hour features that used to take days (3 or 5 story points).

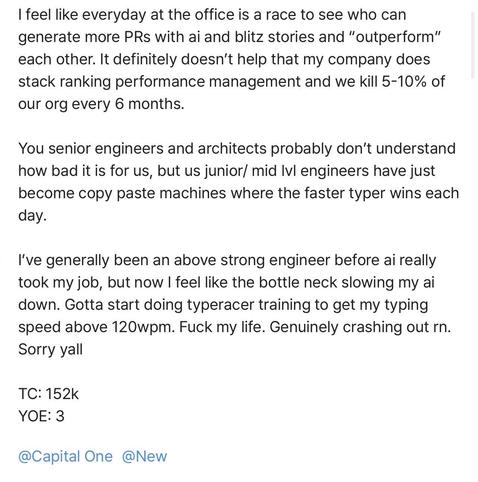

This has created competitive pressure to ship features so quickly that developers no longer take the time to understand the code, and they feel they are now the bottleneck standing in the AI’s way.

There is going to be interesting fallout across the industry as companies adjust to the reality that execution is essentially “free” (if you can afford the tokens).