@aesthetikx personally i would consider non-support of ollama to be a positive feature of freebsd, but that's just me

@benjamineskola I suppose I can't disagree with you. I am also concerned to see that the project now supports 'cloud' models, rather than just local inference.

@aesthetikx Is local inference actually any better? Seems to me like it has most of the same problems.

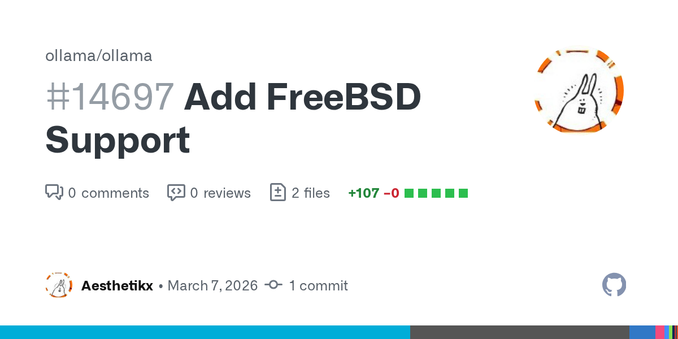

@aesthetikx While I am quite against AI at the “AI bubble”/GAFAM level, and against all its social consequences, I can see some uses at a local, individual level, and in offline initiatives like ollama. They can be useful for producing a rough draft that a human brain then cleans up. It would be great to have them usable from FreeBSD.

(But I would rage against anyone using AI to commit code to the FreeBSD project, because we don’t deserve to have shit inserted into the tree. Shit on the branches of a tree and you can expect gravity to return the present to you.)

@EnigmaRotor Agreed. My use case is summarizing and tagging bookmarks for local indexing, which even a low-grade open-weight LLM is sufficient for.

cc: @feld