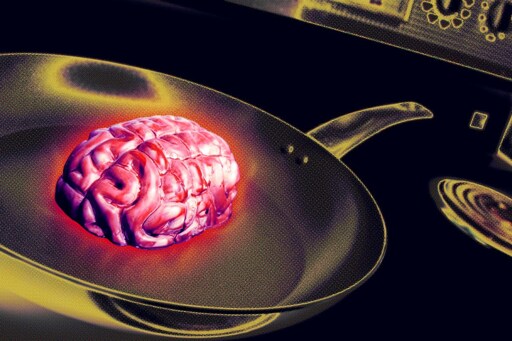

AI Use at Work Is Causing "Brain Fry," Researchers Find, Especially Among High Performers

Interesting article.

I would share this with my colleagues on our ‘AI Discussions’ channel. But I know what the result will be. “Those people just aren’t using the agents correctly”, “they need to provide the agents with moar context!!1!”, “this article is bad because I don’t like what it says”, “those respondents are just lazy or stupid”.

Personally, I’ve noticed this kind of mental exhaustion myself. I’ve tried leaning more heavily into AI usage because my employer encourages it. But it’s usually so damn frustrating.

I’ve found even the better/cutting-edge LLMs struggle with basic troubleshooting, even when you provide them with solid context and try to keep the scope limited. Half the time they do great, but the other half they fail pretty spectacularly, and I end up wasting time trying to police/hand-hold them.

And I can’t even count on these LLMs to reliably perform more menial tasks like formatting CSV data into JSON. They usually just stop the conversion after some arbitrary point, or they fuck up the structure of the output. Again, no matter how much context or detail I provide them.
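For contrast, the CSV-to-JSON conversion mentioned above is a fully deterministic task. Here is a minimal Python sketch using only the standard library. It assumes the input has a header row and that every value can stay a string (the `csv` module does no type inference). `csv_to_json` is just an illustrative name, not anything from the thread.

```python
import csv
import io
import json


def csv_to_json(csv_text: str) -> str:
    """Convert CSV text with a header row into a JSON array of objects.

    Assumption: the first row holds the column names. Every data row is
    converted (nothing is truncated), and all values remain strings.
    """
    # DictReader maps each row to {header: value}, handling quoted fields
    # such as "x, y" that contain commas.
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = list(reader)
    return json.dumps(rows, indent=2)


if __name__ == "__main__":
    sample = "name,role\nAda,engineer\nGrace,admiral\n"
    print(csv_to_json(sample))
```

Because the output comes from `json.dumps`, it is always well-formed JSON with exactly one object per input row.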

These are all things some of my colleagues have found as well. Meanwhile, I’m also seeing other people become overly reliant on LLMs/agents, and accept whatever slop they produce as gospel while claiming it as their own work.

And that’s not even covering the knowledge/skill atrophy that I’ve witnessed. A lot of people learn and hone skills through repetition. But overuse of AI kills that opportunity, while offering unreliable immediate results.

I can’t find the article, but this was the paper: arxiv.org/abs/2602.11988.

I just wanted to point out that the many “best practice” articles and guidance on AI are not very rigorous. So this isn’t even necessarily an argument against AI (the paper certainly isn’t one). Even so, it got pushback.

Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?

A widespread practice in software development is to tailor coding agents to repositories using context files, such as AGENTS.md, by either manually or automatically generating them. Although this practice is strongly encouraged by agent developers, there is currently no rigorous investigation into whether such context files are actually effective for real-world tasks. In this work, we study this question and evaluate coding agents' task completion performance in two complementary settings: established SWE-bench tasks from popular repositories, with LLM-generated context files following agent-developer recommendations, and a novel collection of issues from repositories containing developer-committed context files. Across multiple coding agents and LLMs, we find that context files tend to reduce task success rates compared to providing no repository context, while also increasing inference cost by over 20%. Behaviorally, both LLM-generated and developer-provided context files encourage broader exploration (e.g., more thorough testing and file traversal), and coding agents tend to respect their instructions. Ultimately, we conclude that unnecessary requirements from context files make tasks harder, and human-written context files should describe only minimal requirements.