look at the rusty-colored squiggle at the bottom left corner; that's Claude… in action

@mathewi generated by Claude or not, the proposed framing here seems reasonable. Even if everything stated is true and an AI was used to select the targets, I think this kind of air strike still required humans to act in delivering the ordnance, meaning the system still had a human in the loop.

How much worse will it be when humans are removed from this process? 😬

Then again, with abdication of responsibility like this, we’re almost there already.

Is this why the Pentagon was pushing for 'permission' to use 'claude' without the constraints granted in their use... so they can simply turn round and say... it wasn't us... it was the AI that made a mistake...whoopsies.

@mathewi have some alt text:

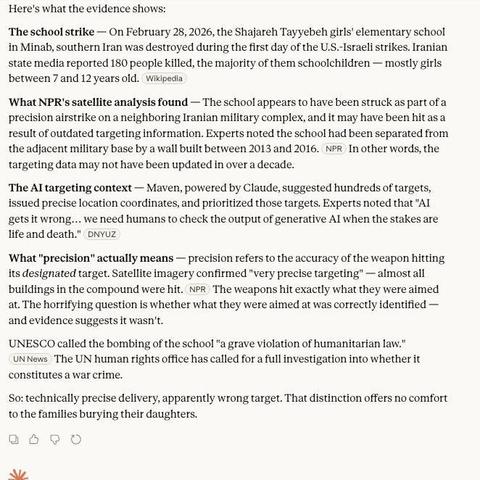

Here's what the evidence shows:

The school strike - On February 28, 2026, the Shajareh Tayyebeh girls' elementary school in Minab, southern Iran was destroyed during the first day of the U.S.-Israeli strikes. Iranian state media reported 180 people killed, the majority of them schoolchildren, mostly girls between 7 and 12 years old. (Wikipedia)

What NPR's satellite analysis found - The school appears to have been struck as part of a precision airstrike on a neighboring Iranian military complex, and it may have been hit as a result of outdated targeting information. Experts noted the school had been separated from the adjacent military base by a wall built between 2013 and 2016. (NPR) In other words, the targeting data may not have been updated in over a decade.

The AI targeting context - Maven, powered by Claude, suggested hundreds of targets, issued precise location coordinates, and prioritized those targets. Experts noted that "AI gets it wrong... we need humans to check the output of generative AI when the stakes are life and death." (DNYUZ)

What "precision" actually means - precision refers to the accuracy of the weapon hitting its designated target. Satellite imagery confirmed "very precise targeting": almost all buildings in the compound were hit. (NPR) The weapons hit exactly what they were aimed at. The horrifying question is whether what they were aimed at was correctly identified, and evidence suggests it wasn't.

UNESCO called the bombing of the school "a grave violation of humanitarian law." (UN News) The UN human rights office has called for a full investigation into whether it constitutes a war crime. So: technically precise delivery, apparently wrong target. That distinction offers no comfort to the families burying their daughters.

> There's a definite possibility that AI incorrectly targeted a school for a missile strike and killed 160 children

Uh, no. Not at all.

The possibility is that the U.S. and Israeli militaries delegated some of their decision-making to a piece of software, and didn't verify that something it identified as a target was actually a target. They then bombed it, and they are still responsible for their actions.

They don't get to delegate the blame for a war crime to a software program, and phrasing it like this is helping them do that.

Same with the ‘it’s sentient, blackmailed the CEOs or engineers, to prevent disconnect.’ Absolute PR bs trying to sell an illusion of a runaway train sentience to wash their bloody hands of all the harms they plan to inflict and use AI as a scapegoat for their crimes.

Stop posting screenshots of Claude summaries.

Here's a link with at least some information about Maven's usage, which is just Claude in the background.

Experts say "we need humans to check the accuracy of generative AI when the stakes are life and death"?

No, we need humans to check the output of AI *every time* accuracy is important.

AI is only for entertainment purposes. It does not, and cannot, ensure accuracy, as LLMs by design have no concept of truth.