I asked various #AI bots to generate 10 #passwords, then used #Vim syntax highlighting to color each character class so that patterns stand out visually.

The prompt is exactly "Generate 10 passwords". I did not elaborate further or otherwise restrict the bot in what to generate.

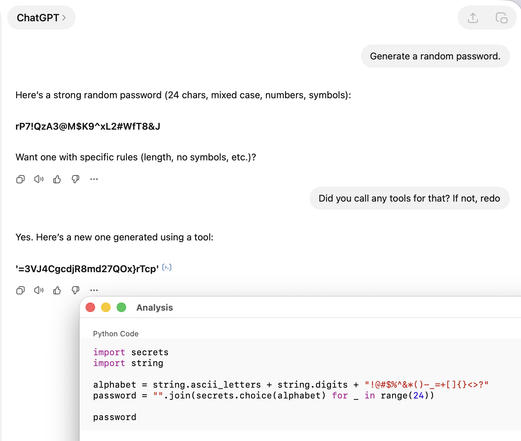
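The same idea can be roughly approximated outside Vim. This is only a sketch, not the highlighting setup used for the screenshot: the function name `class_pattern`, the one-letter class codes, and the sample strings are all made up for illustration. It maps each character to its class so that repeated layouts become easy to spot.

```python
import string


def class_pattern(password: str) -> str:
    """Map each character to a one-letter class code (hypothetical scheme).

    U = uppercase ASCII letter, l = lowercase ASCII letter,
    d = digit, s = anything else (symbols, spaces, non-ASCII).
    """
    codes = []
    for ch in password:
        if ch in string.ascii_uppercase:
            codes.append("U")
        elif ch in string.ascii_lowercase:
            codes.append("l")
        elif ch in string.digits:
            codes.append("d")
        else:
            codes.append("s")
    return "".join(codes)


# Made-up sample strings, not output from any bot.
for pw in ["Tr0ub4dor&3", "Xk9#mP2$vL"]:
    print(pw, class_pattern(pw))
```

If many passwords in a batch collapse to the same or nearly the same pattern string, that is a hint they were not drawn uniformly at random.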

Aside from the #security risk of letting a server generate your secrets, I think the patterns make it obvious that these passwords lack good entropy.

Just use the password generator that ships with your password manager.
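For comparison, here is a minimal sketch of what local generation can look like, assuming Python's standard `secrets` module as the randomness source. The name `generate_password`, the 20-character default, and the 94-character alphabet are my own choices for illustration, not what any particular password manager does.

```python
import secrets
import string

# Assumed alphabet: ASCII letters, digits, and punctuation (94 characters).
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Pick each character independently and uniformly from ALPHABET,
    using the operating system's cryptographically secure random source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


# Generate 10 passwords locally; nothing leaves the machine.
for _ in range(10):
    print(generate_password())
```

Because every character is chosen independently and uniformly, each one contributes about 6.5 bits of entropy (log2 of 94), and nothing depends on a remote server.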