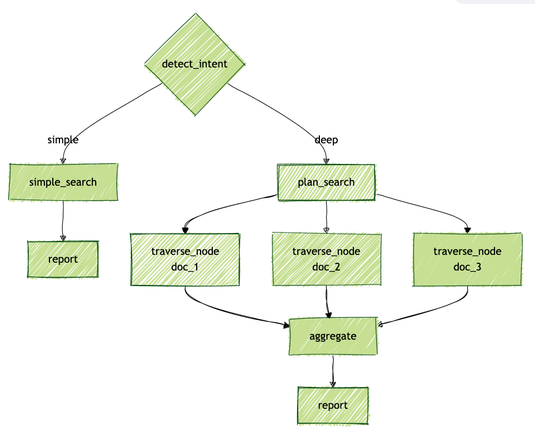

Been working through what "dynamic pipelines done right" looks like in practice.

The key insight: separate what the *framework* should control from what the *agent* should control.

In ZenML's dynamic pipelines, responsibilities split cleanly:

ZenML controls:

→ Fan-out (spawn N agents based on runtime data)

→ Budget and depth limits

→ Step orchestration and DAG construction

→ Artifact tracking and lineage

→ Failure handling per step (retries, fallbacks)
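
To make the framework side of that split concrete, here's a minimal plain-Python sketch. It is not ZenML's API: `Limits`, `RunLog`, `run_step`, and `fan_out` are hypothetical names invented for illustration, and the depth limit is left out to keep it short. The point is only that the fan-out cap, retries, fallback, and lineage all live outside the agent callable.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional


@dataclass
class Limits:
    # Hypothetical knobs, owned by the framework rather than the agent.
    max_agents: int = 3   # budget: cap on fan-out width
    max_retries: int = 2  # failure handling: attempts per step


@dataclass
class RunLog:
    # Stand-in for artifact tracking and lineage.
    lineage: List[dict] = field(default_factory=list)


def run_step(agent: Callable[[Any], Any], item: Any,
             limits: Limits, log: RunLog) -> Optional[Any]:
    """Run one agent call with retries; record what happened either way."""
    last_error: Optional[Exception] = None
    for attempt in range(1, limits.max_retries + 1):
        try:
            output = agent(item)
        except Exception as exc:  # broad on purpose: any agent failure is retried
            last_error = exc
            continue
        log.lineage.append({"input": item, "output": output, "attempt": attempt})
        return output
    # All attempts failed: record the error and fall back to None.
    log.lineage.append({"input": item, "output": None, "error": str(last_error)})
    return None


def fan_out(agent: Callable[[Any], Any], items: List[Any],
            limits: Limits, log: RunLog) -> List[Optional[Any]]:
    """Spawn one step per runtime item, never more than the budget allows."""
    selected = items[: limits.max_agents]
    return [run_step(agent, item, limits, log) for item in selected]
```

Calling `fan_out(my_agent, items, Limits(), RunLog())` with any callable as `my_agent` runs at most three steps, and the agent never sees the retry count or the log. In a real setup the orchestrator would own those concerns the same way.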