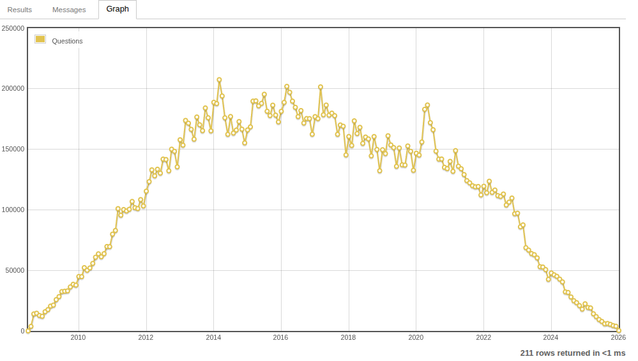

This chart shows the total number of Stack Overflow questions asked each month. As you can see, AI summaries in Google and AI coding tools have nearly killed the site. It is only a matter of time before the site shuts down completely. The golden age of independent news, blogs, forums, and specialized sites like Stack Overflow is over. Whether this is good or bad, only time will tell. Personally, I think we are now restricting all internet traffic to just a few Gen AI apps. https://data.stackexchange.com/stackoverflow/query/1926661#graph

@nixCraft The problem is much, much larger. All AI tools simply regurgitate what's already on the independent sites. If these sites shut down, there will be no more new information, and we will all be stuck in 2025 forever.

@accountalreadyinuse @nixCraft You forget that there are lots of other sources of training info, like documentation, open-source code and closed-source code that they promise you that they are not using to train.

In fact, the main reason for me to improve the documentation of a library I maintain by adding lots and lots of examples is to improve AI results so people don't start asking "why calling .downloadToDisk() isn't working?" when downloadToDisk is a method that lots of AI tools bullshitted.

@qgustavor @accountalreadyinuse @nixCraft Problem is that a lot of those special pages will do everything to shut out any LLM reader sources

@gullevek @accountalreadyinuse @nixCraft Why would they? Other than to prevent DDoSing? I assume everyone using GitHub (public or private) has their data scraped, and the same goes for any other Git provider (even Codeberg, considering public repos), because how do you block AI without blocking the git CLI? As for documentation, if it's in a public git, it's game over.

@qgustavor @accountalreadyinuse @nixCraft A lot of those pages, like Codeberg, put things in place to stop LLM crawlers. And if more and more of the actual answers and code end up behind locked doors, the LLMs can't do more than read the documentation. And they are already extremely bad at that.

@gullevek @accountalreadyinuse @nixCraft

You are wrong, it's not possible to stop AI crawlers on systems designed for automated operation (like git CLI, RSS/Atom). Techniques like trying to detect botting are easily circumvented. You can't add Anubis (or similar tools that add proof of work or even a captcha) because it would break the underlying protocol. So, assume anything on a public git is used for training, including documentation. Also, AI companies are pushing AI browsers, which mess things up even more. Even Firefox! It's an arms race, sure, but public git repos, because of their design, are just way too easy targets.

@qgustavor @accountalreadyinuse @nixCraft I am sure this will be the end of some public repos, as sad as it sounds.