I finally got the data transfer from the AISC110C high-speed camera sensor working!

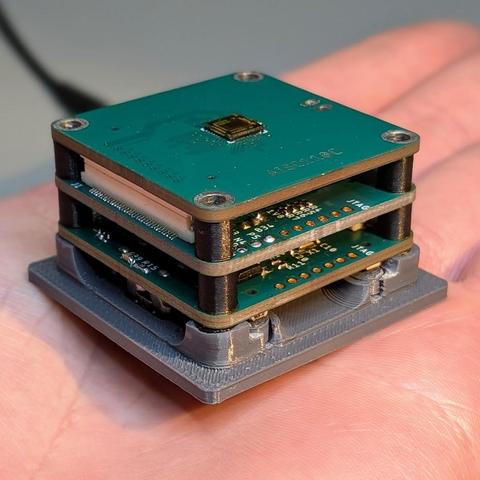

It's a 5€ chip that outputs 80x120 video at up to 40k fps.

Data is read out by a Xilinx Spartan-7 FPGA and transmitted over USB 3 by a Cypress FX3, each on its own little PCB.
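For a feel of what that link has to carry, here is a back-of-the-envelope estimate of the raw stream rate. The resolution and frame rate are the figures quoted above; the bit depth is not stated here, so `BITS_PER_PIXEL = 8` is purely an assumption, and the USB figure is the nominal 5 Gbit/s SuperSpeed signalling rate with 8b/10b coding, before any protocol overhead.

```python
# Rough throughput estimate for the raw video stream (a sketch, not a measurement).
WIDTH, HEIGHT = 80, 120              # resolution quoted in the post
MAX_FPS = 40_000                     # "up to 40k fps" quoted in the post
BITS_PER_PIXEL = 8                   # ASSUMPTION: bit depth is not given in the post

USB3_LINE_RATE_BPS = 5_000_000_000   # USB 3.0 SuperSpeed signalling rate
USB3_CODING_EFFICIENCY = 8 / 10      # 8b/10b line coding; protocol overhead ignored


def stream_rate_bps(width, height, fps, bits_per_pixel):
    """Raw pixel data rate in bits per second, without blanking or headers."""
    return width * height * fps * bits_per_pixel


rate = stream_rate_bps(WIDTH, HEIGHT, MAX_FPS, BITS_PER_PIXEL)
usable = USB3_LINE_RATE_BPS * USB3_CODING_EFFICIENCY

print(f"pixel rate:  {WIDTH * HEIGHT * MAX_FPS / 1e6:.0f} Mpixel/s")
print(f"stream rate: {rate / 1e9:.3f} Gbit/s")
print(f"share of USB 3 payload ceiling: {rate / usable:.0%}")
```

Under that 8-bit assumption the stream sits below the USB 3 ceiling; a higher bit depth would scale the number linearly, and real-world throughput will be lower than the nominal ceiling.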

The front PCB is interchangeable, making this a neat modular platform. I already have an analog video frontend with the ADV7182 and am working on a Camera Link interface.

Videos are coming tomorrow; I need more light for the high frame rates.
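Why more light? A quick sketch of the arithmetic, assuming the exposure time can be at most one frame period (how this sensor actually controls exposure is not covered here, so treat that as an assumption). The 1,000 fps reference point is only for comparison.

```python
# Upper bound on exposure time per frame, assuming exposure <= frame period.
def max_exposure_us(fps):
    """Longest possible exposure in microseconds at a given frame rate."""
    return 1e6 / fps


REFERENCE_FPS = 1_000  # arbitrary comparison point, not from the post

for fps in (1_000, 10_000, 40_000):
    exposure = max_exposure_us(fps)
    # Light collected per frame scales with exposure time, so the same
    # brightness needs this many times more illumination than at the reference.
    light_factor = max_exposure_us(REFERENCE_FPS) / exposure
    print(f"{fps:>6} fps: <= {exposure:7.1f} us exposure, "
          f"{light_factor:.0f}x the light needed at {REFERENCE_FPS} fps")
```

At 40k fps each frame gets at most 25 µs of exposure, so the scene has to be lit far more brightly than for ordinary video.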