🧵

#39c3

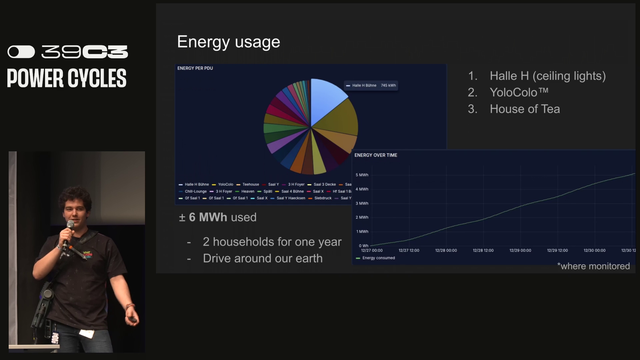

The congress used roughly 6 MWh of power (monitored usage only). The biggest consumers were:

1) Halle H (ceiling lights)

2) YoloColo

3) House of Tea

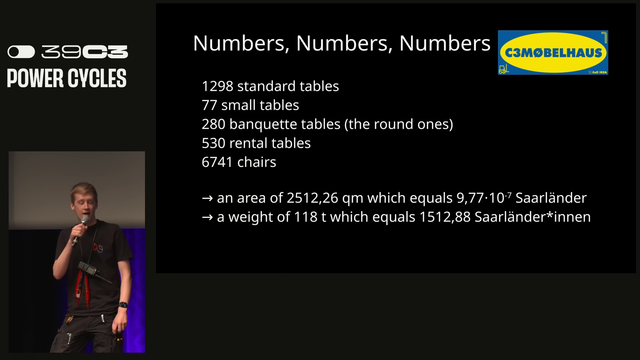

c3mobelhaus deployed:

* 1298 standard tables

* 77 small tables

* 280 banquet tables

* 530 rentable tables

* 6741 chairs

All of this weighed 118 tonnes. #39c3

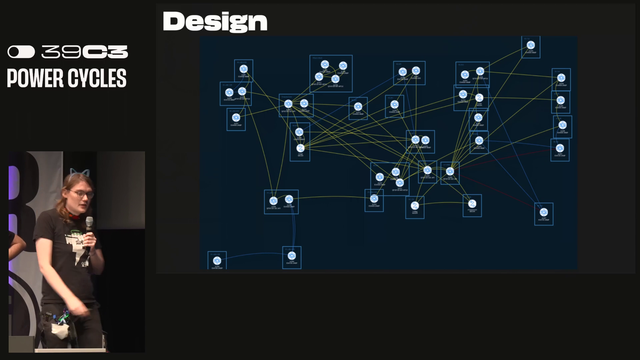

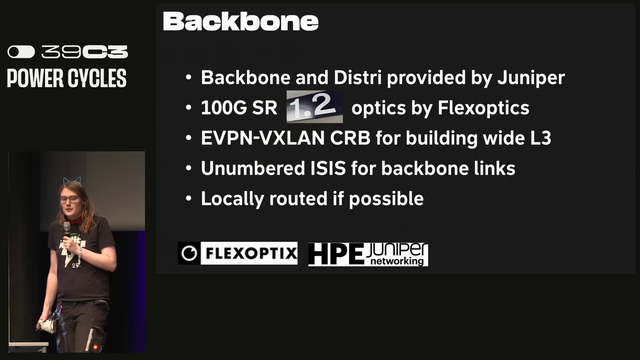

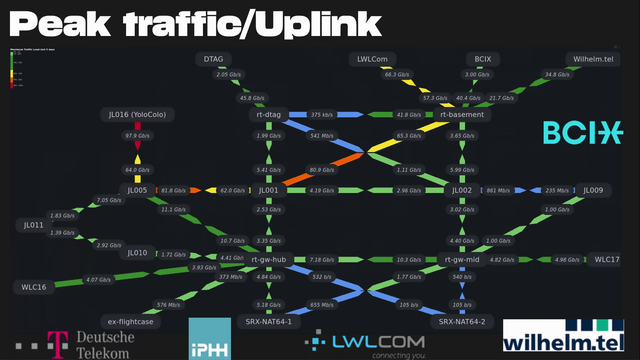

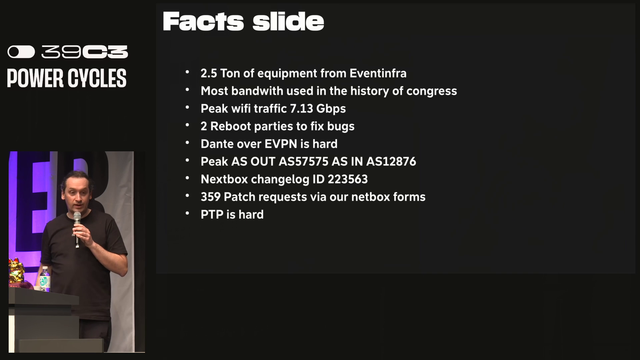

All of these links are 100 Gbps. Backbone and distribution ran on Juniper gear. The optics were provided by Flexoptix. EVPN-VXLAN CRB was used for building-wide L3. #39c3

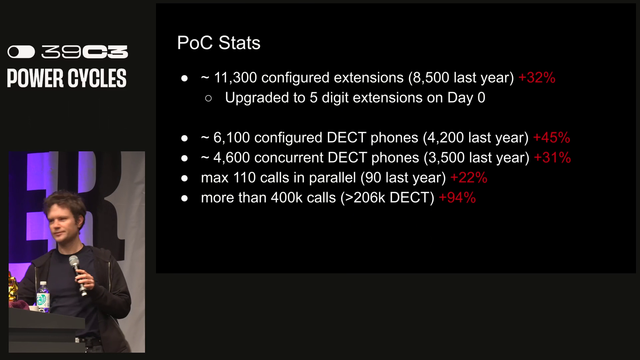

Next up is the Phone Operations Center (POC). They deployed:

* ~11 300 configured extensions (8 500 last year), an increase of more than 32%

* 6 100 configured DECT phones

* 4 600 concurrent DECT phones

* Max 110 calls in parallel

* More than 400k calls were made (>206k DECT)
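As a quick sanity check on the growth figure for configured extensions, here is a small sketch of the arithmetic. The helper name `percent_increase` is made up for illustration; the two inputs are the rounded extension counts from the list above.

```python
def percent_increase(old: float, new: float) -> float:
    """Relative growth from old to new, expressed in percent."""
    return (new - old) / old * 100.0


# Rounded extension counts quoted in the list above (last year, this year).
growth = percent_increase(8500, 11300)
print(f"{growth:.1f}%")  # prints "32.9%"
```

Since the source numbers are approximate, the result is only good to about one decimal place, which is consistent with "more than 32%".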

A problem this year was SIP war dialing. The team had seen occasional war dialing in previous years, but this year it was a bigger challenge. Rate limiting may be implemented in the future.
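The talk did not say how rate limiting would be built, so the following is only a sketch of one common approach, a per-caller token bucket. Everything in it is an assumption for illustration: the class name `CallRateLimiter`, the parameters `rate_per_sec` and `burst`, and the idea of keying on a caller identifier. It is not the POC's actual design.

```python
import time


class CallRateLimiter:
    """Hypothetical per-caller token bucket: each call attempt costs one token."""

    def __init__(self, rate_per_sec: float, burst: int, clock=time.monotonic):
        self.rate = rate_per_sec   # tokens refilled per second
        self.burst = burst         # maximum tokens a caller can accumulate
        self.clock = clock         # injectable clock, handy for testing
        self._buckets = {}         # caller -> (tokens, last_seen_timestamp)

    def allow(self, caller: str) -> bool:
        now = self.clock()
        # New callers start with a full bucket.
        tokens, last = self._buckets.get(caller, (float(self.burst), now))
        # Refill according to elapsed time, capped at the burst size.
        tokens = min(float(self.burst), tokens + (now - last) * self.rate)
        if tokens >= 1.0:
            self._buckets[caller] = (tokens - 1.0, now)
            return True
        self._buckets[caller] = (tokens, now)
        return False


# Example: allow bursts of 3 call attempts, refilling one attempt per second.
limiter = CallRateLimiter(rate_per_sec=1.0, burst=3)
attempts = [limiter.allow("sip:1234") for _ in range(3)]
```

A war dialer stepping through extensions would exhaust its bucket almost immediately, while a normal caller making a few calls would never notice the limit. The right values for rate and burst would have to come from real traffic data.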

They handed out 171 phones on loan to organizers. There is an eSIM provisioning service, and Spanish and Polish were added as additional languages. The following was deployed:

* 70 SIP Phones

* 75 DECT Antennas

* 7 EPDDI Antennas

Operational stats:

* 2331 SIM cards (902 physical and 1429 eSIMs)

* 1424 concurrently "active" devices

* 11967 calls

* 9007 SMSs

* 2 Open5GS crashes, a massive decrease from last year. #39c3

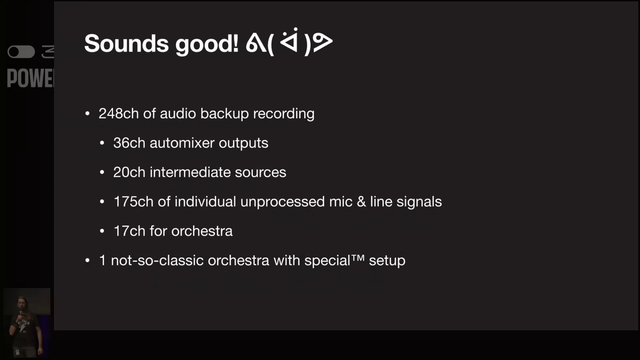

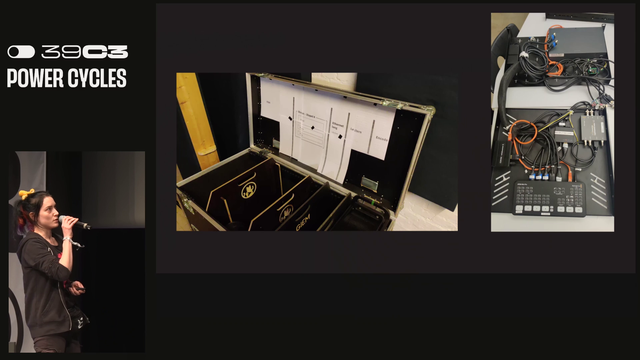

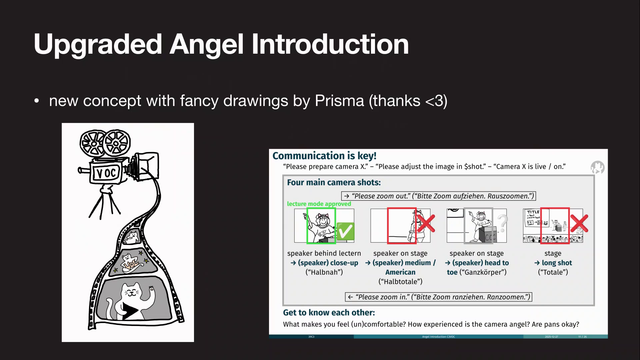

Next up is the Video Operations Center (VOC), or the vertical operations center 😂

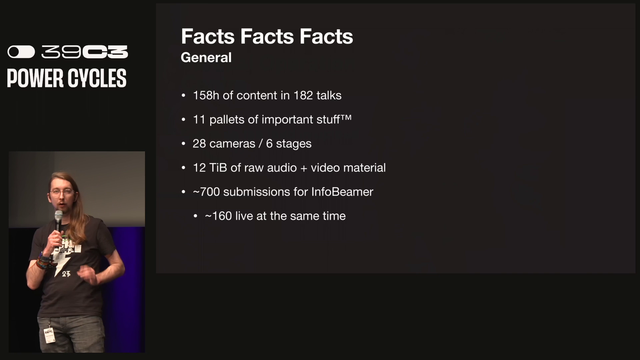

Stats:

* 154h of content in 182 talks

* 11 Pallets of important stuff

* 28 cameras / 6 stages

* 12 TiB of raw audio + video material

* ~700 Submissions for infobeamer content. #39c3

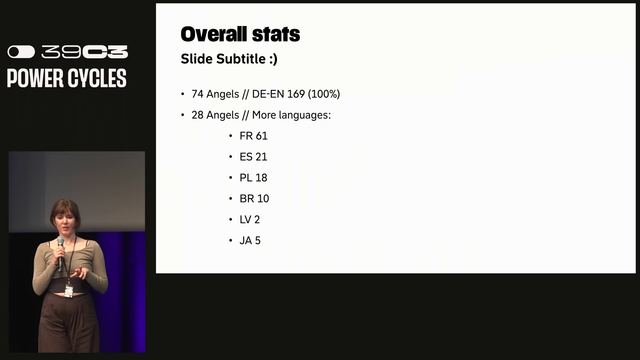

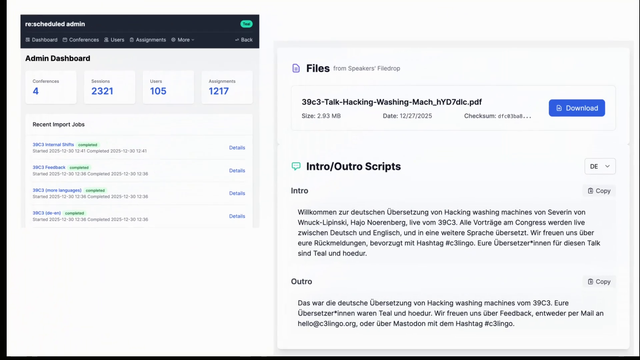

Next up is the c3lingo team, which handles the translations for the event.

74 Angels translated German to English

28 Angels translated various other languages (French, etc.). #39c3

@jarednaude They mentioned a more in-depth talk concerning their automation stack. Any clues where I could find that? Would be highly interested.