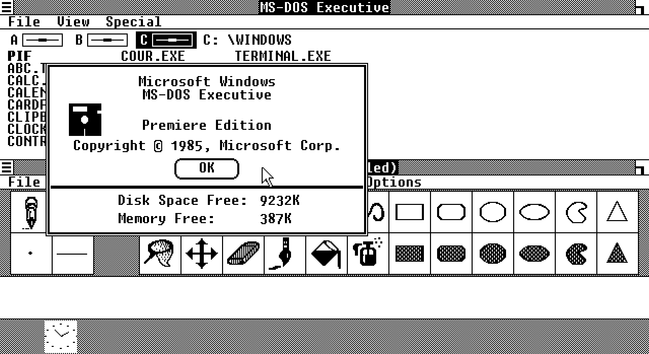

I discovered a wonderful hack that likely would allow me to run Windows 2 on my vintage Apricot PC Xi before the New Year.

Quick recap: Apricot PC is a British computer from 1983, not compatible with the IBM PC. It had a Windows 1 port, but not Windows 2, and thus couldn't run Word, Excel, or Illustrator. With a bit of driver-writing, I managed to start Windows 2 on it, but my video driver is rudimentary and cannot be used for practical purposes. Windows video drivers are super-complicated, so I was fully expecting to spend over a month writing one (at least there are docs for everything!)

But I just discovered a way to run Windows 2 with Windows 1 video drivers. So if I had a Windows 1 driver for Apricot, I could use it in Windows 2. Of course, it's never that simple...

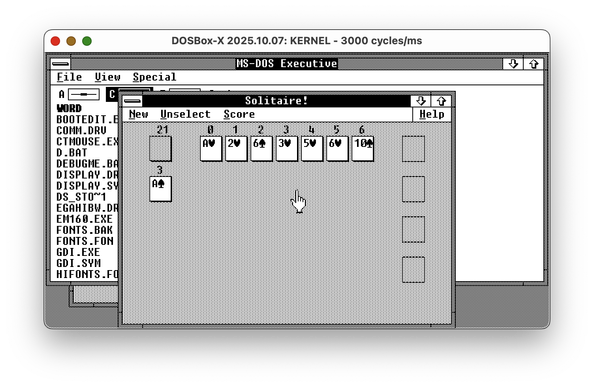

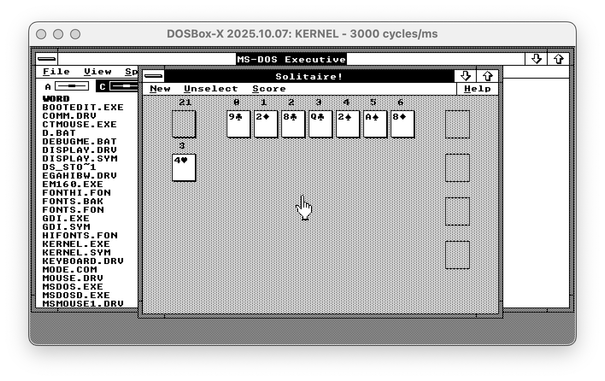

Find the difference between Windows 2 with Win1 driver and Windows 2 with the real Win2 driver - both are EGA 640x350!

🧵 thread with a few more screenshots and pointers