Finally tried gpt-5-mini on some tasks, hoping that at "minimal" reasoning it would be faster for user-blocking inference than gpt-4.1-mini or claude-haiku-4.5.

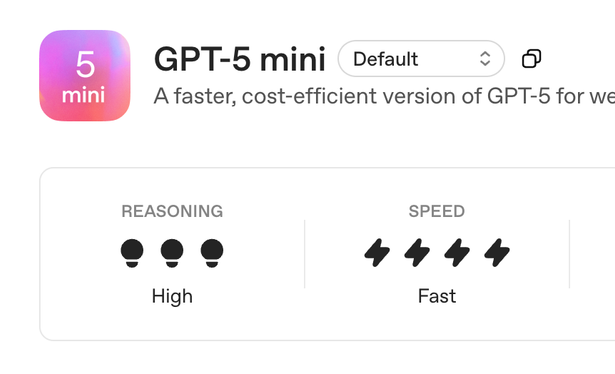

Nope, it's a dog. So much so that I asked two other teams, and they said 5-mini is so slow and unreliable that they can't use it either. Apparently OpenAI's rating of "4 lightning bolts" means "takes 5000-10000 ms to process 200 tokens", so that's good to know 💫