This will crash the global economy

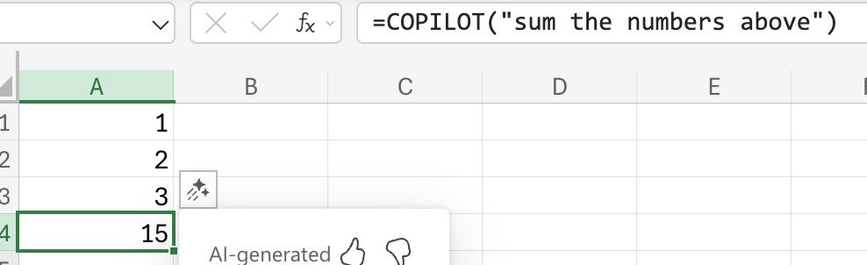

@eb It's always so baffling to me that people made computers do extremely complicated math and logic to give wrong results in simple arithmetic. And they sell that as a product that's supposed to be a great technological leap forward

@Kiloku @eb If you use a hammer to eat soup you generally don't end up with good results.

If you want to sum numbers use the sum function, not one that predicts the next most probable token. I dislike this type of bashing of LLMs because it's trivial to dismiss (ok they can't do trivial maths, but they can write an entire piece of software for me). There are much more risky outputs that could be used as an example. Funky Excel formulas have always existed...

@nicolaromano @Kiloku @eb thing is they can barely write working python scripts and webshit. And that's for the state of the art models...

@fcalva @nicolaromano @Kiloku @eb The frightening thing is that they are generating functional software at this point. Idk how much handholding they need in the present day (because I actually enjoy what I do and I have zero interest in crowdfunding the Torment Nexus), but it started polluting the tech-adjacent maker side of YouTube almost immediately. I've watched multiple people who clearly aren't experienced programmers, but are persistent enough to go back and forth with an earlier, jankier version of ChatGPT to eventually end up with a functional program, and I'm led to believe that some of the more code-focused models/platforms have gotten a lot less hand-holdy.

I'm just waiting to see how much more data breach coverage costs next time I renew my business insurance, lmao.

@gordoooo_z @fcalva @Kiloku @eb

Part of the danger is that writing syntactically correct programs is something that can be bolted onto the LLM output side, which makes it look much more competent than it actually is
