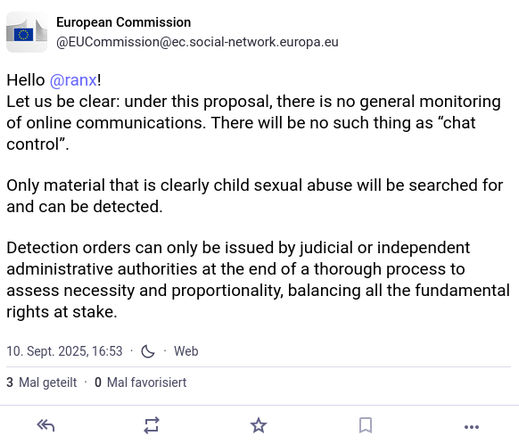

🇩🇪EU Commission, now in all seriousness: "There is no #Chatkontrolle."

Their fantasy: a magical, 100% perfect algorithm that finds only CSAM.

The reality: to find anything, you have to scan everything.

Stop the mass surveillance lie! #StopScanningMe

https://ec.social-network.europa.eu/@EUCommission/115180569539039179